⚠️ Important information

All models already include the Lightning LoRAs, except the SVI models, where Lightning is not included.

Do not use additional Lightning LoRAs on models that already have Lightning integrated, or the quality will be degraded.

For SVI models, you can use Lightning LoRAs if you want faster video generation.

2 or 3 KSampler workflow (for SVI): https://civarchive.com/models/2079192?modelVersionId=2668801

v2.1 (for nsfw v2) https://civarchive.com/models/2079192?modelVersionId=2562360

v2.1 with MMAUDIO (for nsfw v2) by @huchukato https://civarchive.com/models/2320999?modelVersionId=2613591

Another triple KSampler workflow: https://civarchive.com/models/1866565/wan22-continuous-generation-svi2-pro-or-gguf-or-32-phase-or-upscaleinterpolate-w-subgraphs-and-bus?modelVersionId=2559451

The triple KSampler setup allows for more motion and helps prevent slow-motion issues. In exchange, your videos will take longer to generate.

If you have issues with my SVI workflow, you can try Kijai's workflow: https://github.com/user-attachments/files/24364598/Wan.-.2.2.SVI.Pro.-.Loop.native.json or the FMLF example workflows: https://github.com/wallen0322/ComfyUI-Wan22FMLF/tree/main/example_workflows (both are simpler). There are others on Civitai that work very well too.

Qwen-VL workflow as an alternative to Grok for creating your dynamic NSFW prompts. Thanks @huchukato for his work: https://civarchive.com/models/2320999?modelVersionId=2611094

🟣 SVI Update – NSFW

⚡ Model Presentation (SVI-compatible version)

This update was made because the NSFW V2 models were not fully compatible with SVI LoRAs.

This version was created to work smoothly with SVI, while still functioning without them (though the workflow must be adapted).

Be careful: SVI LoRAs only work with a workflow specifically designed for them.

There are two models available for SVI:

✔ Fast Move (FM) – Sexual scenes may differ from the Consistent Face model and will generally be faster.

✔ Consistent Face (CF) – Slightly better image quality, which may be preferable for anime-style videos; sexual scenes differ from Fast Move, but the difference is minimal.

You can also mix models between High and Low LoRAs:

FM (Fast Move) as High + CF (Consistent Face) as Low

CF (Consistent Face) as High + FM (Fast Move) as Low

Both combinations work and give slightly different results, offering more flexibility for your videos.

For this version, the main improvements include:

✔ Fully adapted for SVI LoRAs

✔ Greater flexibility: Lightning and SVI LoRAs must be loaded manually for custom workflows

🟣 SVI LoRAs – Strengths & Weaknesses

⚡ Overview

✅ Strengths

Best solution for making long videos

Excellent transitions between video segments

Reduced degradation compared to other solutions

Strong character coherence: the model retains information from the previous video, helping maintain consistency

⚠️ Weaknesses

Weaker prompt understanding

Weaker camera understanding

Videos are less dynamic

Sometimes slow-motion effect

(can be improved with proper Lightning LoRAs, dynamic prompts or triple ksampler)

🟣 SVI LoRAs – Download Links

⚡ Download

Note: Both LoRAs must be loaded manually in your workflow.

🟣 Suggested Lightning LoRA Combos (Optional)

⚡ Overview

You don’t have to use these Lightning LoRA combos. They are optional and allow you to fine-tune motion and degradation.

You can also use other Lightning LoRAs or assign different combos per video for more control.

🔥 Combo 1 – More Motion (Rapid Video Degradation)

High LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 4

or

💜 Combo 2 – Less Image Degradation

or

or

🟢 Combo 3 – Balanced Motion / Moderate Degradation

High LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 3

Low LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 1.5

🧠 Advanced Usage Tip

You can disable global Lightning LoRAs (in my wf) and assign different combos per video:

Combo 1 for Video 1, Combo 3 for Video 2, Combo 2 for Video 3.

Each combo produces different motion and degradation behavior.
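The per-video assignment described above can be written down as a small lookup table. This is only a sketch: `LightningCombo` and `plan_segments` are hypothetical names, the weights are the ones listed in this description, and any weight the description does not list is left as `None` rather than guessed.

```python
from typing import NamedTuple, Optional


class LightningCombo(NamedTuple):
    """One Lightning LoRA combo (hypothetical container, not a ComfyUI type)."""
    label: str
    high_weight: Optional[float]  # None = not listed in the description
    low_weight: Optional[float]   # None = not listed in the description


# Weights copied from the description; missing values are left as None.
COMBOS = {
    1: LightningCombo("More Motion (Rapid Video Degradation)", 4.0, None),
    2: LightningCombo("Less Image Degradation", None, None),
    3: LightningCombo("Balanced Motion / Moderate Degradation", 3.0, 1.5),
}


def plan_segments(combo_ids):
    """Pair each video segment (1-based) with the combo chosen for it."""
    plan = []
    for video_number, combo_id in enumerate(combo_ids, start=1):
        if combo_id not in COMBOS:
            raise KeyError(f"Unknown combo id: {combo_id}")
        plan.append((video_number, COMBOS[combo_id]))
    return plan


if __name__ == "__main__":
    # Combo 1 for Video 1, Combo 3 for Video 2, Combo 2 for Video 3.
    for video_number, combo in plan_segments([1, 3, 2]):
        print(f"Video {video_number}: {combo.label} "
              f"(high={combo.high_weight}, low={combo.low_weight})")
```

Keeping the plan as data makes it easy to try a different order of combos per segment without touching the rest of the setup.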

If you want to create several-minute-long videos while maintaining high quality, it is possible, but it will take a very long time. You just need to avoid using Lightning LoRAs and use the full model instead.

🧠 Dynamic Prompts – Better Control & More Motion

Dynamic prompts give you better control and help make the video more dynamic.

Give one of the example prompts below to an LLM such as ChatGPT, describe your image and the video you want, and ask it to keep the same prompt structure as the example.

⚠️ If the prompt is NSFW, ChatGPT will not accept it.

In that case you can use Grok (https://grok.com), which can make NSFW prompt modifications.

For more examples of prompts with different poses, check:

Enhanced FP8 Model.

Give these prompts to GLM with the dynamic prompt structure if you want.

Example 1

(At 0 seconds: Wide shot showing a slightly overweight man casually walking down a city street, camera fixed in front, urban environment with buildings and cars.)

(At 1 second: Suddenly, a massive shark bursts from the pavement ahead, looking terrifying at first, pavement cracking, dust and debris flying, camera from side angle.)

(At 2 seconds: Medium shot from the side, the man stumbles backward in shock, while the shark dramatically slows down and strikes a comically exaggerated sexy pose, revealing large, exaggerated shark breasts, covered by a colorful bikini.)

(At 3 seconds: Close-up on the man’s face, eyes wide in disbelief, as he turns to look at the shark, small cartoon-style hearts floating above his head to emphasize his amazement, camera slightly low-angle.)

(At 4 seconds: Dynamic travelling shot showing the man frozen in the street, the shark maintaining its sexy pose, water splashes and debris still moving realistically, urban chaos around.)

(At 5 seconds: Wide cinematic shot pulling back, showing the man standing in the street, staring at the bikini-wearing shark with hearts above his head, epic perspective highlighting absurdity and humor.)

Example 2 – Anime NSFW

(At 0 seconds: The couple in a cozy bedroom, anime style, soft lighting highlighting their intimate embrace, her back arched slightly as he positions himself.)

(At 1 second: The man’s hips moving rhythmically, the head of his penis sliding effortlessly into her vagina, her body responding with a gentle, fluid motion, anime-style motion lines emphasizing the smooth penetration.)

(At 2 seconds: Her back arching deeply against him to intensify the pleasure, hips swaying with each thrust, breasts bouncing subtly, small hearts floating around them to capture the erotic energy.)

(At 3 seconds: Her face, eyes closed in bliss, a soft moan escaping, hands resting behind her head, anime-style blush on her cheeks, the air filled with a seductive aura.)

(At 4 seconds: The man penetrating her deeply, her body moving in sync with his, the bed sheets slightly rumpled, the room’s warm lighting enhancing the intimate, lustful atmosphere.)

(At 5 seconds: The couple locked in a passionate embrace, the scene exuding vibrant, seductive energy, anime style with smooth lines and soft shadows.)

🟣 Lightning Edition – NSFW I2V V2

⚡ Model Presentation (2 new versions available)

I originally planned to release only one V2, but some people preferred the NSFW V1 over the Fast Move V1 version, so depending on what you’re looking for, one version may suit you better than the other.

For these V2 versions, I tried a new approach:

✔ I made sure that most sexual poses work, while the model is also good for SFW content

✔ More flexible for general use

🔥 NSFW Fast Move V2

Improvements included in this version:

Better prompt understanding

Better camera understanding

Reduced unnecessary back-and-forth movements outside sexual poses

(cannot be completely removed, but strongly reduced)

Improved bounce effect on the buttocks

If a man appears, he will no longer automatically attempt to penetrate the woman when she is nude

This version is designed for those who want more dynamic scenes with more movement.

💜 NSFW V2

The difference between this version and NSFW Fast Move V2:

Less camera control

Less camera understanding

But body movements are less pronounced (breasts and buttocks)

Some preferred V1 NSFW to V1 NSFW Fast Move, and this version keeps that spirit

For varied sexual poses, check the previews — there are many.

You can use the shown prompts and adapt them to your images, but of course, other prompts will work as well.

Don’t hesitate to use other LoRAs for creating specific concepts.

🔧 Recommended Settings

Steps: 2+2

(Jellai recommends 2+3 for even better results, and I agree)

Sampler: Euler simple

CFG: 1

This model already includes the Lightning LoRAs. Do not add them again, or the quality will be degraded.

You need to download both models: H for High and L for Low.
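As a rough illustration of what "2+2" and "2+3" mean, the sketch below splits a total step count between the High and Low models. It assumes a two-pass setup in which each pass receives a start and an end step (as ComfyUI's KSamplerAdvanced node does with `start_at_step` and `end_at_step`). The `split_steps` helper and the `StageRange` class are hypothetical names, and reading "Euler simple" as sampler `euler` with scheduler `simple` is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageRange:
    """Step window for one sampler pass (hypothetical helper, not a ComfyUI class)."""
    model: str           # "high" or "low"
    start_at_step: int   # first step handled by this pass
    end_at_step: int     # step at which this pass stops


# Assumed reading of the recommended settings: CFG 1, sampler "euler", scheduler "simple".
SHARED_SETTINGS = {"cfg": 1.0, "sampler_name": "euler", "scheduler": "simple"}


def split_steps(high_steps=2, low_steps=2):
    """Return the total step count and the step window of each pass."""
    if high_steps < 1 or low_steps < 1:
        raise ValueError("each model needs at least one step")
    total = high_steps + low_steps
    stages = [
        StageRange("high", 0, high_steps),
        StageRange("low", high_steps, total),
    ]
    return total, stages


if __name__ == "__main__":
    for high, low in [(2, 2), (2, 3)]:
        total, stages = split_steps(high, low)
        print(f"{high}+{low} -> total steps: {total}")
        for stage in stages:
            print(f"  {stage.model}: steps {stage.start_at_step} to {stage.end_at_step}")
```

Both passes share the same total step count; only the window each model covers changes between 2+2 and 2+3.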

Here is an example made with the base workflow + the First/Last Frame workflow + upscaler (only on the starting images) + inpainting (cum).

https://civarchive.com/images/114733916

🔞 LoRA Examples (with links and recommended weights)

LoRA: Penis Insert WAN 2.2

LoRA weight: 1

Face → Doggy

https://civarchive.com/images/112864483

Face → Missionary

https://civarchive.com/images/112864718

https://civarchive.com/images/112864835

Face → Doggy (leg aside)

https://civarchive.com/images/112864885

Another example

https://civarchive.com/images/112864972

Other examples with different LoRAs

Face → Reverse Cowgirl, LoRA weight: 0.7

https://civarchive.com/images/112865189

Face → Cowgirl, LoRA weight: 0.5

Face → Missionary, LoRA weight: 0.3

https://civarchive.com/images/112865381

Face → Missionary, LoRA weight: 0.5

Face → Doggy leg aside, LoRA weight: 0.6

https://civarchive.com/images/112865551

Face → Doggy, LoRA weight: 0.7

https://civarchive.com/images/112865696

Face → Spoon, LoRA weight: 0.7

https://civarchive.com/images/112868124

Recommended LoRA to obtain a very realistic vagina and anus:

https://civarchive.com/models/2217653?modelVersionId=2496754

💡 Tip for Anime Style:

If you’re working in an anime style, feel free to follow the advice of @g1263495582 (thanks to him for this).

Try these LoRAs together or separately; they help maintain face consistency:

🔹 Note:

For these LoRAs, use only Low Noise.

For the examples mentioned above, use High and Low Noise as indicated.

LoRA weight: 0.3

Many things can be done with the model, but don’t hesitate to use other LoRAs for specific purposes. And don’t hesitate to lower the LoRA strength to preserve the face as much as possible.

📷 Dynamic prompt example with different camera angles:

(at 0 seconds: wide shot showing the woman standing in the snowy plain, a massive giant dragon emerging behind her, snow cracking and dust rising).

(at 1 second: the woman jumps backward onto the dragon’s back as it bursts fully from the sky, camera tracking the motion from a side angle, debris and snow flying).

(at 2 seconds: medium shot from the side, the woman balances heroically on the dragon’s back as it begins to run forward across the snowy plain, slow-motion on her posture).

(at 3 seconds: close-up on the woman’s determined face, camera slightly low-angle to emphasize her heroic stance, snow and debris flying around).

(at 4 seconds: dynamic travelling shot alongside the dragon, showing the snowy plain, scattered debris and ice fragments flying everywhere as it gains speed).

(at 5 seconds: wide cinematic shot pulling back, showing the dragon taking off with the woman riding on its back, soaring above the snowy plain, epic perspective with snow, wind, and scale emphasizing the drama).

For available camera angles, check further down in the “cam V2” model description.

🔞 Normal Clip vs NSFW Clip:

You can also use the NSFW version for your clip.

It can bring positive effects for sexual scenes, but it can also cause issues, as in this example:

NSFW Clip:

https://civarchive.com/images/112864204

Normal Clip (same seed):

https://civarchive.com/images/112864295

Here are the links to NSFW clips (thanks to zoot_allure855 for correcting the BF16 version):

BF16 fixed version:

https://huggingface.co/zootkitty/nsfw_wan_umt5-xxl_bf16_fixed/tree/main

FP8 version:

https://huggingface.co/NSFW-API/NSFW-Wan-UMT5-XXL/tree/main?not-for-all-audiences=true

❓ If you have any questions, don’t hesitate to ask!

Many people ask me for help via private message. You can do that, no problem, but I would appreciate it if you asked in the comment section instead, since the answer could help other people. Thank you.

🟣 Lightning Edition – NSFW I2V camera prompt adherence

⚡ Model Presentation (2 versions available)

I decided to release 2 versions. Both can produce different results, as you can see in the previews (the seed was the same).

Fast Move Version: provides more movement, and the movements will be faster, with better prompt understanding and camera handling

(you can see it in the 4th preview "fast move high" where the man slaps the woman)

Natural Motion Version: offers more natural breast movements depending on the situation and produces slower scenes.

👉 Check both and choose the one that works best for you.

This NSFW edition is, of course, focused on sexual poses.

You should achieve very good results.

To create specific concepts, feel free to use specific LoRAs — they work very well with this model.

🔧 Recommended Settings

Steps: 2+2

(Jellai recommends 2+3 for even better results, and I agree)

Sampler: Euler simple

CFG: 1

This model already includes the Lightning LoRAs. Do not add them again, or the quality will be degraded.

You can also use this T5, which may improve understanding:

https://huggingface.co/NSFW-API/NSFW-Wan-UMT5-XXL/tree/main?not-for-all-audiences=true

📝 Usage Tips

Start with a clean, high-resolution image for better results.

The model will not change the face; if it does, increase the resolution.

Also adjust your prompt if you are not getting what you want.

Wan understands some terms well, but not all.

For prompts, check the video previews: adapt them to your image.

Other prompts will work too.

🎥 Training

I trained 2 LoRAs:

The first one using several videos and images of different sexual positions.

The second one to bring more dynamic motion.

Respect to everyone who creates this type of LoRA — it requires a lot of work.

🙏 Credits

This model wouldn’t exist without the incredible work of these creators:

alcaitiff : https://civarchive.com/models/1295758/nsfw-fluxorwan-22orqwen-mystic-xxx?modelVersionId=2300332

Sweet_Pixeline : https://civarchive.com/models/1844313/penis-play-wan-22

anonimoose : https://civarchive.com/models/2008663/slop-twerk-wan-22-i2v

dtwr434 : https://civarchive.com/models/1331682?modelVersionId=2098405

A special thanks to Alcaitiff and CubeyAI, two very kind and humble people.

🔔 Important

Please don’t support my work with buzzes here; I don’t need it.

If you want to support someone, support the creators listed above — they truly deserve it.

💬 Feedback

Feel free to give me feedback, positive or negative, to help improve future updates.

Update WAN 2.2 V2 CAM I2V – NEW feature Camera & Prompt Improvements

This custom version of WAN 2.2 I2V has been updated to deliver better prompt comprehension and improved handling of camera angles and cinematic movements. It provides more accurate scene interpretation, smoother transitions, and enhanced control over scene dynamics.

Key Features:

Excellent understanding of prompts and scene composition.

Supports various camera angles and movements, including zoom, dolly, pan, tilt, orbit, tracking, and handheld shots.

Ideal for cinematic storytelling, animated sequences, and creative image-to-video projects.

Supports flexible multi-step prompts. The standard setting is 4 sampling steps (2+2), which can be increased for higher fidelity.

Recommended sampler: Euler simple.

You can, of course, use your usual prompts; this is just one example among many.

Example Prompt with Different Camera Angles

(at 0 seconds: wide frontal shot of a man standing in front of an open fridge, cinematic lighting, subtle ambient kitchen reflections, the fridge contents visible, camera static).

(at 1 second: medium shot from the front as he opens the fridge fully, reaches for a can, slight zoom-in to emphasize the action, cinematic framing).

(at 2 seconds: camera shifts to a side medium shot, tracking him as he lifts the can to his mouth, fluid movement, maintaining lighting and reflections).

(at 3 seconds: camera starts a smooth 360-degree orbit around the man, following him as he drinks from the can, motion fluid, background slightly blurred for cinematic effect).

(at 4 seconds: close-up on his face and upper body while drinking, orbit continues subtly, fridge reflections accentuating realism, cinematic polish).

(at 5 seconds: final wide shot as he lowers the can, camera completes orbit to original angle, showcasing the kitchen space, lighting, and dynamic movement).
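The timestamped structure of the example prompt above can also be assembled programmatically, which helps when you write many variations. The sketch below is a minimal illustration: `build_dynamic_prompt` is a hypothetical helper, and it assumes one beat per second starting at 0, as in the examples on this page.

```python
def build_dynamic_prompt(beats):
    """Format one description per second into the '(at N seconds: ...).' structure."""
    lines = []
    for second, description in enumerate(beats):
        unit = "second" if second == 1 else "seconds"
        # Drop surrounding whitespace and a trailing period so punctuation is not doubled.
        text = description.strip().rstrip(".")
        lines.append(f"(at {second} {unit}: {text}).")
    # The examples separate beats with a blank line.
    return "\n\n".join(lines)


if __name__ == "__main__":
    prompt = build_dynamic_prompt([
        "wide frontal shot of a man standing in front of an open fridge, camera static",
        "medium shot from the front as he reaches for a can, slight zoom-in",
        "camera shifts to a side medium shot, tracking him as he lifts the can",
    ])
    print(prompt)
```

You can paste the resulting text directly into the positive prompt, or hand it to an LLM as the structure to follow.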

Available Camera Movements

Zoom / Dolly

zoom in

zoom out

camera zooms in on subject

camera zooms out gradually

dolly in

dolly out

camera dollies in slowly

camera dollies out steadily

crash zoom

Pan

pan left

pan right

camera pans across the scene

gentle pan left

sweeping pan right

Tilt

tilt up

tilt down

camera tilts up to reveal…

camera tilts down from…

Orbital / Tracking / Arc / Rotation

orbit around subject

360° orbit

camera circles around

tracking shot

camera tracks alongside subject

arc shot

curved camera movement

Other Movements & Styles

static camera / static shot

handheld shot

camera roll

Note:

LoRAs work perfectly with this model, offering full compatibility and consistent results across styles and concepts.

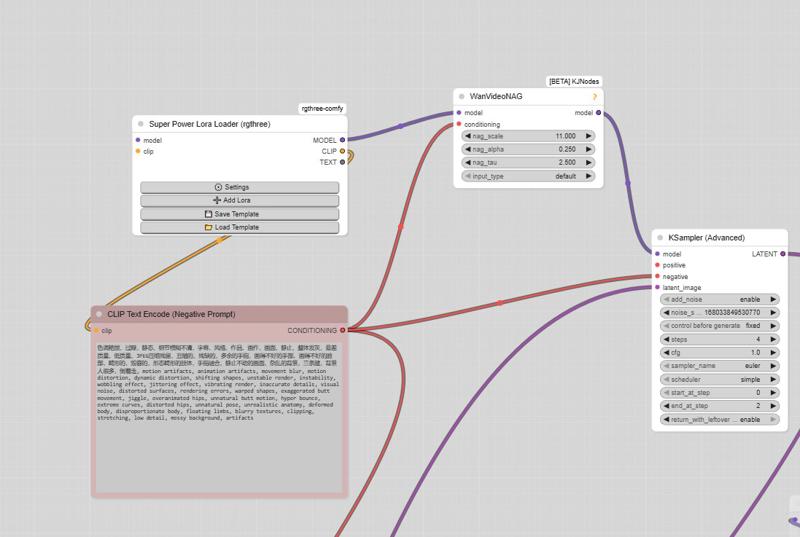

# SUPPLEMENTARY ADVICE

You can use negative prompts, but be careful: this will double the generation time.

Only use them if you really want to prevent something from appearing in your video.

In that case, enable the corresponding node; otherwise, keep it disabled.

⚠️ Important

The model must be used with CFG set to 1, so negative prompts do not work by default.

However, there is a simple way to enable them.

How to enable negative prompts:

Open the Manager

Search for KJNodes (ComfyUI-KJNodes) and install it

In your workflow, add the WAN Nag node

To use it correctly:

Connect this node after the LoRA Loader

Feed it with the negative prompt

Then connect it to the first KSampler (High)

👉 Only use this option when necessary, to avoid unnecessarily increasing generation time.

And here is the negative prompt for unwanted movements:

motion artifacts, animation artifacts, movement blur, motion distortion, dynamic distortion, shifting shapes, unstable render, instability, wobbling effect, jittering effect, vibrating render, inaccurate details, visual noise, distorted surfaces, rendering errors, warped shapes, exaggerated butt movement, jiggle, overanimated hips, unnatural butt motion, hyper bounce, extreme curves, distorted hips, unnatural pose, unrealistic anatomy, deformed body, disproportionate body, floating limbs, blurry textures, clipping, stretching, low detail, messy background, artifacts, butt bounce, moving hips, swinging hips, shaking butt, wiggling butt, moving lower body, moving pelvis, jiggling buttocks, bouncing butt, unstable stance, unnatural hip motion, exaggerated hip movement, hip sway, hip rotation, bottom motion, pelvis motion, wobbling hips, fidgeting lower body, dancing hips, pelvic movement, motion blur, unnatural movement

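And here is the negative prompt from the official ComfyUI workflow:

色调艳丽,过曝,静态,细节模糊不清,字幕,风格,作品,画作,画面,静止,整体发灰,最差质量,低质量,JPEG压缩残留,丑陋的,残缺的,多余的手指,画得不好的手部,画得不好的脸部,畸形的,毁容的,形态畸形的肢体,手指融合,静止不动的画面,杂乱的背景,三条腿,背景人很多,倒着走

English translation, for reference: vivid colors, overexposed, static, blurry details, subtitles, style, artwork, painting, picture, still, overall grayish, worst quality, low quality, JPEG compression artifacts, ugly, incomplete, extra fingers, poorly drawn hands, poorly drawn face, deformed, disfigured, malformed limbs, fused fingers, motionless frame, cluttered background, three legs, many people in the background, walking backwards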

Note: If you use the default workflow without the node, this negative prompt will not work.
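Why a negative prompt has no effect at CFG 1 follows from the standard classifier-free guidance formula, `uncond + cfg * (cond - uncond)`: at `cfg = 1` the negative (unconditional) term cancels out. The sketch below shows this with plain Python lists standing in for model outputs. `cfg_combine` is a hypothetical helper for illustration only, and the WAN Nag node uses a different mechanism that this sketch does not model.

```python
def cfg_combine(cond, uncond, cfg):
    """Standard classifier-free guidance: uncond + cfg * (cond - uncond), element-wise."""
    return [u + cfg * (c - u) for c, u in zip(cond, uncond)]


if __name__ == "__main__":
    cond = [0.5, -1.0]            # stand-in for the positive-prompt prediction
    negative_a = [0.0, 0.0]       # stand-in for one negative-prompt prediction
    negative_b = [2.0, 3.0]       # stand-in for a very different negative prompt

    # At cfg = 1 both results equal cond, so the negative prompt is irrelevant.
    print(cfg_combine(cond, negative_a, 1.0))
    print(cfg_combine(cond, negative_b, 1.0))

    # At cfg > 1 the negative prompt changes the result.
    print(cfg_combine(cond, negative_a, 2.0))
    print(cfg_combine(cond, negative_b, 2.0))
```

With `cfg > 1` the negative prediction has to be computed as well, which is consistent with the roughly doubled generation time mentioned above.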

UPDATE: Lightning Edition – T2V

I’m not really experienced with T2V myself, but a colleague who works with it a lot tested this version and confirmed that it performs very well. From the few tests I managed to do on my side, I got the same impression, although I haven’t compared it directly with the base version yet.

The settings are the same as in the image-to-video version: 2+2 steps or more if you prefer, CFG at 1, and Euler simple (though other samplers also work great).

I don’t plan to make an NSFW version for the T2V release, but for the I2V version, I’m already quite happy with the first results.

Update WAN 2.2 V1.1 I2V

Updated version of the original Lightning merge, with the same settings (2+2 steps or more), featuring more movement and smoother flow (depending on the prompt).

The model already works very well in NSFW. Just use the right LoRAs, and the movement will improve.

WAN 2.2 V1 I2V

This checkpoint is based on the original WAN 2.2, with the Lightning WAN 2.2 and Lightning WAN 2.1 LoRAs already integrated. This improves image quality, makes motion smoother and more dynamic, and removes the slow-motion effect that can occur with the Lightning models.

A common setup is to use 2 steps on the high model and 2 steps on the low model, though other settings may work as well. Do not apply the Lightning LoRAs manually — they’re already included in this checkpoint.

My workflow

https://civarchive.com/models/2079192/wan-22-i2v-native-enhanced-lightning-edition

Comments (118)

what strengths u guys using on nsfw loras on nsfw v2 or fast move v2 models? i know they have lighting loras but any of u use any slight lighting loras? Sometimes my loras degrade image quality. or i struggle with penis mouth interaction. which they move like cutting eachother.

Hey guys, I'm finding the SVI checkpoint from here in high, with easywan in low with loras turned down to almost nothing in both high and low is getting me insanely good generations with high prompt adherence and minimal concept bleed. I've been messing with this for months at this point and I've never gotten outputs like this before. Just putting this out there in case anyone else wants to try it out.

can you elaborate more and share a workflow?

@chrmaroudas625 Just use whatever workflow you normally do or are comfortable with. ITs quite simple. Where you would normally run the matching pair of checkpoint in the high and low; you instead run the high checkpoint from here, enhanced nsfw high, in my case the SVI version. And in the low checkpoint slot you run the old easywan low checkpoint. You can mix most checkpoints like this without many/any problems. The nice thing about this is that easywan doesn't have concept bleedthrough like all of these merged models. So you get minimal bleedthrough in your generations if you have it in the low slot. But still benefit from the concepts during the high noise run with one of these merges in high.

@chrmaroudas625 agreed share wf please, im normally comfortable with nothing.... because i don't make my own wf..... i literally dont understand most of those nodes lol /derp

so whats a good workflow for this WAN 2.2 Enhanced NSFW | SVI | camera prompt adherence (Lightning Edition) I2V and T2V fp8 GGUF? I never made videos yet, only images 3 years ago.

@bbrummy Hello! Start with the simplest option: take the NSFW V2 version and use the simplest WF (Workflow) possible; it will work perfectly. Here is the link: https://blog.comfy.org/p/wan22-day-0-support-in-comfyui. It's not very complicated: you need to load the High and Low models into the 'Load Diffusion Model' node. Do not use Lightning LoRAs, as they are already included in the model.

@taek75799 Appreciate it, thank you. I will check it out asap.

@taek75799 Hey sorry to bother you again.... But I have no idea again.... All these workflows i've managed to get are missing some custom node I can't find, or it comes out blurry and messed up. Or the workflow is so autistic i cant make sense of any of it or its in chinese. Any chance you can point me to a place i can find a workflow that is basic? Or maybe where I can learn to make an image to video workflow myself? I tried to replace the checkpoint with your "WAN 2.2 Enhanced NSFW | SVI | camera prompt adherence (Lightning Edition) I2V and T2V fp8 GGUF" but it breaks or doesn't come out well. No idea.... back to 0 lol..... X_X

@bbrummy Try this WF, it couldn't be simpler: https://blog.comfy.org/p/wan22-day-0-support-in-comfyui

@taek75799 Thanks again, I'll give it another shot. Also these are the errors I usually get using someones random workflows, I have everything but some random custom node. The Node ID is usually some random 3 digit number.

Prompt execution failed

Cannot execute because a node is missing the class_type property.: Node ID '#176'

Also I have used this workflow but it doesn't seem to be able to do NSFW. I replaced the Load Diffusion Model to Unet Loader (GGUF) to again try to use your WAN 2.2 Enhanced NSFW | SVI | camera prompt adherence (Lightning Edition) I2V and T2V fp8 GGUF. But it comes out terribly X_X just blurry oversaturated mess.

@oldthrashbar can you give me more details on easywan you mentioned. or just the exact term to search in civitai search bar. i get an workflow when i use easywan in search bar and it has the AIO model. just any other clues to find it

@gayanholmes787 when you search i2v checkpoints it's one of the older ones, a gguf model. Has some anime girl making a heart sign.

@oldthrashbar Thanks. i found it. here is the link for anyone else. EasyWan22 FastMix - LOW-Q4_K_M | Wan Video Checkpoint | Civitai

Gosh man, you releasing them faster then people can test them xD

what i need to get svi running ? the checkpoint from here and svi lora ?

Hello! You need to have the checkpoint here along with the SVI LoRAs and the Lightning LoRAs, as well as an SVI WF (Workflow), otherwise it won't work. Check the description: you'll find links to download the SVI LoRAs, some examples of Lightning combos, and also several WFs.

@taek75799 i already downloaded all, but any lighting lora will work ?

@Seii1 Yes, any of them, but depending on which ones you use, you'll get different results.

@taek75799 thx its work now

@taek75799 another question, i read the text on the workflow comfy ui to make more than 5 sec, i generate the first video 5 sec and then move on to the second seed, but it generate the first seed again even tho the seed remain the same, or i need to make the second seed the same as the first seed ?

@Seii1 You don't need to have the same seed for the second 5-second video. In principle, if the seed is the same as the first video already generated, it won't re-generate it, although I have also encountered this issue where it generates the video all over again.

@taek75799 is it skip the first generation os it just regenrate the same video cause the same seed? because i just regenerate the first video again when i active the second seed thts why i took longer,

@taek75799 or i just deactive the first and only generate the second seed is that work ? i dont want to regenerate the first video again cause it take resources like ram and vram and make the whole generation longer

@Seii1 You shouldn't disable the first section: leave it active and do the same for each section, as explained in the tutorial. Change the seed if you want a different video, and keep the same seed once you are happy with the result.

@taek75799 oh i thought i just skip generating the first video, so basically if im happy with the result keep the seed and the move to the second video, but everytime i generate it will regerate again but the same video right?

@Seii1 yes

@taek75799 how to get .json for this?

WAN 2.2 Enhanced NSFW | SVI | camera prompt adherence (Lightning Edition) I2V and T2V fp8 GGUF

I dont understand how to get a .json image from a video, it just tells me unable to find workflow from the screenshot i take of the video.... even Grok can't help keeps telling me to find some attachments at the bottom of this page.... i'm lost and 0 clue now again....

The workflow uses Wan_2_2_I2V_A14B_HIGH_test-derniere-version-selforcing.safetensors and wan2.2_i2v_A14b_low_selforcing_last-version_rank64_lightx2v_4step_1022.safetensors but I can't find those. Any ideas?

Hello! The names have just been renamed. Check the description; it corresponds to Combo 2.

Ah, OK. Thank you!

Hello, I am new in AI world,

I been been playing around different workflow, But I can not get yours to work for the wan2.2_SVI_PRO-civitai.json.

once i run it, it said, [GetNode] ✗ Variable 'upscal' not found!

I disable it and try to make it run with another node for saving video.

then it said, [GetNode] ✗ Variable 'model low base' not found!

also for "wan2.2_LIGHTNING-EDITION_long-video_FP8GGUF.json"

which repo is for painteri2v? I download both version, and there is no "painteri2v advance" nodes

Hello! Make sure the 'Get' and 'Set' nodes are active. To do this, click on them and press Ctrl+B. Try placing all of them next to the groups; I think that works better, and keep them enabled. Here is the repo: https://github.com/princepainter/ComfyUI-PainterI2Vadvanced

@taek75799 I have solved the painter advance. then this wf is having the same problem.

It seem i don't understand how this set and get node work.

I didn't change any setting in the original workflow and it just doesn't work

I can't get the imported workflow to produce seamless transition like in the example videos, even using the same prompts and a similar initial image. The transition are all fades/blends. Any help appreciated.

Hello! Which WF are you using? The videos were made using either the SVI WF or the Triple KSampler one, but most of them were done with the Triple KSampler.

Triple KSampler

@psychopie194 Look here in the comments, you can see how the video was created: https://civitai.com/images/118594798

where does this go inside? diffusion_models, gguf, unet? nothing works.. . ohhhh its in unet loader gguf.... now just still need some wf, if anyone can help. grok is telling me to go down to attachments to find the workflow? fuck...... Where do i find a .json for this?

ffs please someone post a .json file i can click on and download....

how do you get a .json from a video....? holy fuck im retarded even Ai cant help...

@bbrummy some people don't save the meta data so you can't get a json from it. you need to just keep trying videos until you find one with a json.

@bbrummy There are metadata on almost all of my videos. The ones made with SVI have them, but since I’m using a slightly older version of ComfyUI, it seems some people are unable to open them. Therefore, I’ve also included the JSON. Check the first preview of the SVI Q4KM model: in the comments, you’ll find a link to the JSON file.

@jgillservices377 @taek75799 Thank you guys. Slowly getting somewhere.... not sure though because now this is happening. Fast Groups Bypasser (rgthree) can't load this. Idk what to do anymore been at it for hours this is so mentally draining. Apparently everything is a buggy mess according to grok. So idk should i just delete comfy and just restart, maybe i've downloaded too many conflicting nodes.... out of ideas.

@bbrummy Fast Group Bypass is just a node that replaces the Ctrl+B shortcut on nodes. You can do it manually.

@taek75799 mhmmm.... mmmm.... so like how does one do this manually?

@bbrummy Right-click with your mouse on any node and use Ctrl+B to enable or disable it.

@taek75799 i have this rgthree node but it says i don't have it when i boot up comfy. is it just super bugged? should i just reinstall everything?

@bbrummy Try to install it manually using git clone.

@taek75799 yea i have done this a bunch now... still nothing. V_V
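When a node pack has been cloned several times and ComfyUI still reports it as missing, it can help to check the folder layout before reinstalling everything. The sketch below is only a rough diagnostic under stated assumptions: that custom node packs live in `custom_nodes/<pack>/` under the ComfyUI root, that a pack folder needs an `__init__.py` to be picked up, and that the rgthree pack folder is named `rgthree-comfy`. The function name `check_custom_node` is hypothetical.

```python
from pathlib import Path


def check_custom_node(comfy_root, pack_name="rgthree-comfy"):
    """Return a list of likely layout problems for one custom node pack."""
    problems = []
    pack_dir = Path(comfy_root) / "custom_nodes" / pack_name
    if not pack_dir.is_dir():
        problems.append(f"missing folder: {pack_dir}")
        return problems
    if not (pack_dir / "__init__.py").is_file():
        # Often the sign of an empty or interrupted clone.
        problems.append(f"no __init__.py in {pack_dir}")
    if (pack_dir / pack_name).is_dir():
        # A repo cloned inside itself, e.g. after running git clone twice.
        problems.append(f"nested copy found: {pack_dir / pack_name}")
    return problems
```

An empty list only means the layout looks plausible. Missing Python dependencies or an outdated ComfyUI can still prevent the pack from loading, so the startup console output is the next place to look.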

Hello, thanks for the amazing model and WF. But I have one big problem: the woman's face in the I2V model always changes significantly over time, even when I keep the face visible through the last frame of every segment. I'm using your latest Lightning workflow and Fast Move V2 Q8, with your recommended settings. Is there anything I can do about it? Thank you!

Hello! The longer the video, the more the face and other details will degrade. If you want less degradation and more consistency for long videos, download the SVI WF along with the SVI CF model: they are designed for exactly that. Read the description, you will find some useful information there.

@taek75799 Thank you very much, Sir!

Wait, so what's the difference between the NSFW version and the nolightning version? lol, I mean, they both result in NSFW videos.

Hello! Lol, yes, they might seem similar. The difference is that one doesn't have the Lightning LoRAs included, among other things. Actually, the NSFW V2 models aren't ideal for use with SVI. So, I created specific models to work well with SVI; it allows for generating long videos with better consistency and less degradation.

@taek75799 Oh boy lol, I was thinking all of these included SVI, but SVI is just a LoRA?

@evantopsmith Yes, it's just a LoRA.

Maybe I'm doing something wrong, but your FP8 models with built-in LoRAs, in my opinion, work better: the movements are not slow, the prompt is understood better, and the video quality is higher.

Hello, and thank you for your feedback! To be honest, with the Triple KSampler WF, you can achieve better results in terms of movement using the SVI version. It also understands if you specify in the prompt that you want something fast, even if the dynamism is less intense. I agree: the SVI versions are an alternative; they will be better for creating long videos.

Firstly, thank you for your amazing models. Secondly, thanks to your incredible productivity, I'm completely confused by the models. (( Also, Civitai's idiotic interface and their habit of renaming files don't help.

I don't have a fast internet connection and downloading everything for experiments is a bit difficult. Could you provide links to the best/latest models for the following cases (all nsfw):

1. fp8 I2V

2. fp8 T2V

3. fp8 I2V SVI

4. fp8 T2V SVI

Thanks

Hi! Everything okay?

I'm using your WAN Enhanced NSFW Lightning Edition (GGUF/fp8). My setup is:

- GPU: RX 7600 8 GB VRAM

- OS: Windows

- Use case: Simple NSFW I2V loops (repetitive thrusting close-up, focus on rigid penis, firm ass without excessive jiggle or gelatinous sinking, high sharpness from frame 1)

- Resolution: 512x512 or 480p (testing 720p)

- Length: 49–81 frames

- Motion amplitude: 1.0–1.2

- Color protect strength: 0.3–0.35

- LoRAs: SVI High/Low + Lightning low noise combo (weight 0.8–1.0)

I'm having some blur/degradation from frame 1 (original image loses sharpness right away) and slight morphing in penis/ass in some cases.

Which version do you recommend as the best for my setup (high sharpness + dry/consistent thrusting in short/long loops)?

- fp8 safetensors

- Q8 GGUF

- Q4_K_M GGUF

- Other?

And which LoRA combo (SVI + Lightning) to use (e.g. Combo 2 low degradation for sharpness)? Suggested weights? Any extra tweaks on motion amplitude, color protect, or prompt to avoid initial blur/degradation?

Thanks a lot for the awesome model!

Hello! The workflow you are using is not suitable for the different combos. It is meant for the NSFW V2 models, and those already have Lightning built in. The combos are for the SVI models and work with the SVI WFs, which are better suited for creating long videos.

RAM?

Hi guys! I replaced the Lightning Low 1022 LoRA with the 1017 version. I was having some issues with brightness shifts (sudden increases) during certain transitions with the 1022. I've updated the WFs and the descriptions.

A week later: are you still favoring the 3 KSampler workflow, and did you end up changing your step counts per sampler since then? And are the replaced LoRAs for that WF?

@juliusmartin Hello! I only use the Triple KSampler. I'm not sure if the simple WF with two KSamplers has the same issue, but if it does, you should also replace the 1022 LoRAs with the 1017 ones. However, when I tested SVI, I never encountered this problem with it.

Yes, I prefer the Triple KSampler; it doesn't have that slowdown issue.

Which would be the perfect model for an RTX 4060 with 8GB VRAM, 32GB RAM, and a Ryzen 5 7500F? I have been using the I2V Q5_K_M, which takes 5 to 10 minutes for a 6-second video. This is at 480p, then upscaled.

I was thinking of the FAST MOVE V2 Q8, but then again I just started and I'm not really sure

Hello! Try either Q6_K or Q8.

I'm using Q8 on my 3060Ti 8gb. 32gb ram + 48gb pagefile. It's pretty good for 5 sec 480p. With optimized workflow and sageattention

i have the same graphics card with a ryzen 9. use FastmoveV2 Q4KMH and KML. takes me 2 minutes for a 3 second clip which is better than anything ive generated with smoothmix or wan 2.2

which models and loras would you suggest with a 5090 and 64gb of ram?

Hello! Go with Q8. As for the LoRAs, it's really a matter of personal preference.

I am going crazy with this checkpoint!!! I tried different models (V2 FP8, V2 Q8) with the settings described here and the same workflow, and tried a seed + prompt that worked well with other NSFW WAN models... and I only get pure noise. Really weird. I am sure I am omitting something critical but cannot find it.

Hello! Send me your WF after generating the video so I can check it.

Tried again, in this case with one of your uploaded WFs, and it worked flawlessly with the Q8 GGUF model. I think the problem was in the LoRA chaining and the KSampler versions used... my simple workflows of HI-LO (+/- LoRA), resized image, and chained KSamplers simply don't work.

I also get just noise/gray when using the new SVI models with my regular SVI workflow. I do however NOT use the IAMCCS_WanImageMotion node. I was hoping it would work with either kijai's WanVideo wrapper nodes, or the native nodes and not rely on that particular node?

Hmpf... I think it may be a difference between SVI and SVI PRO? The KJ nodes expect an SVI PRO LoRA... not sure what the IAMCCS_WanImageMotion node expects?

@madein_72 I used to get brown-noise videos from the SVI workflow because I was using the SVI PRO LoRA released by the official SVI team. You need to use the SVI PRO LoRA uploaded by kj to get correct results.

@nng2025 The problem is that the "nolightning SVI cf" model here has SVI non-pro built in... when you add both together, it gets doubled up, and the quality becomes really bad.

Hi everyone, does anyone have a workaround to increase the motion range in SVI?

When I use the SVI + Combo 3 setup, the facial consistency is top-notch, but the movement is very restricted. Sometimes, I just get pure noise when trying to move the character between different spaces, or the motion looks glitchy/uncanny.

On the other hand, if I switch to the V2 model with the 3-subgraph workflow, the character's face deviates too much from the original.

Hello! Have you tried the Triple KSampler WF?

Try the painterI2V custom node on GitHub.

I use a laptop and I'm listing its specifications below. Which model should I use? Also, I'm looking for a ready-made workflow for my laptop. I would appreciate your help.

Windows 11 Home Single Language (1 Month Xbox Game Pass for PC)

Intel® Wi-Fi 6E AX211 2x2 AX + Bluetooth 5.3 M.2 2230

Intel® Raptor Lake Core™ i7-13700HX 16C/24T; 30MB L3; E-CORE Max 3.70GHZ P-CORE Max 5.00 GHZ; 55W

32GB (2x16GB) DDR5 1.1V 4800MHz SODIMM

Monster Backpack

16'' FHD+ 1920X1200 165Hz IPS Matte LED Screen

NVIDIA® GeForce® RTX 5070 Max-Performance 8GB GDDR7 128-Bit DX12 (100 Watt + 15 Watt DB.2.0)

1TB M.2 NVMe GEN4 SSD (Read: 5000MB/s – Write: 3600 MB/s)

Hello! Maybe the Q6_K, or otherwise the Q4_K_M.

"Monster Backpack" W

Did you update all of your models to force SVI? Weren't there some models that didn't use SVI? I can't find them. All of them have SVI in the name now.

Don't mind the filename; I think Civitai renames them according to the title.

@taek75799 Oh ok. Which one is the latest/highest-quality non-SVI model? I've been using V2FP8 in my 20-second workflow that has 2k downloads at the moment.

@EchoSingularity The NSFW v2 in Q8 will be better.

@EchoSingularity It really depends on your definition of quality. For video quality, pick the one without the Lightning LoRA merged (SVI). For NSFW quality, use the NSFW or NSFW Fast Move ones.

@taek75799 I will try it thank you. I thought because the SVI was in the name that it was only for SVI workflows. I am updating my workflow for consistent quality and wanted to have your latest model in there.

@taek75799 I am still getting best fidelity results on the enhancednsfwcameraprompt_v2fp8.safetensors model vs the v2q8 gguf. Everything with SVI in the name seems to lose fidelity and detail quickly.

@taek75799 Is it because of the Lightning LoRAs, maybe?

@EchoSingularity It's possible regarding the Lightning LoRAs. What you can do is check the videos longer than 10 seconds made with the SVI models; the metadata is included. Alternatively, check the JSON files saved after generating the videos to see if your settings are correct.

@taek75799 I'm using a non-SVI workflow I made around your original .safetensors models. It uses the last frame, runs 4 phases, and stitches them together for 20-second videos on 12GB cards at 536 × 808. I posted it and it's at around 2.4k downloads. I may try SVI in the future, but for now I was using your non-SVI models, and all the new models I try lose quality quickly. Maybe because they're GGUF? I'm not sure why. It's not the Lightning LoRA, but the original enhancednsfwcameraprompt_v2fp8.safetensors model gets better results than any of the newer model versions with this workflow I made: https://civitai.com/models/2207167/wan22-i2v-12gb-20-seconds-mmaudio-60fps-low-vram

@EchoSingularity I checked the workflow, and it uses the last frame of one clip as the first frame of the next. This method tends to cause generational loss or degradation more easily compared to SVI 2.0 Pro.

@EchoSingularity Thank you for the link. I'll try out your WF; it looks interesting. As for why fp8 might work better than GGUF, I'm not sure. In theory, Q8 should be better, but the most important thing is that fp8 works well.

@g1263495582 Thank you for trying it out. Yes, there is degradation because of how the workflow uses the last frame, but I am still seeing better results with the enhancednsfwcameraprompt_v2fp8.safetensors checkpoint than with the new GGUF, which is interesting.

@g1263495582 If you have a chance, try my workflow with different models and you'll see that the degradation is not like that of other last-frame workflows.

Anime styles really don't seem to get along with Lightning LoRA. I was also wondering, are you planning to make an MXFP8 version instead of the standard FP8?

For anime styles that have issues with inconsistent eyes, or mouths shifting from simple lines to realistic lips, you can resolve this by setting your samples to 3. Alternatively, if speed is not a priority, try removing the Lightning LoRA from the high pass while keeping it in the low pass; in this configuration, set your high steps to 10 (experimenting down to 5 is possible) and your low steps between 2 and 4.

As for the mouth issue, avoid using the word 'lips' in your prompt; use 'mouth' instead.
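As a rough illustration of the high/low step split suggested above, here is a small Python sketch. It assumes a typical two-stage setup built from two "KSampler (Advanced)" nodes, where both samplers share one total step count and hand off at a switch step. The helper name `split_steps` is hypothetical, and the actual wiring in any given workflow may differ.

```python
def split_steps(high_steps: int, low_steps: int) -> tuple[dict, dict]:
    """Build step settings for a high-noise pass followed by a low-noise pass."""
    if high_steps < 1 or low_steps < 1:
        raise ValueError("both passes need at least one step")
    total = high_steps + low_steps
    high = {
        "add_noise": "enable",  # the first pass starts from fresh noise
        "steps": total,
        "start_at_step": 0,
        "end_at_step": high_steps,
        "return_with_leftover_noise": "enable",  # hand the noisy latent onward
    }
    low = {
        "add_noise": "disable",  # continue from the latent of the high pass
        "steps": total,
        "start_at_step": high_steps,
        "end_at_step": total,
        "return_with_leftover_noise": "disable",
    }
    return high, low
```

With 10 high steps and 4 low steps, the high pass covers steps 0 to 10 and the low pass covers steps 10 to 14 of a 14-step schedule.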

Hello, and thank you for your feedback and your other advice. I will check out the mxfp8 version, I wasn't familiar with that format.

@taek75799 If you're interested in making MXFP8 models, check out silveroxides/convert_to_quant. I also recommend using the --make-hybrid-mxfp8 flag as a workaround for hardware that doesn't support MXFP8.

@g1263495582 Thanks, very interesting. It can also be done in int8.

@taek75799 I've noticed something with Wan 2.2 and I'm wondering if you've seen it too. It seems like when I use phrases like 'the camera' or 'as camera' for control, the model doesn't follow instructions very well. It works much better when I just use 'camera' directly. Have you noticed this, or is it just me?

@g1263495582 I didn't know that, and yet I use different camera angles quite a bit. Thanks for that, I'll give it a try as soon as I can

Honestly, this is a mix of bad UI in civitai and bad descriptions made by the OP. This is impossible to understand, there is too much, too mixed, and I simply don't know what I end up downloading. Badly named models lead to this too; I downloaded 18gb of probably wrong data because the models are named in a vague way you cannot tell what you selected. I downloaded NSFW Q4 models and I now see them named as wan22EnhancedNSFWSVICamera_nsfwV2Q4KMHIGH and low. I did not want SVI, I wanted the lightning included version, that is, if I understood this wall of words.

From what I've read, you want the models separated because they're currently lumped together and look messy, right? So, one group would be just the SVI family, another would be Lightning only, and then the others, is that correct? Also, the names are too long, right?

Hello! You have to rename them when you download them. Civitai renames the files, and I have no control over that.

As for the rest, I think the description is clear enough. What I mean is that a minimum level of knowledge is required for Wan. I can't make tutorials on how to use Wan, what the Lightnings are for, etc. This is not a tutorial page.

It's super confusing but if you want Lightning version then there are NSFW Fast Move V2 and NSFW V2. From the wall of text in the model description you probably want to start with NSFW Fast Move V2.

@taek75799 I assure you, the description is completely unclear. A million models in one card, the list of which could take half an hour to scroll through. Then another couple of hours to figure out how these models differ from each other. Why couldn't they be split into several cards on the website?

@rmbl3 Hello! There are 5 models in total. As for the others, they are just the same ones in GGUF format, so there are 5 descriptions. I guarantee you that my description is more detailed than any other Wan model on this site. I invite you to find a better description for a Wan model on this site and post the link here. And please, don't say '30 min' when I can do it in 20 seconds.

@taek75799 Yeah, I'm going to have to agree that the title cards are a bit misleading. You might want to make clear that these are all SVI, so the Lightning LoRA will always be optional. But once I read the description and looked at a couple of workflows, I understood the big picture and what the title cards meant. Oh, and if you're using this you should probably know the basics, like the difference between fp8, Q8_0, and Q6_K: fp is full precision (higher VRAM), the Q models are all GGUF (lower VRAM), and high and low models are bare-minimum Wan knowledge. Might want to read a couple of basic Wan 2.2 tutorials first. lol, obviously this isn't all directed at you, taek.

Edit: actually, I did end up with one that wasn't SVI, so my mistake lol: wan22EnhancedNSFWCameraPrompt_nsfwV2Q8Low and High
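To put rough numbers on the fp8 / Q8_0 / Q6_K difference mentioned above, the following Python sketch estimates weight size from bits per weight. The bits-per-weight values for Q8_0 and Q6_K come from the GGUF block layouts as I understand them (34 bytes per 32 weights, and 210 bytes per 256 weights). The 14-billion parameter count in the usage note is an assumption for illustration, not a figure from this page, and real files also contain some tensors kept at higher precision, so actual sizes will differ.

```python
# Bits stored per weight for each format (block layouts are an assumption,
# see the note above).
BITS_PER_WEIGHT = {
    "fp16": 16.0,
    "fp8": 8.0,
    "Q8_0": 34 * 8 / 32,    # 32 int8 weights plus one fp16 scale per block
    "Q6_K": 210 * 8 / 256,  # 256 weights packed into a 210-byte super-block
}


def estimate_gib(param_count: float, fmt: str) -> float:
    """Estimate the size of the weights alone, in GiB."""
    return param_count * BITS_PER_WEIGHT[fmt] / 8 / 2**30
```

For example, `estimate_gib(14e9, "Q6_K")` can be compared against `estimate_gib(14e9, "Q8_0")` to see how much smaller the lower quant is. Keep in mind that the high and low models are separate files, and that the workflow needs memory beyond the weights themselves.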

skill issue lol

@drwang55805 Thank you, this should be at the top of the description.

In all the videos, the girl always moves her body in time with the guy, impaling herself on the shaft. Does anyone know a secret to fixing this?

sounds like one of those good kind of problems :P