⚠️ Important information

All models already include the Lightning LoRAs, except the SVI models, where Lightning is not included.

Do not use additional Lightning LoRAs on models that already have Lightning integrated, or the quality will be degraded.

For SVI models, you can use Lightning LoRAs if you want faster video generation.

2or3 ksampler (for svi) https://civarchive.com/models/2079192?modelVersionId=2668801

v2.1 (for nsfw v2) https://civarchive.com/models/2079192?modelVersionId=2562360

v2.1 with MMAUDIO (for nsfw v2) by @huchukato https://civarchive.com/models/2320999?modelVersionId=2613591

Another wf triple KSampler KSampler https://civarchive.com/models/1866565/wan22-continuous-generation-svi2-pro-or-gguf-or-32-phase-or-upscaleinterpolate-w-subgraphs-and-bus?modelVersionId=2559451

triple KSampler wf setup allows for more motion and helps prevent slow-motion issues. In exchange, your videos will take longer to generate.

For those having issues with my SVI workflow , you can try Kijai's wf here: https://github.com/user-attachments/files/24364598/Wan.-.2.2.SVI.Pro.-.Loop.native.json. Alternatively, you can try fmlf wf https://github.com/wallen0322/ComfyUI-Wan22FMLF/tree/main/example_workflows they will be simpler. There are others on Civitai that work very well too.

Qwen-VL workflow as an alternative to Grok for creating your dynamic NSFW prompts. Thanks @huchukato for his work: https://civarchive.com/models/2320999?modelVersionId=2611094

🟣 SVI Update – NSFW

⚡ Model Presentation (SVI-compatible version)

This update was made because the NSFW V2 models were not fully compatible with SVI LoRAs.

This version was created to work smoothly with SVI, while still functioning without them (though the workflow must be adapted).

Be careful, SVI LoRAs will only work with a workflow specifically designed for them, otherwise, it won't work.

There are two models available for SVI:

✔ Fast Move (FM) – Sexual scenes may differ from the Consistent Face model and will generally be faster.

✔ Consistent Face (CF) – Slightly better image quality, which may be preferable for anime-style videos; sexual scenes differ from Fast Move, but the difference is minimal.

You can also mix models between High and Low LoRAs:

FM (Fast Move) as High + CF (Consistent Face) as Low

CF (Consistent Face) as High + FM (Fast Move) as Low

Both combinations work and give slightly different results, offering more flexibility for your videos.

For this version, the main improvements include:

✔ Fully adapted for SVI LoRAs

✔ Greater flexibility: Lightning and SVI LoRAs must be loaded manually for custom workflows

🟣 SVI LoRAs – Strengths & Weaknesses

⚡ Overview

✅ Strengths

Best solution for making long videos

Excellent transitions between video segments

Reduced degradation compared to other solutions

Strong character coherence: the model retains information from the previous video, helping maintain consistency

⚠️ Weaknesses

Weaker prompt understanding

Weaker camera understanding

Videos are less dynamic

Sometimes slow-motion effect

(can be improved with proper Lightning LoRAs, dynamic prompts or triple ksampler)

🟣 SVI LoRAs – Download Links

⚡ Download

Note: Both LoRAs must be loaded manually in your workflow.

🟣 Suggested Lightning LoRA Combos (Optional)

⚡ Overview

You don’t have to use these Lightning LoRA combos. They are optional and allow you to fine-tune motion and degradation.

You can also use other Lightning LoRAs or assign different combos per video for more control.

🔥 Combo 1 – More Motion (Rapid Video Degradation)

High LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 4

or

💜 Combo 2 – Less Image Degradation

or

or

🟢 Combo 3 – Balanced Motion / Moderate Degradation

High LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 3

Low LoRA →

https://huggingface.co/Kijai/WanVideo_comfy/blob/709844db75d2e15582cf204e9a0b5e12b23a35dd/Lightx2v/lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors

Weight: 1.5

🧠 Advanced Usage Tip

You can disable global Lightning LoRAs (in my wf) and assign different combos per video:

Combo 1 for Video 1, Combo 3 for Video 2, Combo 2 for Video 3.

Each combo produces different motion and degradation behavior.

If you want to create several-minute-long videos while maintaining high quality, it is possible, but it will take a very long time. You just need to avoid using Lightning LoRAs and use the full model instead.

🧠 Dynamic Prompts – Better Control & More Motion

You can use dynamic prompts to have better control and this helps to make the video more dynamic.

You just need to give this example prompt to an LLM like ChatGPT. It will be enough for it to describe the image you have and what you want as a video while keeping the same structure of the prompt in the following example.

⚠️ This will be a NSFW prompt; ChatGPT will not accept it.

You can use GROK https://grok.com, which can make NSFW prompt modifications.

For more examples of prompts with different poses, check:

Enhanced FP8 Model.

Give these prompts to GLM with the dynamic prompt structure if you want.

Example 1

(At 0 seconds: Wide shot showing a slightly overweight man casually walking down a city street, camera fixed in front, urban environment with buildings and cars.)

(At 1 second: Suddenly, a massive shark bursts from the pavement ahead, looking terrifying at first, pavement cracking, dust and debris flying, camera from side angle.)

(At 2 seconds: Medium shot from the side, the man stumbles backward in shock, while the shark dramatically slows down and strikes a comically exaggerated sexy pose, revealing large, exaggerated shark breasts, covered by a colorful bikini.)

(At 3 seconds: Close-up on the man’s face, eyes wide in disbelief, as he turns to look at the shark, small cartoon-style hearts floating above his head to emphasize his amazement, camera slightly low-angle.)

(At 4 seconds: Dynamic travelling shot showing the man frozen in the street, the shark maintaining its sexy pose, water splashes and debris still moving realistically, urban chaos around.)

(At 5 seconds: Wide cinematic shot pulling back, showing the man standing in the street, staring at the bikini-wearing shark with hearts above his head, epic perspective highlighting absurdity and humor.)

Example 2 – Anime NSFW

(At 0 seconds: The couple in a cozy bedroom, anime style, soft lighting highlighting their intimate embrace, her back arched slightly as he positions himself.)

(At 1 second: The man’s hips moving rhythmically, the head of his penis sliding effortlessly into her vagina, her body responding with a gentle, fluid motion, anime-style motion lines emphasizing the smooth penetration.)

(At 2 seconds: Her back arching deeply against him to intensify the pleasure, hips swaying with each thrust, breasts bouncing subtly, small hearts floating around them to capture the erotic energy.)

(At 3 seconds: Her face, eyes closed in bliss, a soft moan escaping, hands resting behind her head, anime-style blush on her cheeks, the air filled with a seductive aura.)

(At 4 seconds: The man penetrating her deeply, her body moving in sync with his, the bed sheets slightly rumpled, the room’s warm lighting enhancing the intimate, lustful atmosphere.)

(At 5 seconds: The couple locked in a passionate embrace, the scene exuding vibrant, seductive energy, anime style with smooth lines and soft shadows.)

🟣 Lightning Edition – NSFW I2V V2

⚡ Model Presentation (2 new versions available)

I originally planned to release only one V2, but some people preferred the NSFW V1 over the Fast Move V1 version, so depending on what you’re looking for, one version may suit you better than the other.

For these V2 versions, I tried a new approach:

✔ I made sure that most sexual poses work, while the model is also good for SFW content

✔ More flexible for general use

🔥 NSFW Fast Move V2

Improvements included in this version:

Better prompt understanding

Better camera understanding

Reduced unnecessary back-and-forth movements outside sexual poses

(cannot be completely removed, but strongly reduced)Improved bounce effect on the buttocks

If a man appears, he will no longer automatically attempt to penetrate the woman when she is nude

This version is designed for those who want more dynamic scenes with more movement.

💜 NSFW V2

The difference between this version and NSFW Fast Move V2:

Less camera control

Less camera understanding

But body movements are less pronounced (breasts and buttocks)

Some preferred V1 NSFW to V1 NSFW Fast Move, and this version keeps that spirit

For varied sexual poses, check the previews — there are many.

You can use the shown prompts and adapt them to your images, but of course, other prompts will work as well.

Don’t hesitate to use other LoRAs for creating specific concepts.

🔧 Recommended Settings

Steps: 2+2

(Jellai recommends 2+3 for even better results, and I agree)Sampler: Euler simple

CFG: 1

This model already includes the Lightning LoRAs, don’t use them, or the quality will be degraded

You need to download both models: H for High and L for Low.

Here is an example made with the base workflow + the First/Last Frame workflow + upscaler (only on the starting images) + inpainting (cum).

https://civarchive.com/images/114733916

🔞 LoRA Examples (with links and recommended weights)

LoRA: Penis Insert WAN 2.2

LoRA weight: 1

Face → Doggy

https://civarchive.com/images/112864483Face → Missionary

https://civarchive.com/images/112864718

https://civarchive.com/images/112864835Face → Doggy (leg aside)

https://civarchive.com/images/112864885Another example

https://civarchive.com/images/112864972

Other examples with different LoRAs

Face → Reverse Cowgirl — LoRA weight: 0.7

https://civarchive.com/images/112865189Face → Cowgirl — LoRA weight: 0.5

Face → Missionary — LoRA weight: 0.3

https://civarchive.com/images/112865381Face → Missionary — LoRA weight: 0.5

Face → Doggy leg aside — LoRA weight: 0.6

https://civarchive.com/images/112865551Face → Doggy — LoRA weight: 0.7

https://civarchive.com/images/112865696Face → Spoon — LoRA weight: 0.7

https://civarchive.com/images/112868124

Recommended LoRA to obtain a very realistic vagina and anus:

https://civarchive.com/models/2217653?modelVersionId=2496754

💡 Tip for Anime Style:

If you’re working in an anime style, feel free to follow the advice of @g1263495582 thanks to him for this.

Try these LoRAs together or separately; they help maintain face consistency:

🔹 Note:

For these LoRAs, use only Low Noise.

For the examples mentioned above, use High and Low Noise as indicated.

LoRA weight: 0.3

Many things can be done with the model, but don’t hesitate to use other LoRAs for specific purposes. And don’t hesitate to lower the LoRA strength to preserve the face as much as possible.

📷 Dynamic prompt example with different camera angles:

(at 0 seconds: wide shot showing the woman standing in the snowy plain, a massive giant dragon emerging behind her, snow cracking and dust rising).

(at 1 second: the woman jumps backward onto the dragon’s back as it bursts fully from the sky, camera tracking the motion from a side angle, debris and snow flying).

(at 2 seconds: medium shot from the side, the woman balances heroically on the dragon’s back as it begins to run forward across the snowy plain, slow-motion on her posture).

(at 3 seconds: close-up on the woman’s determined face, camera slightly low-angle to emphasize her heroic stance, snow and debris flying around).

(at 4 seconds: dynamic travelling shot alongside the dragon, showing the snowy plain, scattered debris and ice fragments flying everywhere as it gains speed).

(at 5 seconds: wide cinematic shot pulling back, showing the dragon taking off with the woman riding on its back, soaring above the snowy plain, epic perspective with snow, wind, and scale emphasizing the drama).

For available camera angles, check further down in the “cam V2” model description.

🔞 Normal Clip vs NSFW Clip:

You can also use the NSFW version for your clip.

It can bring positive effects for sexual scenes, but it can also cause issues, as in this example:

NSFW Clip:

https://civarchive.com/images/112864204Normal Clip (same seed):

https://civarchive.com/images/112864295

Here are the links to NSFW clips (thanks to zoot_allure855 for correcting the BF16 version):

BF16 fixed version:

https://huggingface.co/zootkitty/nsfw_wan_umt5-xxl_bf16_fixed/tree/mainFP8 version:

https://huggingface.co/NSFW-API/NSFW-Wan-UMT5-XXL/tree/main?not-for-all-audiences=true

❓ If you have any questions, don’t hesitate to ask!

Many people ask me for help via private message. You can do that, no problem, but I would appreciate it if you could do it in the comment section, it could help other people. Thank you.

🟣 Lightning Edition – NSFW I2V camera prompt adherence

⚡ Model Presentation (2 versions available)

I decided to release 2 versions. Both can produce different results, as you can see in the previews (the seed was the same).

Fast Move Version: provides more movement, and the movements will be faster, with better prompt understanding and camera handling

(you can see it in the 4th preview "fast move high" where the man slaps the woman)Natural Motion Version: offers more natural breast movements depending on the situation and produces slower scenes.

👉 Check both and choose the one that works best for you.

This NSFW edition is, of course, focused on sexual poses.

You should achieve very good results.

To create specific concepts, feel free to use specific LoRAs — they work very well with this model.

🔧 Recommended Settings

Steps: 2+2

(Jellai recommends 2+3 for even better results, and I agree)Sampler: Euler simple

CFG: 1

This model already includes the Lightning LoRAs, don’t use them, or the quality will be degraded

You can also use this T5, which may improve understanding:

https://huggingface.co/NSFW-API/NSFW-Wan-UMT5-XXL/tree/main?not-for-all-audiences=true

📝 Usage Tips

Start with a clean, high-resolution image for better results.

The model will not change the face; if it does, increase the resolution.

Also adjust your prompt if you are not getting what you want.

Wan understands some terms well, but not all.

For prompts, check the video previews: adapt them to your image.

Other prompts will work too.

🎥 Training

I trained 2 LoRAs:

The first one using several videos and images of different sexual positions.

The second one to bring more dynamic motion.

Respect to everyone who creates this type of LoRA — it requires a lot of work.

🙏 Credits

This model wouldn’t exist without the incredible work of these creators:

alcaitiff : https://civarchive.com/models/1295758/nsfw-fluxorwan-22orqwen-mystic-xxx?modelVersionId=2300332

Sweet_Pixeline : https://civarchive.com/models/1844313/penis-play-wan-22

anonimoose : https://civarchive.com/models/2008663/slop-twerk-wan-22-i2v

dtwr434 https://civarchive.com/models/1331682?modelVersionId=2098405

A special thanks to Alcaitiff and CubeyAI, two very kind and humble people.

🔔 Important

Please don’t support my work with buzzes here, I don’t need it.

If you want to support someone, support the creators listed above — they truly deserve it.

💬 Feedback

Feel free to give me feedback, positive or negative, to help improve future updates.

Update WAN 2.2 V2 CAM I2V – NEW feature Camera & Prompt Improvements

This custom version of WAN 2.2 I2V has been updated to deliver better prompt comprehension and improved handling of camera angles and cinematic movements. It provides more accurate scene interpretation, smoother transitions, and enhanced control over dynamic.

Key Features:

Excellent understanding of prompts and scene composition.

Supports various camera angles and movements, including zoom, dolly, pan, tilt, orbit, tracking, and handheld shots.

Ideal for cinematic storytelling, animated sequences, and creative video-to-image projects.

Flexible multi-step prompts, standard 4 steps (2+2), can be increased for higher fidelity.

Recommended sampler: Euler simple.

You can, of course, use your usual prompts; this is just one example among many.

Example Prompt with Different Camera Angles

(at 0 seconds: wide frontal shot of a man standing in front of an open fridge, cinematic lighting, subtle ambient kitchen reflections, the fridge contents visible, camera static).

(at 1 second: medium shot from the front as he opens the fridge fully, reaches for a can, slight zoom-in to emphasize the action, cinematic framing).

(at 2 seconds: camera shifts to a side medium shot, tracking him as he lifts the can to his mouth, fluid movement, maintaining lighting and reflections).

(at 3 seconds: camera starts a smooth 360-degree orbit around the man, following him as he drinks from the can, motion fluid, background slightly blurred for cinematic effect).

(at 4 seconds: close-up on his face and upper body while drinking, orbit continues subtly, fridge reflections accentuating realism, cinematic polish).

(at 5 seconds: final wide shot as he lowers the can, camera completes orbit to original angle, showcasing the kitchen space, lighting, and dynamic movement).

Available Camera Movements

Zoom / Dolly

zoom in

zoom out

camera zooms in on subject

camera zooms out gradually

dolly in

dolly out

camera dollies in slowly

camera dollies out steadily

crash zoom

Pan

pan left

pan right

camera pans across the scene

gentle pan left

sweeping pan right

Tilt

tilt up

tilt down

camera tilts up to reveal…

camera tilts down from…

Orbital / Tracking / Arc / Rotation

orbit around subject

360° orbit

camera circles around

tracking shot

camera tracks alongside subject

arc shot

curved camera movement

Other Movements & Styles

static camera / static shot

handheld shot

camera roll

Note:

LoRAs work perfectly with this model, offering full compatibility and consistent results across styles and concepts.

# SUPPLEMENTARY ADVICE

You can use negative prompts, but be careful: this will double the generation time.

Only use them if you really want to prevent something from appearing in your video.

In that case, enable the corresponding node; otherwise, keep it disabled.

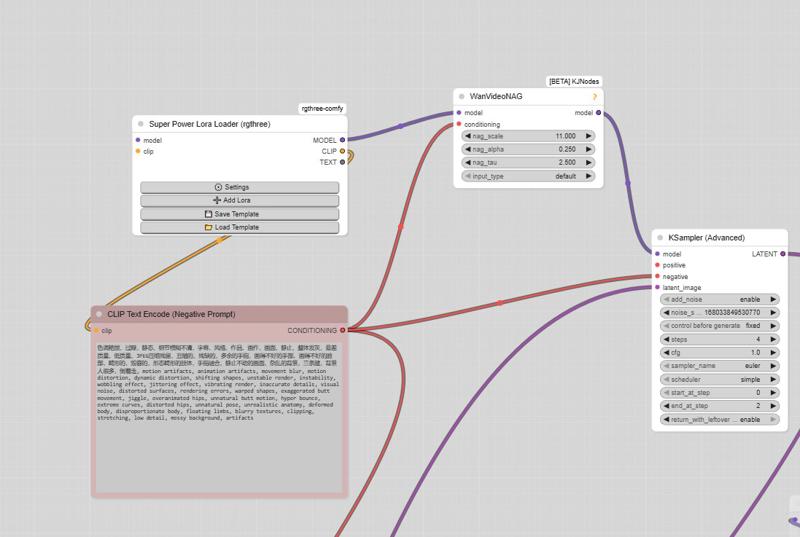

⚠️ Important

The model must be used with CFG set to 1, so negative prompts do not work by default.

However, there is a simple way to enable them.

How to enable negative prompts:

Open the Manager

Search for kjnode and install it

In your workflow, add the WAN Nag node

To use it correctly:

Connect this node after the LoRA Loader

Feed it with the negative prompt

Then connect it to the first kSampler (High)

👉 Only use this option when necessary, to avoid unnecessarily increasing generation time.

And here is the negative prompt for unwanted movements:motion artifacts, animation artifacts, movement blur, motion distortion, dynamic distortion, shifting shapes, unstable render, instability, wobbling effect, jittering effect, vibrating render, inaccurate details, visual noise, distorted surfaces, rendering errors, warped shapes, exaggerated butt movement, jiggle, overanimated hips, unnatural butt motion, hyper bounce, extreme curves, distorted hips, unnatural pose, unrealistic anatomy, deformed body, disproportionate body, floating limbs, blurry textures, clipping, stretching, low detail, messy background, artifacts, butt bounce, moving hips, swinging hips, shaking butt, wiggling butt, moving lower body, moving pelvis, jiggling buttocks, bouncing butt, unstable stance, unnatural hip motion, exaggerated hip movement, hip sway, hip rotation, bottom motion, pelvis motion, wobbling hips, fidgeting lower body, dancing hips, pelvic movement, motion blur, unnatural movement

And here is the negative prompt from the official ComfyUI workflow:色调艳丽,过曝,静态,细节模糊不清,字幕,风格,作品,画作,画面,静止,整体发灰,最差质量,低质量,JPEG压缩残留,丑陋的,残缺的,多余的手指,画得不好的手部,画得不好的脸部,畸形的,毁容的,形态畸形的肢体,手指融合,静止不动的画面,杂乱的背景,三条腿,背景人很多,倒着走

Note: If you use the default workflow without the node, this negative prompt will not work.

UPTDATE: Lightning Edition – T2V

I’m not really experienced with T2V myself, but a colleague who works with it a lot tested this version and confirmed that it performs very well. From the few tests I managed to do on my side, I got the same impression, although I haven’t compared it directly with the base version yet.

The settings are the same as in the image-to-video version: 2+2 steps or more if you prefer, CFG at 1, and Euler simple (though other samplers also work great).

I don’t plan to make an NSFW version for the T2V release,

but for the I2V version, I’m already quite happy with the first results.

Update WAN 2.2 V1.1 I2V

Updated version of the original Lightning merge — same settings 2+2 steps or more, featuring more movement and smoother flow (depending on the prompt).

The model already works very well in NSFW. Just use the right LoRAs, and the movement will improve.

WAN 2.2 V1 I2V

This checkpoint is based on the original WAN 2.2, with the Lightning WAN 2.2 and Lightning WAN 2.1 LoRAs already integrated. This improves image quality, makes motion smoother and more dynamic, and removes the slow-motion effect that can occur with the Lightning models.

A common setup is to use 2 steps on the high model and 2 steps on the low model, though other settings may work as well. Do not apply the Lightning LoRAs manually — they’re already included in this checkpoint.

My workflow

https://civarchive.com/models/2079192/wan-22-i2v-native-enhanced-lightning-edition

Description

FAQ

Comments (255)

how did you manage to make a Q4_K_M version? I tried to do it locally from your FP8 model, but its FP8 scaled so there was a Zero tensor pointer and this was causing it to crash, please let me know how you got around this - unless you probably have the fp16 model lol

Hello, indeed, I did it using the BF16 version (also works with FP16).

It’s impossible, at least for now, to do it from FP8.

Idk man those vagina's look pretty unrealistic and ugly, just being honest. Great concept but those vagina's are a turn off.

Hello, I also sometimes get vaginas that are not very realistic. It really depends on the starting image.

I’ve already had very good results. I included a LoRA in the description that can help with this.

I could have added a vagina LoRA directly into the model, but by doing so, the model tended to turn penises into vaginas.

It’s very difficult to achieve something coherent in all situations—you have to make choices.

So I aimed to create something as stable as possible, in any situation.

The model is therefore capable of many things, but it still needs some guidance.

For me, this lora is very good. https://civitai.com/models/2217653?modelVersionId=2496754

The first version produces better vaginas, but it will also cause more bugs in certain cases.

To be honest, perfection in any existing model will always come with its share of issues.

That’s why I recommend using certain LoRAs while slightly lowering the strength, to achieve very good results.

There are loras to make those better.

@taek75799 You are great at using this approach. Versatility is the best option. Because often, users need to have a woman's vagina and a man's penis in the frame. And if someone needs detailed vaginas, let the right LORAs be used.

You are doing a great job!

Hello all, the link of taek75799 ends up in 404 not found. Any chance i get the correct link please? I have to get rid of vaginas that look like Vekna's wife......

@Suzukara Hello, try this one: https://civitai.com/models/1888857?modelVersionId=2137972

@taek75799 The title of Femaled-Vaginus - Female Body Enhancer says that this is a T2V version. Can it be used for I2V workflows?

@realrusboriska “The title Femaled-Vaginus – Female Body Enhancer”

Are you talking about the LoRA? If so, give it a try. Most T2V LoRAs work very well in I2V.

I only do I2V and I use some T2V LoRAs. However, I haven’t tried this one myself, but I was told that it works.

@taek75799 Yes, I mean this LoRA. I also use I2V models, so I usually try to look for I2V LoRAs . Thanks for clarifying that T2V LoRAs can work as well.

The 1920 x 1080 resolution causes issues at the bottom of the screen.

This issue is likely caused by the fact that 1080 pixels is not a multiple of 64.

to solve this problem, make video 1920 x 1088.

Which one from all is the I2V one?

Hello, everyone except the one named t2v.

@taek75799 sorry i meant T2V my bad, all the last ones are I2V?

@AkaHimeP Yes, that’s right, there is only one T2V version.

Have you tried the trick of using the I2V model to make T2V?

You just need to put a white image in Load Image and enter the prompt.

Many people have confirmed that it works, but personally, I have never tested it.

tested the new V2 Q4km, not so sure, but i think, the quality is much worse than the last GGUF model, i mean the generated videos, has a lot of noise in it. the face changing still a big problem.

Hello, and thank you for your feedback.

I imagine that when you say “previous version,” you mean the equivalent Q4KM version?

Strange, I don’t have this issue.

To be honest, it seems complicated for me to identify the cause of the problem remotely.

If you want, you can send me the workflow with the parameters that generate the lower-quality video, and I’ll try to see if there’s a problem.

You can use this link, it’s very easy: https://www.swisstransfer.com/fr-fr

@taek75799 well, i was using Q8 mostly, but i ran a few tests with Q4 too and didn't see much difference in quality before, but the generated clips with the latest one, had a lot of noise in it, even thought i used a classic style filter or something, but i didn't, thx anyway. not sure how or why, but i see some heads turning 180 degrees, like an exorcist movie!! which is funny.

@zereshkealt Haha, I see the problem.

Actually, I’ve already had this issue with the previous version.

I just fixed it with a prompt like "the person turns around".

But if you’re interested, I don’t know if you’ve done a comparison using the same seed and the same prompt between the two versions to see if it produces the same effect.

I plan to release the Q8 version soon, hoping I’ve helped you.

@taek75799 not sure i'm following this bit: "the person turns around"? should i use it in the negative prompt? no, it wasn't the same seed, it was and I2V workflow which i had saved and i've been using it as a base workflow to test different models. the Q8 version, would be great. then i'll wait for that.

@zereshkealt I think my problem was the same as in the movie The Exorcist haha, but I had it on Fast Move V1, I believe.

I was able to fix it with, I think, "the person turns around" in the positive.

I’m sure I experienced it with FP8; it’s probably due to certain NSFW LoRAs.

Hello sir, i m a little overwhelmed. Not easy. So i have downloaded the v2 fast move in high and low noise. Do they include natively the lora penis insert or do i have to add it on the "adding loras" node ?Do we need loras at all in fact ? (Well you mentioned better anus and pussy lora). And do they include the v2 cam better movement cam compréhension models ? Is that v2 cam a lora i can add ? Or a model so i need to change the v2 fast move nsfw by the v2 cam ? Oh and if during the generation i don t like what phase 4 générate, i just need to put all the other seeds on the other phase at "fixed" and the phase 4 seed on "ramdomize" so when i click render it only generate the phase 4 5s vidéo ? Thanks a lot !!! Your workflow got enormous potential, it s amazing, really impressed !! Good job !!

Hello, I’ll try to help you as best I can.

For the LoRA, yes, I recommend adding one depending on what you want to do. This model, as you can see, is capable of doing a lot, but it’s still preferable to add one in certain contexts.

For example, if a man penetrates a woman, with my model, the penetration is too fast. Check the description: there are examples with an excellent LoRA for this.

I didn’t include it directly in the model because it caused many issues, such as when a man is with a woman, he would penetrate her even if it wasn’t indicated in the prompt.

For the LoRA node, here’s the link to the official Comfy blog: there you can find their simple workflow and see where to place the LoRA node. It’s very simple, but be careful: never use a Lightning LoRA with my model, they are already included.

Yes, CAMs are also included: they are almost as good as the CAM version, but not quite. I can’t explain here why, it’s a bit long and my message is already long.

You can keep the CAM model: it’s SFW and remains my personal choice, since I rarely do NSFW.

So I imagine your question is about my workflow; in that case, I answer: yes, that’s exactly it.

I hope to share another workflow soon that allows perfectly smooth transitions most of the time, but I still need to simplify the process because it seems complicated.

Thank you very much for your feedback; it could help other people.

the fp8 model run out of my VRAM,but it still works faster than other i2v models,what a strange model,hope you can make a q6 gguf model

Hello and thank you for your feedback 🙂

Yes, it depends on your PC.

For me as well, FP8 is the fastest version, but Q6KM offers better quality, and Q8 even more so.

The Q4KM version uses less VRAM.

It will be slower depending on your configuration, but it will work with less VRAM.

@taek75799 Okay, gotta ask. Which version do you recommend for a RTX 4070TI Super (16 gigs of VRAM)? Looking for quality.

@Russader I have an RTX 4060 Ti with 16 GB of VRAM and 64 GB of RAM.

I always use the Q8 version; it’s the one that offers the best quality.

I don’t know if some people want the NSFW versions (not Fast Move) in GGUF format.

I’ve already made the Q4KM version.

If anyone prefers it, feel free to let me know so I can share it.

That would be great to have and mess around with, yes please! It's tough for me to balance camera control and immense jiggle and business sometimes. But that could be my low system, or more likely just crap/lazy prompting. So it'd be nice to switch it up and experiment. You're awesome.

I'm really looking forward to your NSFW Fast v2 Q8 High version.

would appreciate the (not fast) nsfw v2 Q8 gguf!

@eastnod1990 @readlyfire1112 Thanks for your feedback, friends. I’ll make Q6K and Q8 versions of each model.

The jiggle physics are a bit too intense for my taste. I'd like to tone them down.

@g1263495582 Hello, are you using the fast move version?

If so, try using the other NSFW version instead—it will be less pronounced.

What is the difference between the Q8 version and the new FAST MOVE Q4KM? which one is more optimized for mac generation?

@Baxinoid Hello, I can’t tell you which version will be the most optimized for Mac, as I’m not familiar with it.

The best version will be Q8: it is the closest to the FP16 version, but it will of course require more resources.

Then come Q6K, Q5, Q4, and so on.

Any chance on new, better t2v version?

Hi, for now, to be honest, I don’t think I’ll focus on that.

Have you tried putting a white image with the I2V version and entering a prompt?

Many people have told me that it works well to convert I2V versions into T2V.

@taek75799 i'll try that. Thanks.

@taek75799 I'm trying that now. Seems to work pretty well but the resulting video is too washed out. I also tried a fully transparent background but it wasn't much better. Will keep experimenting.

@Grimmster Thank you for your feedback. I’ll need to ask the person who gave me the tip; it’s possible they might make a small change, maybe to the steps or the sampler, I’m not entirely sure.

@taek75799 实测可行,效果比T2V的好

@Grimmster It worked for me. I used, i think, t2v loras and very detailed prompting.

@Grimmster Wanted to give you another tip. You can use ms paint. Just make sure the white image is at the resolution you want when you save it.

finally i2v is worth using (IMO), super fast and high quality, I'm a smooth brain so I'm using swarmui, but it simply works, 4 min for a 3 sec gen on a 3090.

hope you make a 5b in the future to reduce that time even further (2 min gen)

Nice!

Has anyone managed to find a way to have a face next to a penis without the penis going in the mouth?

Hello, try to work around the issue using the prompt, as in this example.

Another question: are you using the NSFW CLIP?

If so, check the description to see the difference with and without it.

Here is an example prompt:

https://civitai.com/images/112883436

Another example here:

https://civitai.com/images/113718298

For the man at the end, the trick is the same:

"The man mid-motion shaking his head from left to right in firm refusal"

In I2V, any tip on how to create a threesome scene with two men from the image of a woman (alone)?

Hello, good question. I can’t really tell you exactly how to do it for now, since I’ve never tried it.

To make a single man appear, it’s easy: I simply specify it in the prompt, for example “the man appears on the right”.

For a 5-second video, that seems quite short. I think it would be better to make two 5-second videos:

the first with the appearance of the first man,

the second where another man appears.

Then, for the prompt, you can find an example here:

https://civitai.com/images/112885028

Or, even simpler, use a recent LoRA. I haven’t tested it, and this one is T2V, but feel free to try it.

I personally use many T2V LoRAs to do I2V, and it works most of the time.

Here is the LoRA, and don’t forget that WAN is capable of many things—the key is often the prompt.

https://civitai.com/models/2223036/double-stuffed-wan-22-a14b?modelVersionId=2502753

@taek75799 It worked! The consistency could be better, but it's not bad at all. Thanks!

@liant007790 For better coherence, don’t hesitate to increase the resolution.

And don’t forget one important thing: between each transition, the quality will degrade.

If the person’s face is not clearly shown at the end of the 5 seconds, it will therefore inevitably be altered.

GOTH FUTANARI WARNING (softcore)

https://files.catbox.moe/sld55p.mp4

https://files.catbox.moe/21oa5v.mp4

did some more testing, again it works, but is quite aggressive and it will make unintentional 'bouncing', breasts, ass, penis, etc...

also not sure about it but I think chinese prompting illustrious style seen to work better.

But still this is by far ( to me at least) the best model as it offers a speed / quality AND it works right out of the box on SwarmUI, zero fiddling with nodes like in Comfy, so if you are lazy like me and want to spend more time generating than installing unnecessary shit this is THE MODEL.

🩷 Guys here is a Pro TIP i used NSFW Fast Move v2 fp8 its So faster then Q6,Q4 and others believe me take a look ,

Fp8 - it take 750x450 40FPS - 64 seconds time only

Extreme Quality+Real Physics,Horney_face_expressions

Q6 - It Take 750x450 40FPS - 120sec

Good_Quality,Horney_face_expressions

Official Q6 - 750x450 - 40FPS - 140sec

Good_Quality_Real_Face_Expressions_Pain&Cry_ But_Censored

i have rtx 4050,ram 32GB

fp8 model on a 6gb card? do you have an insanely fast ssd with pagefile?

I have a RTX 3070 and 32GB RAM, I am currently using the original Q4_K_S version and I get 180 seconds of generation in 5 steps.

I will try the fp8 version.

@ThirenG187 bro i just made a workflow which runs in single ksampler with only 1 model using low fp8 model its real fast and so agressive consistent face i can sharem, if you guys need one

@kumarkishank959811 would like to see the workflow

@kumarkishank959811 share it, please.

I tried it and it didn't work; it disconnects after the high noise sampler. Did you add any options to the ComfyUI startup?

Hello, I would like to ask which checkpoint mode is suitable for my system specifications.

My specs are:

- CPU: Ryzen 5 3600XT

- GPU: RTX 5060 Ti (16GB VRAM)

- RAM: 32GB

Which checkpoint mode would you recommend for stable performance?

Thank you very much.

Hello, I have an RTX 4060 Ti with 16 GB of VRAM and 64 GB of RAM.

I use the Q8 version, it’s the most accurate one, so it should work well.

If generation time feels too long, you can use the Q6K version instead it will be a better compromise.

I’ll probably release it this weekend.

I have the same graphics card and processor, but 16 more RAM, with all the optimizations (sage, etc.), a 5-second 720p video takes about 230 seconds on the Q8, I haven't found a better combination of quality and speed yet, I recommend it.

@inquisitor075612 wait really? is there heavy disk swapping at all? I was playing around with it on the q_4 version, but i might have to give a larger quant a go, I happen to also have the same card and 48GB of ram

@FloatsYourStoat right now, my overflow takes about 12 gb not counting the card in first high KSampler) Provided that I keep absolutely everything, including Clip and VAE, in the card. The Q8 is a pretty heavy model - especially with LoRA, but half a minute for the difference between the native Q6 and Q8 is crucial for me. I can provide you with the log of my generation process in messages if it would be interesting.

@FloatsYourStoat In the end, of course, I think it's about "taste" and a search for balance specifically for self. It just seems to me that Q8 "understands" me better, if you understand what I mean)

@inquisitor075612 A whole extra minute? I do like things to be on the faster side, Q6 does sound enticing whenever it releases for V2. Do you stick to 5 second videos? or do you chain them together? and when you say 720p do you mean square or portrait?

@FloatsYourStoat 592x864, I'm combining videos into a chain, you can see the examples in my profile.

@inquisitor075612 Oh I see, those are pretty darn good. I guess an extra minute isn't bad at all then considering the length

@FloatsYourStoat now I have found, as it seems to me, a balance for really long videos from one iteration (30-40 secs), this is an interpolation to 60 FPS from 24 FPS with 105 frames per piece.

@inquisitor075612 I actually gave it go with the long vids just using the currently available V2 Q4, but unfortunately my computer just about completely locked up from OOM issues at the rife stage going from 16 to 60 fps with each of the segments having 81 frames (using the workflow from the vids in the previews here modified a bit to remove the attention nodes as i use the arguments for that in my startup bat). Not sure if its because I use a different rife node than the included one but it really took too long at that step to be feasible

@FloatsYourStoat I use normalVRAM and this is the best option on my graphics card.

I am delighted with your work, I switched to it from others and now I use it only, may I know if you are planning a new version of q8 and when?

Hello Inquisitor, I really like the videos you make.

I definitely plan to do it. I won’t give an exact date, but I think it should be ready in less than two weeks.

I’ll probably release the Q6K version this weekend, so feel free to try it.

I know some people with very powerful PCs who actually prefer it over Q8 because it’s faster while still being very accurate.

Thanks a lot for your feedback.

@taek75799 I am very pleased that you appreciated my crafts) I will definitely watch the latest release of Q6K, unfortunately, with the fp8 version, I do not get the quality I expect

@inquisitor075612 I recently found out that the Q8 versions are better than the fp8 versions. I thought it was the opposite! So now I use Q8 when available too!

For the next version, can I request that you don't separate 'fast' and 'normal' modes for low noise?

I don't think taek has been doing that, wdym

Hello and thank you for your comment.

I’m not sure I fully understand: do you mean using the high model in fast move and the low model in normal NSFW?

Sorry if that’s not what you meant. In any case, you can already do this, or even do the oppositeI’m pretty sure it will work.

@taek75799 Yeah, that's exactly it. I think the focus should be on the 'high noise' part. For 'low noise', there's no need to separate them—just a single version is enough.

It definitely works; I've tested it myself. Even mixing High Noise (FP8) and Low Noise (GGUF) with different LoRAs runs without any issues. Sorry if my previous message wasn't clear.

@g1263495582 No problem, it was clear, they just wanted to be sure.

I don’t really plan to do that, to be honest.

If I put the fast move on high and the normal on low, I would, for example, get more pronounced chest movements.

On the other hand, if I put the normal on high, that won’t be the case.

But your idea is good and could help others. I’ll try it myself tonight to see what it gives.

@taek75799 Actually, the physics, movement, and object placement are all handled by the High Noise step, while the Low Noise step is mainly for capturing textures and refining object shapes.

Here are examples showing the output of each stage: https://jumpshare.com/s/01BUXoJavHwE3ckQx4We -> High Noise output. https://jumpshare.com/s/0r0ns8KAS3QQfAgqscpQ -> Low Noise output.

Generally speaking, distinct types of artifacts originate from specific stages: major structural issues (like extra hands or object glitches) typically come from the High Noise stage, whereas issues like slight shape morphing, or texture changes usually come from the Low Noise stage.

@g1263495582 Thank you for your comparison; it’s very interesting.

@taek75799 A tip for anime style: Try stacking LiveWallpaper LoRA + 2D Animation Effect LoRA + Live2D LoRA + Wriggling Motion LoRA + SmoothMix LoRA at the Low Noise stage.

Keep the strength for each around 0.3 - 0.5. In my experience, this significantly helps stabilize the face.

@g1263495582 Thank you very much for that. I know many people enjoy making anime, so I’ll probably include it in the description, likely with examples to help.

I was able to do 3 tests, it’s good.

I added it to the description, thank you.

@taek75799 Thanks, I saw the update in the description. I really want to highlight that these LoRAs should be applied only at the Low Noise stage, since that is the part responsible for handling textures and details. For the Live2D LoRA, this is the specific one I use: wan2.2 live 2d风格动态壁纸 - v1.0 | Wan Video LoRA | Civitai, though the one you linked works fine as well.

Also, could you please clearly emphasize in the description that Wan2.2 is native 5s (16fps) and maxes out at roughly 7s? If push it beyond that, the result will basically look like a scene from a horror movie. (I see a lot of people trying to generate videos longer than 7s with Wan2.2 thinking it works, so it would be great to emphasize this point.)

I use high noise 0.5 and low noise 0.9. Cfg 1. But the result still improper and often gave eyes rolled out and tongued out. My negative is already followed this post.

Could you help me?

Can I see your video and workflow? Also, CFG 1 means the negative prompt is not used.

@g1263495582 https://drive.google.com/file/d/1XY5fpsGQJ_1AaxRxpexvGiQmiZcRwLDs/view?usp=sharing

I've deleted all videos because of storage. Perhaps the workflows only is fine?

And also, I tried disabled negative prompt and only use CGF. The result was more wild tho.

@nessusprod Wan2.2's limit is usually 7sec at 16fps. Since I saw 145 frames in your settings, I suggest lowering it.

When exactly does the video break? If it happens at the beginning, try turning off the LoRA. If it breaks near the end, just shorten the video length.

I think I see Can u connect like this https://postimg.cc/Lhm2vJzd or https://postimg.cc/WhCRRzJN ?

Thank you, g1263495582, for helping.

Indeed, the video length is too long: WAN was trained on 81-frame videos at 16 fps.

When you say you are using high noise at 0.5 and high at 0.9, I assume you are referring to the LoRA in the workflow?

If that’s the case, I don’t see any issue apart from the video length.

If I may give you one piece of advice: replace the RIFE VFI node. It is quite slow in the interpolation process.

There is an equivalent node in the Fill Node pack. Check the description at the bottom—there is a link to my workflows. Download any of them and you’ll see it; it’s much faster.

@g1263495582 It often near the end.

Yeah I think I could.

But, my I ask you some questions regarding your pictures?

1. What is the difference between first, second picture and also with my existing?

2. Where do you connect the width and height links?

@taek75799 any chance for we could make a 7-10 seconds good video with wan22?

and thanks for your suggestion! I’m new and still learning your workflows. I’m not gonna lie, it’s pretty overwhelming for me😅.

@nessusprod Hello, look: this one is over 30 seconds:

https://civitai.com/images/113718298

It was made with the start-end frame workflow. I included the process to make it. Of course, it’s not perfect regarding brightness changes, but it can vary depending on the starting image you provide.

This one is 15 seconds:

https://civitai.com/images/112884429

It was made with my workflow, which you can find at the bottom of the description. The transitions were done with fun vace, but I haven’t integrated this feature into the workflow yet. Therefore, the transition won’t be as good with the posted workflow.

Basically, to explain how it works: we make a 5-second video. The next step is to take the last frame of that video and continue for another 5 seconds, and so on.

@nessusprod

1. not difference

2. from https://postimg.cc/MctPJ06X this Comfyui-Resolution-Master but you need to snap 16 for wan

@taek75799 Okay got it. I’ll try to using vace first. Thanks for the answer bro.

@g1263495582 alright then I will make it only 81 frames. Thanks bro.

I’m just wanna say thanks for both of you because I can generate great results! The length is really a culprit. @g1263495582 @taek75799

And now, what remains is the results have no audio 😅.

any tips on how to stop getting upside down vags and no a_holes when seen from behind?

It happens, you need luck and might want a lora

Hello, try using a LoRA.

This is also a problem I noticed during testing.

I could correct it, but unfortunately it caused many more issues, such as turning a penis into a vagina.

Check the description: there is a vagina LoRA, or you can use a LoRA like doggystyle, which helps a lot, with a weight of around 0.5.

so doesnt work with loras ? fastmove v2 its a t2v or i2v ? result is bad in both

Hello, this is an I2V version.

It works with LoRAs, but don’t set your LoRA too high, for example 0.5.

There are examples in the description with different LoRAs.

If the quality issue isn’t caused by the LoRA, you can send me your workflow if you want, so I can check it.

@taek75799 thx. and nsfw fastmove v2 version for t2v ? i see only sfw

@gambikules858 There is no NSFW T2V version of my model.

However, you can try using a white image and adding a prompt; it should behave like T2V.

I haven’t tested it myself, but several people have shared this trick with me.

Hi, i am very limited with computing power, can you please test out the best settings to use for SVI (Stable Video Infinity LoRA) for this model and add it to description, would really appreciate it man

Hello, I tried a bit. I left the LoRA at 1 and didn’t go any further, sorry, lack of time.

If some people can do it, otherwise I’ll do it when I can. Thank you for your comment.

@taek75799 your a star brother, I understand with the time, so it's all good will just wait for an update if you ever get around to doing it, thanks for taking the time to try it out thou 🤛

@ThirenG187 Hello, I tried a bit more, but it requires a workflow specifically designed for this, so a WAN Wrapper; otherwise, it doesn’t work as well with the native workflow. To be honest, I won’t use this LoRA for now, even if it can help. I prefer doing my transitions with Fun VAE, they are almost invisible, even if you sometimes have to redo them several times. And thanks for your comment.

Do you use loras on both high and low? I seem to get blurrier faces and motion when using on high, and better results when only using on low? Using the fp8 fast v2

what lora did you use?

Hi, for some reason when I create 24 fps+ videos they seem to be quite fast, almost like if I used the 2x speed option on youtube... even if I input "slow motion"

Hello, yes, this is normal: the WAN 14B model outputs videos at 16 fps.

If you want a higher frame rate, you need to use interpolation.

For this, there is a custom node called RIFLE. You can find it here:

https://github.com/filliptm/ComfyUI_Fill-Nodes

It’s a node pack that includes many nodes. There is another custom node that also does interpolation, but I recommend this one because it provides very fast interpolation.

If you want, you can take my workflow at the bottom of the description.

You don’t have to use it—just look at where the RIFLE node is located (top left).

That way, you’ll know where to place it in your own workflow.

@taek75799 That's a pretty huge package. I'll have to take a look through them. The extensive documentation on the git page is a little alarming though. That is definitely not standard practice. You're supposed to make people guess what the nodes do, not tell them how to use them! Writing it all out clearly spoils the surprise.

@taek75799 I'm new to vid-gen, but I'm having a blast testing out all these models. Thank you for putting in the work and sharing it with us.

I've been using REAL Video Enhancer for upscaling and interpolation. I've seen y'all recommend these custom nodes and I will get around to checking them out. So far the RVE app has been working well for me. It is highly configurable and comes preloaded with several of the best open sources models. https://github.com/TNTwise/REAL-Video-Enhancer

Oh, and you can get it as a flatpack on the main repos.

I downloaded and used your model and it works fine. But, whenever it finishes, the faces are all distorted. is there a solution to fix it?

Hello, can you send me the wf you use to make the videos? So I can check it.

@taek75799 please help me too

More steps!

@qek how many?

@Alkor64 can i see your video+workflow?

@Alkor64 No problem with the workflow, are the parameters the same?

Ideally, you would send the workflow used to create the video, or otherwise it’s exactly the same, nothing has changed? no LoRA?. Or the video that doesn’t work, in DM if you want.

@taek75799 so, do you want the video so you can inspect it?

@taek75799 & @g1263495582 here are the results

@Alkor64 It seems that the video is no longer available.

@Alkor64 🤔 i see. Are you using a LoRA? If so, try to avoid using it at high noise levels, or simply turn it off. Alternatively, you can try using it at the low noise settings listed in the description. Also, what sampler and scheduler are you using? Sorry for answer late.

@g1263495582 Thank you for your reply. He sent me the workflow there were issues with the step settings. I didn’t get any feedback, but I think everything is good now. Thanks to you for helping.

@taek75799 @g1263495582 i'm using the pec bounce lora both high & low at strength 1

https://civitai.com/models/2111254/pec-bounce

@Alkor64 I don't have any issues with the resulting video. Did you set it up like this? https://postimg.cc/QFPqy8MY

@g1263495582 it works now. Thanks. sorry for the late reply

Please help my workflow: https://postimg.cc/RqsvL18c

I have 4060ti 8vram and this workflow make 5 second video 5 minutes and low quality

Hello, could you please send me the workflow in JSON?

Can you recommend a fast and high-quality NSFW model for the 4060ti 8vram? I don't know what to download. @taek75799

@taek75799 ok i send

@Paffys yes Q4KM

@taek75799 I couldn't find the JSON, but in the template section, the basic one is i2v wan22. So I just converted the diffusion models section directly to the GGUF loader. Is there a model you can recommend that I can use comfortably, or is it in the settings?

@taek75799 It's already downloaded, but I don't know if I'm doing something wrong. Can you create a simple workflow for me? Five minutes is too long, isn't it? Also, it's removing the first video, but the second video is noisy. Plus, I'm getting a CUDA error.

@Paffys Open the workflow you used where the video quality is not good.

Then go to the ComfyUI logo (bottom left or top left), click on it, then click File, then Export.

You will get your JSON file.

After that, you can upload the JSON here; it will be easier to identify the cause.

@Paffys For the workflow, you can check mine at the very bottom of the description, or try the simplest one, the default ComfyUI workflow.

You just need to download the GGUF node and, most importantly, disable Lightning LoRAs, as they are already included in the model. https://blog.comfy.org/p/wan22-day-0-support-in-comfyui

@Paffys It's a common workflow. It is not the kind of info I'd need

@qek What should I use?

Repost for taek: "Did you launch it with --highvram (or maybe, --normalvram, it's by default) --highvram doesn’t seem to unload models once loaded.

You ran Wan 2.2 14B, there are two transformers, Low and High. Maybe you need a separate workflow for it..."

@Paffys Wan 2.2 is too big (28B params in total), I have been using Wan 2.1 14B, it has no refiner

@qek Are we sure this model is for 8 VRAM, or is there an error on my end? I directly downloaded and installed the comfyui setup, so I have no place to write code.

@Paffys Your workflow is the basic ComfyUI one, just in a subgraph. I don’t see any issues; everything looks fine.

The resolution seems correct.

Is the video really very bad, or are you aiming for extremely high quality?

Please give me a link ahaha i want download iv2 model for my pc

@taek75799 I think they mean something else like OOM or slow generation speed

@taek75799 https://ibb.co/9HmnVqHk my error's

@taek75799 can you suggestion for another model wan2.1 it is good?

@qek If I move the drive from my hard drive to an M2 SSD, will that fix the problem?

@Paffys Wan Anime 5B, please try with my suggested workflow, I liked it

@Paffys "If I move the drive from my hard drive to an M2 SSD, will that fix the problem?" Why? No, try making your swap file higher instead

@Paffys "can you suggestion for another model wan2.1 it is good?" Just base Wan 2.1 14B with a general porn lora

@Paffys If you get an OOM error, try a Q3 GGUF.

I don’t provide them here, but others do; for example, check SmoothMix in GGUF.

@taek75799 smoothMix is just a merge with 3 porn loras with low weights, please do not suggest it, its users have been giving negative feedback due to its outputs

@qek Ah thanks, then it’s really a very simple merge.

@qek I might offer a Q3 version. I don’t know if it’s the solution, but if some people need it, I don’t mind.

v2 Q8 coming ?

fp8 is enough

The Q6_KM this weekend, I don’t know when I’ll be able to do the Q8.

@taek75799 Q8 is pointless, has the size of fp8 but slower

@qek On my side, it’s really very light, it’s 6 seconds slower per generation compared to FP8.

@qek fp8 is the worse i dont know why people even use it

@qek fp8 is comparable to Q5 even the dev mention it.. you need to dig deeper on things before commenting with opinion. Below Q6 you will get face warping.. Q8 is the best you can get with detailed generation its so obvious. fp8 is fast for a reason... There are people who prefer quality over speed.

@taek75799 Will wait patiently for Q8 .. dont matter how long it takes 😁

@ApexArtist1 "fp8 is the worse" No

"i dont know why people even use it" Not to get OOM nor slowdowns due to GGUF

"fp8 is comparable to Q5 [and your similar comments]" May be valid

"you need to dig deeper on things" Give me a shovel then to dig deeper

"before commenting with opinion" Your comments are also your opinions/I did

@qek ok 😁

@ApexArtist1 You're right, Q8 is comparable to fp16 and a lot better in quality compared to fp8. This qek guy is spouting nonsense.

@qek On 16 GB VRAM 32 GB RAM, Q8 Fast move V1 gives me much better character consistency. It's almost perfect with keeping clothing and faces even with hard cuts while FP8 V2 causes blurring issues even with faces that you are directly looking at. And with Sageattention, Radial attention and torch.compile I get exactly the same s/it with no OOM issues.

@nomando222 what i thought facial consistency is really bad with fp8 it just morph to different persons

@taek75799 you are GOAT

@qek And where can we see examples of your work on which fp8 is enough? :)

@ApexArtist1

I did some tests with the FP8 v2 and Q8 v1 models from @taek75799, and the Q8 v1 really surpasses the FP8 v2 in terms of maintaining quality and detail of faces, even though it's the older version. The FP8 v2 has more refined movements, but it doesn't surpass the Q8 in terms of quality... and I didn't see much difference in the generation time between the two models. I'll also be eagerly awaiting the Q8 V2. Congratulations to @taek75799 for the excellent work!

@lukas477 you are waiting for NSFW Fast move V2 Q8 ?

@ApexArtist1 yes!

@ApexArtist1 Yeah I stopped using V2. V1 Q8 is the best and I'll wait for V2 Q8 to surpass it.

@nomando222 nice to know alot of people waiting for NSFW fast move v2 Q8

Thanks for your amazing working in these models!

I have tried generating videos using nsfw fast move Q8, and the model's text adherence and the accuracy of the camera movement impressed me greatly.

I'm wondering, if I want to create 2d/2.5d anime-style sfw videos, which model is most recommended? Is it V2 Cam Q8? Because I really don't want the characters to suddenly get together and do things related to sex.

Hello and thank you for your feedback. The nsfw fast move v2 version has better text understanding, feel free to try it.

If you want to create 2D sfw animations, try the cam v2 model: it is not nsfw and has better camera understanding. I think that if you use a high enough resolution, the fidelity will be good, but not perfect. Check the description (nsfw v2 model), you will find LoRAs recommended by g1263495582 that work well for 2D.

@taek75799 Yes, I have seen g1263495582's suggestions on generating anime style but i don't understand that "if you use a high enough resolution, the fidelity will be good, but not perfect". What does this indicate about the cam v2 model's limitations?

@gallopfeel263 Let’s say that Cam V2 is a bit like the base Wan 2.2, meaning it’s not the best option for making anime. However, you can look at the different previews: there are many anime examples, and yet I didn’t use any LoRA.

how should this be used, in your workflow you load .safetensor files, but the model files here are .gguf, any help on how to use them would be greatly appreciated.

Just change node from load diffusion model to unet loader (gguf)

Hello, here is the FP8 of the model: https://civitai.com/models/2053259?modelVersionId=2477539

@g1263495582 I could kiss you, worked like 2 days on solving this until I saw your message, thx for helping out!

I use wan2gp and even I put it into chkpts, I cannot choose it. whats wrong?

Post your launch arguments

Have you built yourself a finetune for wan2gp? or downloaded and modified someone elses? if not check out futafilth's post on the wan2gp discord. He shared a finetune. You'll have to edit the json for whichever model you download and whereever you save it but it works. I don't think theres any quick plug and play option at the moment without a custom finetune.

@qek none, its default empty at autolaunch. i did use different checkpoints, but this one doesnt appear in the list

@TheFunk the one on pinokio by beepbeepmeep. works fine for months with different checkpoints, loras etc. and optimized for my needs on performance etc. but this checkpoint doesnt appear to be listet

seems theres no support for GGUF files in wan2gp

Fast move V2 Q8 coming ?

The thread is a duplicate of the same one made by ApexArtist1, have you read it?

@qek hey thanks ..I see it

@aikomatrio uf, that thread is long

I used your workflow and got the following error:

# ComfyUI Error Report

## Error Details

- Node ID: 117

- Node Type: KSamplerAdvanced

- Exception Type: RuntimeError

- Exception Message: Given groups=1, weight of size [5120, 36, 1, 2, 2], expected input[1, 32, 3, 110, 74] to have 36 channels, but got 32 channels instead

Hello, check here: https://www.reddit.com/r/StableDiffusion/comments/1mc2gkq/given_groups1_weight_of_size_5120_36_1_2_2/

I know someone who had the same error as you; the solution from the Reddit link worked for him.

Thank you very much, the “killer” was the node “flow2-wan-video.” I deleted it manually, and the workflow is now running on my 5060 ti 16 GB. Merry Christmas from Germany!

@Miakss Thank you, Merry Christmas to you and your family 🎄

What am I doing wrong? I keeping getting: VAEEncode

ERROR: VAE is invalid: None If the VAE is from a checkpoint loader node your checkpoint does not contain a valid VAE.

Do you use a checkpoint with a baked vae?

@lobo is this not a checkpoint?

Hello, here is the official ComfyUI workflow: https://www.swisstransfer.com/d/67b8decf-2a35-48e6-b01d-d3487e7f4956

so that you can check if everything is in order. Are you using the correct VAE? WAN 2.1?

In the workflow, the Lightning LoRAs are enabled. You shouldn’t use them with these models.

This model doesn't have a merged VAE. You must use a 'Load VAE' node, and use the 'Load Diffusion Model' node for the model itself.

@taek75799 I noticed in the workflow the Load VAE node is obscured behind the Find Perfect Resolution node. So maybe they didn't see it.

I'm a little confused. The NSFW FAST MOVE V2 FP8 and NSFW V2 FP8 models are declared as T2V models, but the sample images are declared as img2vid. Can anyone tell me whether they are T2V or I2V models? And do the recommended settings, steps: 2+2 CFG: 1, only apply to NSFW FAST MOVE V2 FP8 or also to NSFW V2 FP8? I'm asking because I can't generate good quality with these models. Not even close to the sample images or the images attached below. Maybe someone can help me. Thank you.

Hello, look to the right of the previews, you’ll find the information. Under the publication date, it says "Image to Video" on all models except one T2V model. It only takes one among all these models for it to be archived like T2V and I2V here. You can find my workflow at the bottom of the description. Most videos still have the metadata, except those longer than 5 seconds. You can download them and drag them into your ComfyUI interface; they are all generated in 4 steps (2+2).

If you want, send me your file with which you generated a low-quality output so I can check it. If you want, you can do it here. Thank you.

@taek75799 Thank you very much. My mistake, I misread that 🙈🙈. I'll test it again tomorrow.

I definitely fell in to this pit, I assumed based on the naming that it was a merged I2V/T2V model. Might I respectfully suggest making it a little clearer that there are different model sets for I2V and T2V and to look carefully at the version. You stopped marking them as I2V/T2V at some point and the T2V designations are a 15 second horizontal scroll. CivitAI does not make this any easier, I think we can agree.

As for Revisions, where does that leave T2V, is that a few versions behind the newer re-trains you've done?

using the NSFW FAST MOVE V2 FP8 + the NSFW BF16 Fixed clip and when I use loras the video comes out as if it's under water with ripples. works perfectly without loras

Hello, thank you for your feedback. What if you lower the LoRA strength, for example to 0.5?

The model acceleration is really great; there's no slow motion. Could you tell me which LoRa model it's fused with?

Hello, for the v2, WAN General NSFW, Wan 2.2 POV Missionary, and other LoRAs that I have trained.

The prompt adherence is amazing. how did you do it ? what is the technique

I want to train my first wan 2.2 lora do you have a guide ? which tool should i use kohya or AI toolkit which one do you use ?

can you help me..

Hello and thank you for your feedback. Regarding prompt adherence, I only trained a LoRA using hundreds of videos that were more focused on dynamism. The captions were made with Qwen VL. I’m still hesitating to make a V3 for the Cam model, as it takes a lot of time. I use Musubi Tuner; I can’t train WAN LoRAs with AI Toolkit, but I think WAN LoRAs will be very good as well. I got good results with Kontext and Z Image.

@taek75799 you have suggestions for Patreon for who has good setups for that and stays curent with the advances? maybe I'll finally have to figure out runpod with all the expanding memory requirements... several years ago I had followed Aitreprenuer and SECourses waiting for them to put out more stuff but neither of them ever progressed beyond the basics.

@TrafficMeany Hello, I’m not subscribed to any Patreon. If I can give you some advice, check Reddit:

https://www.reddit.com/r/StableDiffusion/

https://www.reddit.com/r/comfyui/

You’ll find all the latest news there. You can also join the Discord: https://discord.gg/2ZgzWrYw

@TrafficMeany Search Apex Artist

@taek75799 did you train with the prompt format: (at 0 seconds:

the captions format for video or the agent instruction which one do u use or can you share the agent instruction.

@aikomatrio Hello, the base WAN understands prompts like at 1 sec, etc., but also 1, 2, etc. It’s just a habit I got into: by using at 1 sec, I indeed used this prompt system during LoRA training to improve understanding. For the captions, I used Qwen VL2.5, then Qwen VL3, giving it instructions.

@ApexArtist1 ...anyone more advanced you recommend?

@TrafficMeany jokerai

@TrafficMeany I really like what Apex Artist does on their YouTube channel: https://www.youtube.com/@ApexArtistX

any chance for a help?

which version to download for a 8gb vram card?

Thanx!

Hello, try the q4KM version. It’s the lightest version, but you still need a lot of RAM. I don’t know if it works with 16 GB, but with 32 GB it will work.

@taek75799 thank you, i have 32gb of ram. but, it wont work, which text encoder i need to download?

@damir_96586599 This one: umt5_xxl_fp8_e4m3fn_scaled.safetensors. It’s surprising that it doesn’t work, even when lowering the resolution? Have you already tried Q3? It’s not available here. Is your workflow the one using ComfyUI nodes or the WAN Wrapper?

@damir_96586599 With 32GB RAM and 8GB vram, try using FP8/Q8. The text encoder is linked in the description.

@g1263495582 i tried all, when it comes to van video encode, it simply starts and then says out of memory

@damir_96586599 Send me your workflow if you want: https://www.swisstransfer.com/fr-fr

@taek75799 workflow is downloaded from here, suggested resources

@damir_96586599 If it’s my workflow, it only uses the basic ComfyUI nodes (the other nodes don’t cause any issues), so it should work. Try the simplest workflow possible: https://blog.comfy.org/p/wan22-day-0-support-in-comfyui

And here is the documentation with the correct text encoder and VAE: https://docs.comfy.org/tutorials/video/wan/wan2_2

@damir_96586599 Many people tell me that they can’t make videos or that they have other issues. They also use my workflow, but when they send me the workflow that doesn’t work, some settings are no longer the same. Sometimes things get changed unintentionally.

Thank you for all the work your put into this! Very well thought out.

Thank you for this excellent models this is top notch stuff. The v2 is excellent for blowjob, however i find that it is less good with less movement than the V1 for other nsfw (vaginal for ex). V1 is quite good at it, but it has less capacity than V2 to keep consistant identity. Thanks again.

Hello and thank you for your feedback. I generally agree with what you said. My approach was precisely to keep as much facial consistency as possible and to make the model perform well in all situations, with better prompt understanding. Some people didn’t like fast movements, so I decided to give them more control. Therefore, an NSFW LoRA should speed up the movements.

@taek75799 thanks for the tips. Semms alas that quite a few lora in your links are already dead. Keep up the good work.

hi again, can i ask about the workflow u r using in these clips? since ur clips doesn't have "wide opening mouth" problem, i thought i try ur workflow, but the super power lora node, is impossible to get? why? i already have the rgthree custom node installed and updated, why am i still getting the missing node error? btw, are u still using lora with ur model? that's the more immediate question, so i can figure out if i need this node or not.

Hello, try using the latest version of the workflow (Painter I2V). I removed the Super Power LoRA, but if you want it, download V1: inside it, you’ll find a rgtree folder. Go to your custom nodes directory and replace it. The Super Power LoRA isn’t essential; I just find it more convenient for WAN.

You can use the 'LoRA Loader Stack (rgthree)' node instead. However, if you specifically want a node similar to 'Super Power LoRA', try using this one:https://github.com/HenkDz/nd-super-nodes .

@taek75799 thx, but are u sure? it's still exists in the 3rd section. funny thing, it is still exists in the removed super power lora workflow!! if the lora isn't important for what i mentioned, then i can remove the node, or use other lora loaders.

@zereshkealt yes, you can remove it or use other lora loader.

@zereshkealt I’m not sure I understand. I just checked: the workflow with Painter does not include Super Power LoRA, only Power LoRA. The V1 includes Super Power LoRA with the rgtree folder (to make Super Power LoRA appear), and the latest one does not include Super Power LoRA.

It’s not mandatory: you can use any LoRA loading node, such as Load LoRA model only or, as suggested by g1263495582, https://github.com/HenkDz/nd-super-nodes.

It’s the same creator as Super Power LoRA.

@taek75799 thx. is this the workflow? : https://civitai.com/models/2079192?modelVersionId=2397553 if so, i just downloaded it again and the 3rd loop, has a super power lora in it, it's not in the main workflow, but still gives the error of missing node. i'll try to remove it.

@zereshkealt I just checked again: I do have in all sections, the 3rd one too, Power LoRA and not Super Power LoRA. I’m not saying you’re lying, but in this field, there are often bugs. To be sure, I’ll ask a colleague to check and I’ll be able to modify it. Thank you for your feedback.

@taek75799 definitely not lying, u know how it is when u don't have the node installed(funny thing is, u can't see what it is there, except the error of missing node! if u want me to show u, i can make a video and show u, that's what i'm seeing, as i've said before thanks for the answer. i removed the nodes, just getting Oom right now, i think it's VAE decode, i have to use the "Tiled" one. thx. still going to wait for the Normal NSFW Q8 version, but i'm going to test this Q6 right now.

@zereshkealt Lol, don’t worry, I believe you ;)

@taek75799 managed to make ur workflow, work, the fram generation is a nice touch, can u give me some advise about the ghosting in the generated video? btw, i'm creating videos in 12fps, should i change the 64fps to 48fps in video combine node?

@zereshkealt WAN generates videos at 16 fps. Then, with the Rife node, you can choose the target interpolation frame rate for your video. 48 works.