Light Concepts

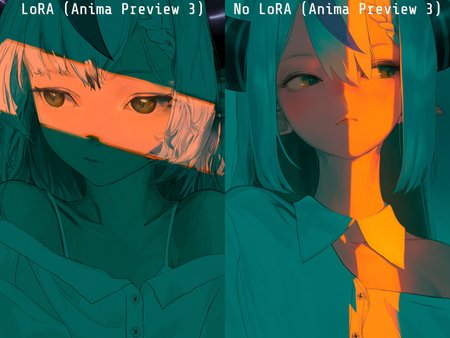

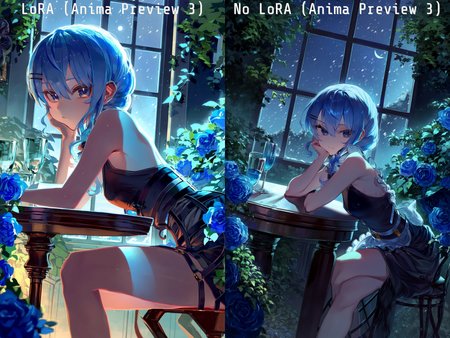

Training data is a collection of various light concepts I enjoy using that are not overly represented in large datasets, trained as a single LoRA.

ℹ️ LoRAs work best when applied to the base model they were trained on. Please read the About This Version section for the appropriate base model and workflow/training information.

I've trained many of these concepts before. They are generally nice to use for enhancing the lighting of generations or adding interesting effects.

Trained on a large dataset of mixed natural language (NL) and tag captions, at mixed [1024, 1536] resolutions. Previews are mostly generated at 1024x1536 with a combination of tags and NL prompts.

Concept tags

Collected from works containing, but not limited to:

dispersion

hue shifting

refraction

subsurface scattering

translucent

bioluminescence

caustics

dappled moonlight

glowing hot

ultraviolet light

Works best in combination with NL if you name a character, describe their basic appearance, and finish with descriptions of light sources and their effects on the scene:

A vibrant and dynamic illustration of Hoshimachi Suisei from Hololive, featuring her squatting in front of a glowing triangular prism.

A beam of white light enters the prism from the left and refracts into a vibrant rainbow on the right. The background is a solid dark grey to emphasize the lighting effects.

Description

Trained on Anima Preview 3 Base with a mix of natural language and tag captions.

Training config:

# trained using diffusion-pipe commit 518ba041d867e6d76c31806a8d0b6a0263fb22eb

output_dir = '/mnt/d/anima/training_output/light'

dataset = 'dataset-anima.toml'

# training settings

epochs = 1000

# Per-resolution batch sizes

# micro_batch_size_per_gpu = 16

micro_batch_size_per_gpu = [[512, 32], [1024, 32], [1536, 16]]

pipeline_stages = 1

gradient_accumulation_steps = 1

gradient_clipping = 1

warmup_steps = 100

# misc settings

save_every_n_epochs = 1

#save_every_n_steps = 1000

#save_every_n_examples = 4096000

#checkpoint_every_n_epochs = 1

#checkpoint_every_n_minutes = 120

activation_checkpointing = true

#reentrant_activation_checkpointing = true

partition_method = 'parameters'

# partition_method = 'manual'

# partition_split = [10]

save_dtype = 'bfloat16'

caching_batch_size = 1

map_num_proc = 8

steps_per_print = 1

compile = true

[model]

type = 'anima'

transformer_path = '/ComfyUI/models/diffusion_models/anima-preview3-base.safetensors'

vae_path = '/ComfyUI/models/vae/qwen_image_vae.safetensors'

llm_path = '/ComfyUI/models/text_encoders/qwen_3_06b_base.safetensors'

dtype = 'bfloat16'

#cache_text_embeddings = false

llm_adapter_lr = 1e-6

#timestep_sample_method = 'uniform'

#flux_shift = true

#multiscale_loss_weight = 0.5

sigmoid_scale = 1.3

[adapter]

type = 'lora'

rank = 32

dtype = 'bfloat16'

[optimizer]

type = 'adamw_optimi'

lr = 2e-5

betas = [0.9, 0.99]

weight_decay = 0.01

eps = 1e-8

Dataset config (dataset-anima.toml):

resolutions = [512, 1024, 1536]

enable_ar_bucket = true

min_ar = 0.5

max_ar = 2.0

num_ar_buckets = 9

[[directory]]

path = '/mnt/d/training_data/images_light_captions'

repeats = 8
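To make the aspect-ratio bucket settings above (min_ar, max_ar, num_ar_buckets) a bit more concrete, here is a minimal sketch of how such buckets could be laid out. It assumes the buckets are spaced evenly in log space between min_ar and max_ar, and that each image goes to the nearest bucket in log space. Both are assumptions for illustration, and the helper names `ar_buckets` and `nearest_bucket` are hypothetical, not diffusion-pipe functions.

```python
import math


def ar_buckets(min_ar: float, max_ar: float, num_buckets: int) -> list[float]:
    """Return num_buckets aspect ratios (width / height), evenly spaced in log space."""
    log_min, log_max = math.log(min_ar), math.log(max_ar)
    step = (log_max - log_min) / (num_buckets - 1)
    return [math.exp(log_min + i * step) for i in range(num_buckets)]


def nearest_bucket(width: int, height: int, buckets: list[float]) -> float:
    """Pick the bucket closest to the image's aspect ratio, compared in log space (assumed rule)."""
    log_ar = math.log(width / height)
    return min(buckets, key=lambda b: abs(math.log(b) - log_ar))


# Values taken from the dataset config: min_ar = 0.5, max_ar = 2.0, num_ar_buckets = 9
buckets = ar_buckets(0.5, 2.0, 9)
print([round(b, 3) for b in buckets])

# A 1024x1536 portrait image (the preview size) under the assumed nearest-bucket rule
print(round(nearest_bucket(1024, 1536, buckets), 3))
```

Under these assumptions the nine buckets come out at roughly 0.5, 0.595, 0.707, 0.841, 1.0, 1.189, 1.414, 1.682 and 2.0, so a 1024x1536 image (ratio about 0.667) would fall into the 0.707 bucket.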

Dataset format:

tags = full tag list

first_n_tags = only the first 8 tags of the list

nl_caption = natural language captions generated with tag grounding

# captions.json

{

"image_1.jpg": [

"{tags}",

"{first_n_tags}{nl_caption}",

"{tags}{nl_caption}",

"{nl_caption}{tags}"

]

}
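The caption layout above can be sketched in Python as follows. This is only an illustration of the four variants per image, not the script actually used. The helper name `caption_variants`, the comma-joined tag string, the sample tags and caption, and the `sep` separator between tags and the NL caption are all assumptions, since the template does not show how the parts are joined.

```python
import json


def caption_variants(tags: list[str], nl_caption: str, n: int = 8, sep: str = " ") -> list[str]:
    """Build the four caption variants for one image.

    tags       -- full tag list
    nl_caption -- natural language caption
    n          -- how many leading tags to keep for first_n_tags (8 in the dataset notes)
    sep        -- separator between tag string and NL caption (assumed, not from the source)
    """
    full = ", ".join(tags)           # {tags}
    first_n = ", ".join(tags[:n])    # {first_n_tags}
    return [
        full,                              # tags only
        f"{first_n}{sep}{nl_caption}",     # first n tags, then NL caption
        f"{full}{sep}{nl_caption}",        # all tags, then NL caption
        f"{nl_caption}{sep}{full}",        # NL caption, then all tags
    ]


# Hypothetical example data for a single image
example_tags = ["1girl", "solo", "prism", "refraction", "rainbow", "dark background",
                "squatting", "glowing", "caustics", "dispersion"]
example_nl = "A beam of white light enters a prism and splits into a rainbow."

captions = {"image_1.jpg": caption_variants(example_tags, example_nl)}
print(json.dumps(captions, indent=2))
```

Each image then maps to a list of four strings, so a trainer that picks one caption per sample would see tag-only, truncated-tag, tags-first and NL-first phrasings of the same image.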