Light Concepts

The training data is a collection of lighting concepts I enjoy using that are underrepresented in large datasets, trained together as a single LoRA.

ℹ️ LoRAs work best when applied to the base model they were trained on. Please read About This Version for the appropriate base model and workflow/training information.

I've trained many of these concepts before. Generally they are nice for enhancing the lighting of generations or adding interesting effects.

Trained on a large dataset with mixed natural-language (NL) and tag captions, at mixed [1024, 1536] resolutions. Previews are mostly generated at 1024x1536 using a combination of tags and NL prompts.

Concept tags

Not limited to these, but the data was collected from works tagged with:

dispersion

hue shifting

refraction

subsurface scattering

translucent

bioluminescence

caustics

dappled moonlight

glowing hot

ultraviolet light

Works best in combination with NL prompting: name a character, describe their basic appearance, and finish with descriptions of the light sources and their effects on the scene:

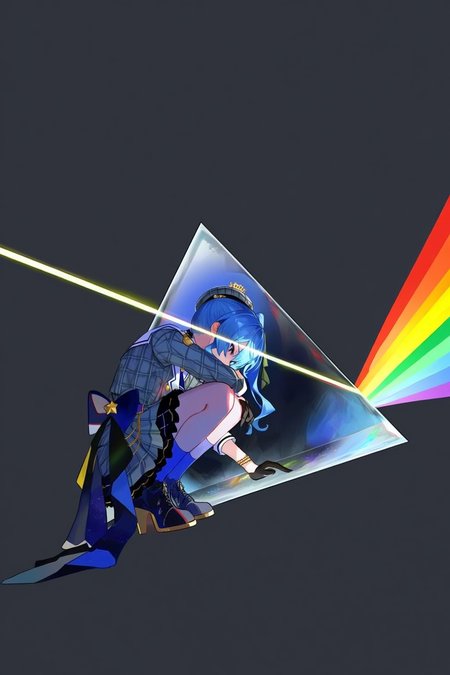

A vibrant and dynamic illustration of Hoshimachi Suisei from Hololive, featuring her squatting in front of a glowing triangular prism.

A beam of white light enters the prism from the left and refracts into a vibrant rainbow on the right. The background is a solid dark grey to emphasize the lighting effects.

Description

Trained on Anima Preview (version 1)

Assume that any LoRA trained on the preview version won't work well on the final version.

Trained on the mixed tags and natural-language dataset.

Config:

```toml
# dataset-anima.toml

# Resolution settings.
resolutions = [1024, 1280]

# Aspect ratio bucketing settings
enable_ar_bucket = true
min_ar = 0.5
max_ar = 2.0
num_ar_buckets = 7

[[directory]] # IMAGES
# Path to the directory containing images and their corresponding caption files.
path = '/mnt/d/training_data/images'
num_repeats = 1
resolutions = [1024, 1280]
```
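To get a feel for what the bucketing settings above produce, here is a minimal Python sketch. It assumes the buckets are spaced geometrically between `min_ar` and `max_ar`, that each entry in `resolutions` is the side of a square with the same pixel area, and that bucket sides are rounded to multiples of 16. The trainer's actual bucket construction may differ, and `ar_buckets` is a hypothetical helper, not part of the trainer.

```python
import math


def ar_buckets(resolution=1024, min_ar=0.5, max_ar=2.0, num_buckets=7, multiple=16):
    """Hypothetical aspect-ratio buckets as (width/height, width, height)."""
    buckets = []
    for i in range(num_buckets):
        # Assumed geometric spacing, so 1.0 sits in the middle of [0.5, 2.0].
        ar = min_ar * (max_ar / min_ar) ** (i / (num_buckets - 1))
        # Keep the pixel area close to resolution**2 while matching the ratio.
        width = round(resolution * math.sqrt(ar) / multiple) * multiple
        height = round(resolution / math.sqrt(ar) / multiple) * multiple
        buckets.append((round(ar, 3), width, height))
    return buckets


for bucket in ar_buckets(1024):
    print(bucket)
```

Calling `ar_buckets(1280)` gives the corresponding set for the second entry in `resolutions`.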

```toml
# Change these paths
output_dir = '/mnt/d/anima/training_output'
dataset = 'dataset-anima.toml'

# training settings
epochs = 50
micro_batch_size_per_gpu = 16
pipeline_stages = 1
gradient_accumulation_steps = 1
gradient_clipping = 1.0
warmup_steps = 100
train_llm_adapter = true

# eval settings
eval_every_n_epochs = 1
eval_before_first_step = true
eval_micro_batch_size_per_gpu = 1
eval_gradient_accumulation_steps = 1

# misc settings
save_every_n_epochs = 1
checkpoint_every_n_minutes = 120
activation_checkpointing = true
partition_method = 'parameters'
save_dtype = 'bfloat16'
caching_batch_size = 1
steps_per_print = 1
```
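The schedule settings above translate into optimizer steps roughly as follows. This sketch assumes a single GPU (the config does not say how many were used), that one step consumes `micro_batch_size_per_gpu * gradient_accumulation_steps` images per GPU, and it ignores the partially filled batches that aspect-ratio bucketing can add. `training_schedule` is a hypothetical helper, and the 2,000-image dataset size is purely illustrative, not the size of this LoRA's dataset.

```python
import math


def training_schedule(num_images, num_repeats=1, micro_batch=16,
                      grad_accum=1, num_gpus=1, epochs=50, warmup_steps=100):
    """Rough step counts for the config above (hypothetical helper)."""
    effective_batch = micro_batch * grad_accum * num_gpus
    # Ceil: a final partial batch still costs one step.
    steps_per_epoch = math.ceil(num_images * num_repeats / effective_batch)
    total_steps = steps_per_epoch * epochs
    warmup_fraction = warmup_steps / total_steps
    return effective_batch, steps_per_epoch, total_steps, warmup_fraction


# Hypothetical dataset of 2,000 captioned images.
print(training_schedule(2000))
```

With numbers in this range, `warmup_steps = 100` covers only a small slice of training, so the learning rate is at its full value for almost the entire run.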

```toml
[model]
type = 'anima'
transformer_path = '/mnt/c/workspace/models/diffusion_models/anima-preview.safetensors'
vae_path = '/mnt/c/workspace/models/vae/qwen_image_vae.safetensors'
qwen_path = '../qwen0.6/Qwen3-0.6B/'
dtype = 'bfloat16'
timestep_sample_method = 'logit_normal'
sigmoid_scale = 1.0
shift = 3.0
```
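One way to read `timestep_sample_method`, `sigmoid_scale` and `shift` is: draw a standard normal sample, scale it and squash it through a sigmoid (a logit-normal distribution over (0, 1)), then apply a time shift that pushes samples toward larger t. The sketch below assumes the common SD3-style shift formula `shift * t / (1 + (shift - 1) * t)`; it is an illustration of that technique, not the trainer's code.

```python
import math
import random


def apply_shift(t, shift=3.0):
    # Assumed SD3-style time shift: monotonic on (0, 1); shift > 1 moves mass toward t = 1.
    return shift * t / (1.0 + (shift - 1.0) * t)


def sample_timestep(rng, sigmoid_scale=1.0, shift=3.0):
    z = rng.gauss(0.0, 1.0)                         # standard normal draw
    t = 1.0 / (1.0 + math.exp(-sigmoid_scale * z))  # logit-normal sample in (0, 1)
    return apply_shift(t, shift)


rng = random.Random(0)
samples = [sample_timestep(rng) for _ in range(10_000)]
print(f"mean shifted t: {sum(samples) / len(samples):.3f}")
```

Without the shift the samples are centered on 0.5; because the shift is monotonic, `shift = 3.0` moves the median to `apply_shift(0.5) = 0.75`. Under the usual flow-matching convention, where t = 1 is pure noise, this spends more of training on noisier timesteps.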

```toml
# Caption Processing Options (these keys still belong to the [model] table)
cache_text_embeddings = false

# NOTE: Requires cache_text_embeddings = false to work!
# For cached embeddings, use cache_shuffle_num in your dataset config instead.
shuffle_tags = true
tag_delimiter = ', '
keep_first_n_tags = 5
shuffle_keep_first_n = 5
tag_dropout_percent = 0.3
protected_tags_file = './protected_tags.txt'
nl_shuffle_sentences = false
nl_keep_first_sentence = true

# 'tags' 'nl' 'mixed'
caption_mode = 'mixed'
debug_caption_processing = false
debug_caption_interval = 1000
```
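The exact semantics of these caption options are not spelled out here, so the following sketch is a guess based on their names: keep the first `keep_first_n_tags` tags in place, drop each remaining tag with probability `tag_dropout_percent` unless it appears in the protected-tags list, then shuffle what is left. `process_tags` and the sample caption are hypothetical; `shuffle_keep_first_n` and the NL sentence options are not modeled.

```python
import random


def process_tags(caption, rng, delimiter=", ", keep_first_n=5,
                 dropout=0.3, protected=frozenset()):
    """Hypothetical tag dropout + shuffle, mirroring the option names above."""
    tags = [t.strip() for t in caption.split(delimiter.strip()) if t.strip()]
    head, tail = tags[:keep_first_n], tags[keep_first_n:]
    # Drop each non-protected tag with probability `dropout`.
    tail = [t for t in tail if t in protected or rng.random() >= dropout]
    rng.shuffle(tail)  # only the tags after the first N are shuffled
    return delimiter.join(head + tail)


caption = ("1girl, solo, hoshimachi suisei, hololive, blue hair, "
           "prism, refraction, dispersion, dark background")
print(process_tags(caption, random.Random(0), protected=frozenset({"refraction"})))
```

Keeping the leading tags fixed preserves the character and subject tags, while dropout and shuffling keep the model from relying on a fixed tag order for the lighting concepts.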

```toml
[adapter]
type = 'lora'
rank = 64
dtype = 'bfloat16'
```
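For a sense of scale: a LoRA adapter on a linear layer with weight shape `d_out x d_in` trains two low-rank factors, A (`rank x d_in`) and B (`d_out x rank`), so `rank = 64` adds `64 * (d_in + d_out)` trainable parameters per adapted layer. The 2048-wide layer below is a hypothetical size, not Anima's actual dimensions.

```python
def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    # A projects d_in -> rank, B projects rank -> d_out.
    return rank * d_in + d_out * rank


d = 2048  # hypothetical hidden size
added = lora_param_count(d, d, rank=64)
full = d * d
print(added, f"{added / full:.2%} of the full weight")
```

Doubling the rank doubles the adapter size, which is the main trade-off when picking `rank`.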

```toml
# AdamW from the optimi library is a good default since it automatically uses Kahan summation when training bfloat16 weights.
[optimizer]
type = 'adamw_optimi'
lr = 8e-5
betas = [0.9, 0.99]
weight_decay = 0.01
eps = 1e-8
```
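The optimizer comment refers to Kahan (compensated) summation. The scalar toy below shows why it matters for bfloat16 weights: a small update added directly to a bf16 value of 1.0 rounds away every time, while carrying the lost low-order part in a compensation term lets the updates accumulate. It assumes round-to-nearest-even bf16 storage for both the weight and the compensation term and illustrates the general technique only; it is not optimi's implementation.

```python
import struct


def to_bf16(x: float) -> float:
    """Round a float to the nearest bfloat16 value (round half to even)."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]  # float32 bit pattern
    bits += 0x7FFF + ((bits >> 16) & 1)  # round on the 16 bits bf16 drops
    bits &= 0xFFFF0000                   # keep sign, exponent, 7 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def accumulate(steps: int, delta: float, kahan: bool) -> float:
    w, comp = to_bf16(1.0), 0.0  # bf16 "weight" and compensation buffer
    for _ in range(steps):
        if kahan:
            y = to_bf16(delta + comp)        # re-add what was lost last time
            new_w = to_bf16(w + y)
            comp = to_bf16(y - (new_w - w))  # part of y that did not land in w
            w = new_w
        else:
            w = to_bf16(w + delta)           # 1.0 + 0.001 rounds back to 1.0
    return w


print("naive:", accumulate(1000, 0.001, kahan=False))
print("kahan:", accumulate(1000, 0.001, kahan=True))
```

The naive weight never leaves 1.0, while the compensated one ends close to the true sum of 2.0.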