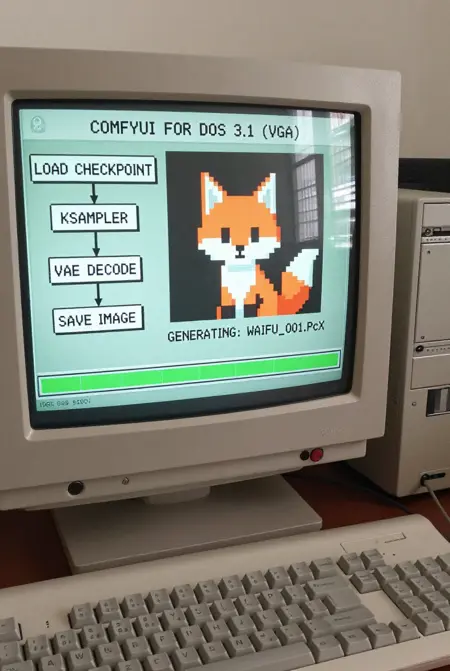

A workflow for Z-Image-Turbo focused on high-quality image styles and ease of use.

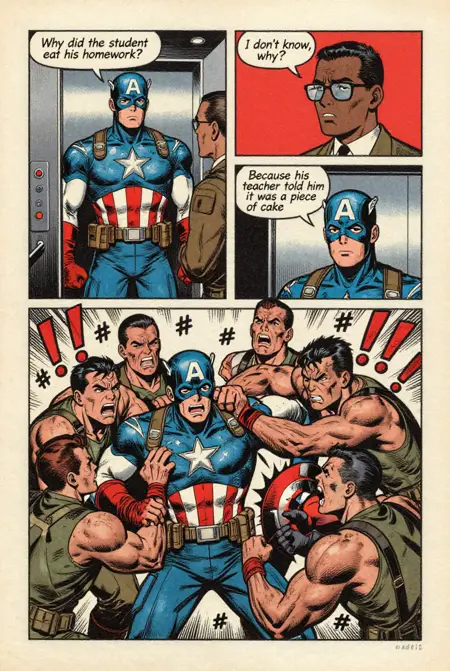

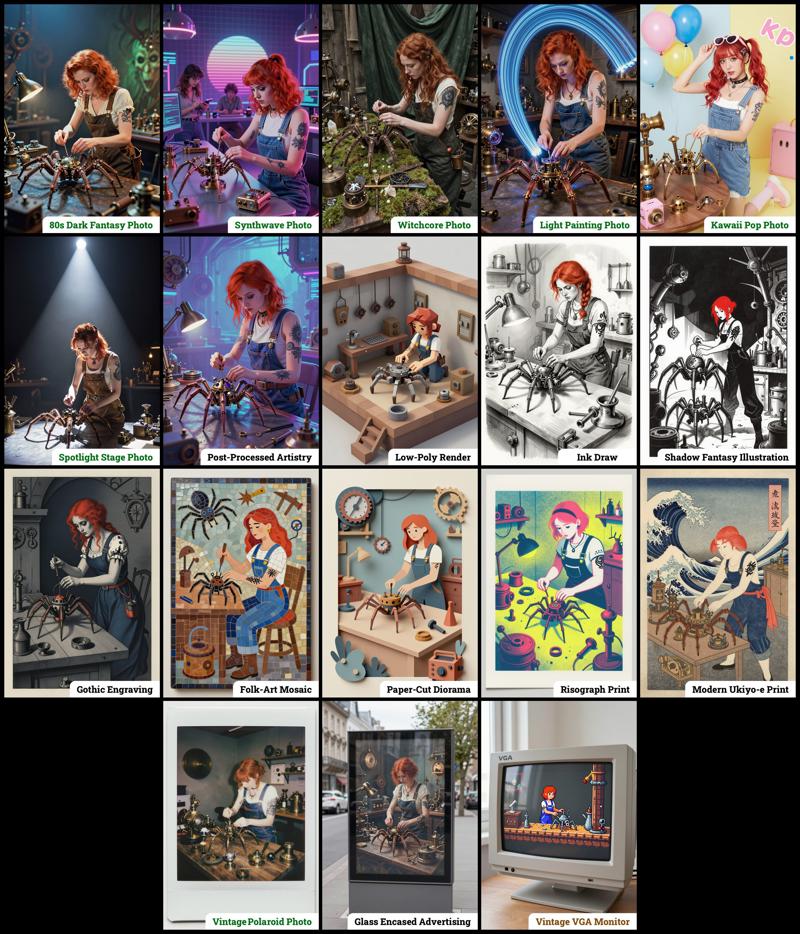

Style Selector: Choose from eighteen customizable image styles in each of the two workflow variants ("a" and "b").

Refiner: Improves final quality by performing a second pass.

Upscaler: Increases the resolution of any generated image by 50%.

Speed Options:

7 Steps Switch: Uses fewer steps while maintaining the quality.

Smaller Image Switch: Generates images at a lower resolution.

Extra Options:

Sampler Switch: Easily test generation with an alternative sampler.

Landscape Switch: Change to horizontal image generation with a single click.

Spicy Impact Booster: Adds a subtle spicy condiment to the prompt.

Preconfigured workflows for each checkpoint format (GGUF / SAFETENSORS).

Includes the "Power Lora Loader" node for loading multiple LoRAs.

The zip contains four workflow files:

amazing-z-image-a_GGUF: Recommended for GPUs with 12GB or less.

amazing-z-image-b_GGUF: Similar to workflow "a", but with more experimental styles.

amazing-z-image-a_SAFETENSORS: Based directly on the ComfyUI example.

amazing-z-image-b_SAFETENSORS: Similar to workflow "a", but with more experimental styles.

When using ComfyUI, you may encounter debates about the best checkpoint format. From my experience, GGUF quantized models provide a better balance between size and prompt response quality compared to SafeTensors versions. However, it's worth noting that ComfyUI includes optimizations that work more efficiently with SafeTensors files, which might make them preferable for some users despite their larger size. The optimal choice depends on factors like your ComfyUI version, PyTorch setup, CUDA configuration, GPU model, and available VRAM and RAM. To help you find the best fit for your system, I've included links to various checkpoint versions below.
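As a rough starting point, the choice can be sketched in Python. The only rule taken from this page is that the GGUF workflows are recommended for GPUs with 12 GB or less; suggesting SAFETENSORS above that threshold is an assumption, and `suggest_workflow` and `detect_vram_gb` are hypothetical helper names, not part of ComfyUI.

```python
from typing import Optional


def detect_vram_gb() -> Optional[float]:
    """Return the total VRAM of the first CUDA GPU in GiB, or None if unknown."""
    try:
        import torch  # optional: only needed for auto-detection
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(0).total_memory / 1024**3


def suggest_workflow(vram_gb: float, experimental_styles: bool = False) -> str:
    """Suggest one of the four workflow files in the zip.

    GGUF is recommended for GPUs with 12 GB or less (from this page).
    Picking SAFETENSORS above 12 GB is an assumption, not a recommendation
    made by the workflow author.
    """
    fmt = "GGUF" if vram_gb <= 12 else "SAFETENSORS"
    variant = "b" if experimental_styles else "a"
    return f"amazing-z-image-{variant}_{fmt}"


if __name__ == "__main__":
    vram = detect_vram_gb()
    if vram is None:
        print("Could not detect VRAM; start with amazing-z-image-a_GGUF.")
    else:
        print(f"{vram:.1f} GiB detected: try {suggest_workflow(vram)}")
```

Treat the result as a first guess only. The other factors listed above (ComfyUI version, PyTorch setup, CUDA configuration, system RAM) can change which format runs best on your machine.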

Required Checkpoint Files

For "amazing-z-image_GGUF":

z_image_turbo-Q5_K_S.gguf [5.19 GB]

Local directory: ComfyUI/models/diffusion_models/

Qwen3-4B.i1-Q5_K_S.gguf [2.82 GB]

Local directory: ComfyUI/models/text_encoders/

ae.safetensors [335 MB]

Local directory: ComfyUI/models/vae/

4x_foolhardy_Remacri.safetensors (for illustration refining) [66.9 MB]

Local directory: ComfyUI/models/upscale_models/

For "amazing-z-image_SAFETENSORS":

Based directly on the official ComfyUI example.

z_image_turbo_bf16.safetensors [12.3 GB]

Local directory: ComfyUI/models/diffusion_models/

qwen_3_4b.safetensors [8.04 GB]

Local directory: ComfyUI/models/text_encoders/

ae.safetensors [335 MB]

Local directory: ComfyUI/models/vae/

4x_foolhardy_Remacri.safetensors (for illustration refining) [66.9 MB]

Local directory: ComfyUI/models/upscale_models/
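Before loading a workflow, it can help to confirm that every file is where ComfyUI expects it. The following is a minimal sketch that uses the file names and directories listed above. The helper name `missing_files` and the default root path `ComfyUI` are assumptions; adjust the root to your own installation.

```python
from pathlib import Path

# File names and target directories as listed on this page,
# relative to the ComfyUI installation root.
REQUIRED_FILES = {
    "GGUF": {
        "models/diffusion_models": ["z_image_turbo-Q5_K_S.gguf"],
        "models/text_encoders": ["Qwen3-4B.i1-Q5_K_S.gguf"],
        "models/vae": ["ae.safetensors"],
        "models/upscale_models": ["4x_foolhardy_Remacri.safetensors"],
    },
    "SAFETENSORS": {
        "models/diffusion_models": ["z_image_turbo_bf16.safetensors"],
        "models/text_encoders": ["qwen_3_4b.safetensors"],
        "models/vae": ["ae.safetensors"],
        "models/upscale_models": ["4x_foolhardy_Remacri.safetensors"],
    },
}


def missing_files(comfy_root, fmt):
    """Return the paths of required files for `fmt` that are not present."""
    root = Path(comfy_root)
    missing = []
    for subdir, names in REQUIRED_FILES[fmt].items():
        for name in names:
            path = root / subdir / name
            if not path.is_file():
                missing.append(path)
    return missing


if __name__ == "__main__":
    # "ComfyUI" is a placeholder root; point it at your own install.
    for fmt in REQUIRED_FILES:
        gaps = missing_files("ComfyUI", fmt)
        status = "all files found" if not gaps else f"{len(gaps)} file(s) missing"
        print(f"{fmt}: {status}")
        for path in gaps:
            print(f"  missing: {path}")
```

The check only looks at file names and locations. It does not verify file sizes or contents, so a partial download would still pass.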

For version 3.x:

If, for some reason, you need to use the older version 3.x, you will also require the following additional file:

4x_Nickelback_70000G.safetensors [66.9 MB]

Local directory: ComfyUI/models/upscale_models/

Required Custom Nodes

The workflows require the following custom nodes, which can be installed via ComfyUI-Manager or downloaded from their repositories:

rgthree-comfy: https://github.com/rgthree/rgthree-comfy

ComfyUI-GGUF: https://github.com/city96/ComfyUI-GGUF
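If you prefer a manual install over ComfyUI-Manager, the sketch below lists the `git clone` commands for whichever of the two repositories are not yet present. It assumes the nodes live in `ComfyUI/custom_nodes/` under folder names matching the repository names; `clone_commands` is a hypothetical helper, and it only builds the commands without running them.

```python
from pathlib import Path

# Repository URLs as listed on this page.
CUSTOM_NODES = {
    "rgthree-comfy": "https://github.com/rgthree/rgthree-comfy",
    "ComfyUI-GGUF": "https://github.com/city96/ComfyUI-GGUF",
}


def clone_commands(comfy_root):
    """Build a git clone command for each custom node folder that is absent.

    Assumes each node is installed in custom_nodes/<repository name>.
    """
    nodes_dir = Path(comfy_root) / "custom_nodes"
    commands = []
    for name, url in CUSTOM_NODES.items():
        target = nodes_dir / name
        if not target.is_dir():
            commands.append(["git", "clone", url, str(target)])
    return commands


if __name__ == "__main__":
    # "ComfyUI" is a placeholder root; point it at your own install.
    for cmd in clone_commands("ComfyUI"):
        print(" ".join(cmd))
```

Each printed command can be pasted into a terminal, or passed to `subprocess.run(cmd, check=True)` if you want the script to clone directly. Restart ComfyUI afterwards so the new nodes are picked up.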

License

This project is licensed under the Unlicense.

Description

This version includes the following features and improvements:

Increased number of preset styles from eight to fourteen

Improved versions of some original styles from v1

Implementation of "SAMPLER SELECTOR" for easy switching

Updated workflow layout with optimized node arrangement

Comments (8)

That one time I had all nodes already installed. Very nice style selector and workflow.

The switches etc. don't work. Any idea why they aren't showing?

Absolute GAS!!!!!! 🔥🔥🔥🔥

Style Selector doesn't show any styles

Could be a compatibility issue with newer versions of ComfyUI. Try disabling Nodes 2.0 in ComfyUI and let me know if that helps.

This is an amazing workflow. So fun! Well done.

It's not just a workflow... It's so much more! Switching between styles is incredibly enjoyable and efficient.

I have a slight preference for Drone mode!

Doesn't work. I get errors with CLIPLoader.

Error(s) in loading state_dict for Llama2: and gives me a page of errors.