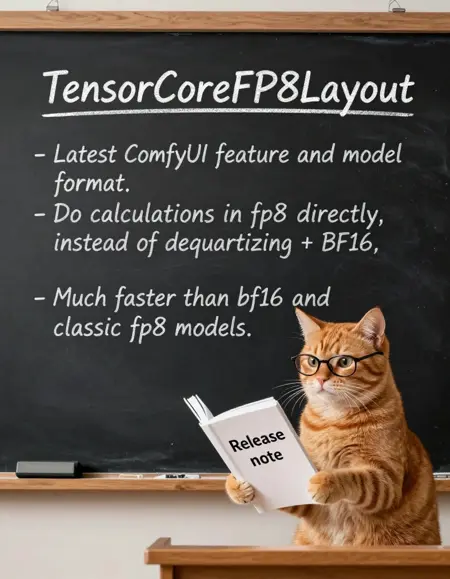

fp8 quantized Z-Image for ComfyUI using its quantization feature "TensorCoreFP8Layout".

Scaled fp8 weights: higher precision than pure fp8.

Also with "mixed precision". Important layers remain in bf16.

There is no "official" fp8 version for z-image from ComfyUI, so I made my own.

All credit belongs to the original model author. License is the same as the original model.

FYI: many people think fp8 models suffer a huge quality loss. That's because an "fp8 model" saved by ComfyUI is ... just a model with fp8 weights, and many creators made their fp8 models that way.

Normally, when people talk about an "fp8 model", they mean a quantized fp8 model, such as scaled fp8 or GGUF Q8, where the weights are "compressed".

If you see creators complaining about the poor quality of fp8 models saved by ComfyUI, send them this link, or make your own quantized fp8 model from bf16.

https://github.com/silveroxides/ComfyUI-QuantOps

I'm only sharing the tool; I don't use it myself. I use my own old script.
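To illustrate the difference between pure fp8 and scaled fp8 described above, here is a minimal sketch in plain Python. It is not the script used for these files and not ComfyUI's code: `round_to_e4m3`, `pure_fp8`, and `scaled_fp8` are hypothetical helpers that simulate float8_e4m3fn rounding, and a single per-tensor scale with saturation at 448 is assumed.

```python
import math

E4M3_MAX = 448.0      # largest finite float8_e4m3fn value
E4M3_MIN_EXP = -6     # exponent of the smallest normal value
E4M3_MANT_BITS = 3    # mantissa bits

def round_to_e4m3(x: float) -> float:
    """Simulate rounding x to the nearest float8_e4m3fn value (saturating)."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    mag = abs(x)
    # frexp gives mag = m * 2**e with 0.5 <= m < 1, so floor(log2(mag)) == e - 1.
    # Clamping the exponent makes tiny values fall on the subnormal grid.
    exp = max(math.frexp(mag)[1] - 1, E4M3_MIN_EXP)
    step = 2.0 ** (exp - E4M3_MANT_BITS)  # spacing of representable values here
    q = round(mag / step) * step          # Python's round() rounds ties to even
    return sign * min(q, E4M3_MAX)

def pure_fp8(weights):
    """Cast weights straight to fp8, with no scale (assumed 'pure fp8')."""
    return [round_to_e4m3(w) for w in weights]

def scaled_fp8(weights):
    """Stretch weights to the full fp8 range, round, then scale back."""
    amax = max(abs(w) for w in weights)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    dequantized = [round_to_e4m3(w / scale) * scale for w in weights]
    return dequantized, scale

def max_abs_error(original, approx):
    return max(abs(a - b) for a, b in zip(original, approx))

# Illustrative small weights, made up for this example.
weights = [0.0003, -0.0011, 0.0024, -0.0042, 0.0007, 0.0051]
pure_err = max_abs_error(weights, pure_fp8(weights))
scaled_err = max_abs_error(weights, scaled_fp8(weights)[0])
print(f"pure fp8 max error:   {pure_err:.6f}")
print(f"scaled fp8 max error: {scaled_err:.6f}")
```

Small weights land on the coarse low end of the fp8 grid when cast directly, while the scale factor moves them into the range where fp8 has more resolution. Real tools may use per-channel or per-block scales instead of one scale per tensor.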

Base

Quantized Z-Image, a.k.a. the "base" version of Z-Image.

https://huggingface.co/Tongyi-MAI/Z-Image

Note: no hardware fp8; all calculations are still done in bf16. This is intentional.

Rev 1.1: An updated version with better "mixed precision". More layers are kept in bf16, so the file is bigger. The previous version will be deleted.

Turbo

Quantized Z-Image-Turbo

https://huggingface.co/Tongyi-MAI/Z-Image-Turbo

Rev 1.1: An updated version with better "mixed precision". More layers are kept in bf16, so the file is bigger. No hardware fp8. The previous version will be deleted.

v1: It contains calibrated metadata for hardware fp8 linear layers. If your GPU supports it, ComfyUI will use hardware fp8 automatically, which should be a little bit faster. For more about hardware fp8 and its hardware requirements, see ComfyUI's TensorCoreFP8Layout.
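As a rough guide to which GPUs have hardware fp8, the sketch below checks the CUDA compute capability. It assumes fp8 tensor cores start at compute capability 8.9 (Ada, i.e. the RTX 40 series); `supports_hardware_fp8` is a hypothetical helper, and ComfyUI's own check may differ.

```python
def supports_hardware_fp8(capability: tuple[int, int]) -> bool:
    """Return True if a (major, minor) compute capability has fp8 tensor cores.

    Assumption: fp8 tensor cores are available from compute capability 8.9 up.
    With PyTorch installed, torch.cuda.get_device_capability() returns a
    (major, minor) tuple that can be passed in directly.
    """
    return capability >= (8, 9)

# Compute capabilities of some cards mentioned on this page.
cards = {
    "Tesla V100": (7, 0),
    "RTX 2080 Ti": (7, 5),
    "RTX 3070 Ti": (8, 6),
    "RTX 4060 Ti": (8, 9),
    "RTX 5090": (12, 0),
}

for name, capability in cards.items():
    status = "hardware fp8" if supports_hardware_fp8(capability) else "no hardware fp8"
    print(f"{name}: {status}")
```

On cards without hardware fp8 the model still loads and runs; the weights are just stored in fp8 and the computation is done in bf16 or fp16.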

Qwen3 4b

Quantized Qwen3 4b. Scaled fp8 + mixed precision. Early (embed_tokens, layers.[0-1]) and final (layers.[34-35]) layers are still in BF16.
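The layer split above can be sketched as a name-based filter. This is only an illustration: `keep_in_bf16` is a hypothetical helper, the parameter names follow the usual Hugging Face style (`model.layers.N...`) as an assumption, and it covers only the layers listed above, not any other tensors the real files may keep in BF16.

```python
import re

# Layer indices kept in BF16, as listed in the description above.
KEEP_BF16_LAYERS = {0, 1, 34, 35}

# Matches "layers.<index>." anywhere in a dotted parameter name.
_LAYER_RE = re.compile(r"(?:^|\.)layers\.(\d+)\.")

def keep_in_bf16(param_name: str) -> bool:
    """Return True if this parameter should stay in BF16 instead of scaled fp8."""
    if "embed_tokens" in param_name:
        return True
    match = _LAYER_RE.search(param_name)
    return match is not None and int(match.group(1)) in KEEP_BF16_LAYERS

# Hypothetical parameter names, for illustration only.
names = [
    "model.embed_tokens.weight",
    "model.layers.0.self_attn.q_proj.weight",
    "model.layers.17.mlp.down_proj.weight",
    "model.layers.35.mlp.up_proj.weight",
]
for name in names:
    print(name, "->", "bf16" if keep_in_bf16(name) else "scaled fp8")
```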

Comments (31)

Thank you for sharing the quantized Qwen3 4b. It works flawlessly with the base CLIP loader on CPU. My old 5800X is still holding strong :)

(12/9/2025): files reuploaded for ComfyUI v0.4.

ComfyUI v0.4 changed how it handles calibrated metadata. The Turbo and Qwen3 files have been reuploaded with updated metadata. Please redownload them to prevent a quality regression.

Could you post a workflow, please? :)

Hey, can you share the script you're using to make this? I'd really like to try it on the Josiefied Qwen3 I'm using with JoZiMagic, and compare results. [more details if you want, just ask]

No, it's not a simple script. It's a bunch of messy scripts, because I also need to sample the model to get the calibrated metadata. Not easy to share, at least for now.

If you just want to quantize a text encoder, I recommend GGUF. It is much more "efficient and precise" than ComfyUI's built-in fp8, and can be smaller.

Tbh, simply replacing the original text encoder with another version is counterproductive, because the DiT was not trained with it.

@reakaakasky JoZiMagic proves that your "tbh" is wrong; it even works amazingly well. JoZiMagic makes different images from stock Z-Image, and in my extensive testing (including blind surveys of others on Discord), it's sometimes better than stock [often tied, occasionally losing (10% or so of the time)]. Try it: grab a Josiefied Qwen3 4b GGUF Q8 model, and you'll see for yourself.

@reakaakasky Having messy scripts is better than not having them. It would be great if you posted them

With Z-IMG Anime Fine-tune and NEWBIE IMG (referencing Lumina) both likely releasing soon, it's hard to imagine that just six months ago I was still getting headaches over SDXL's rigid prompt requirements. Now, it feels like everything has been solved

I don't think there will be a Z-Image anime fine-tune. It's too problematic for a big company.

They will most likely use the anime data to train the editing version, for style consistency.

It's a good thing. There will be no legal issues.

And teaching the model to keep the art style consistent with reference images is, in theory, way easier than having it memorize thousands of art styles.

If the editing version has perfect art style consistency, then say goodbye to style LoRAs and fine-tunes. The model will be able to generate any art style, no longer limited to the training dataset. All we need to do is give the model style reference images.

I am very optimistic that this will happen.

I know this isn't exactly the topic, but is there any page, discussion, or resource for torch compile? I can't get it to work with either the Comfy native node or KJ advanced.

Honestly really glad I found this checkpoint, loads quite fast and makes great realism pics, on par with the OG bf16 version, thank you for making this checkpoint variant. I'm so used to A1111 that I gave up on comfy, but now with ZIT, I'm learning the workflows as I go, it seems really nice with this fp8 variant.

Is it possible to release an INT8 quantized version? This is necessary for my Tesla V100 and 2080 Ti setups. Thank you very much.

Can you please add a ComfyUI workflow? I tried downloading all the submitted generations into ComfyUI, none of them seems to have a workflow included.

https://civitai.com/models/2170134/z-image-turbo-workflow - this one works, just be sure to set the text encoder to the tensorcore one.

Stupid question: ComfyUI does an automatic "manual cast: torch.bfloat16" when it detects fp8 models. To circumvent that, one need only add --fast as a runtime argument. My stupid question is whether this is actually faster than other fp8 models, or whether it is just formatted in such a way that Comfy no longer does its automatic "manual" cast and is as fast as any other fp8 model run on hardware that can run fp8 precision natively, like the 40 and 50 series cards from Nvidia?

And before you ask why I don't test it myself: space is at a premium at the moment. My SSD is a bit too full at present, and I need to purge a few models before I download a new model or checkpoint.

@reakaakasky ah, so this would be activation quantized as opposed to a simple weight quant. thank you.

Share a workflow? None of the stuff I've tried seems to be faster. 50xx card here

https://civitai.com/models/2170134/z-image-turbo-workflow - this one works, just be sure to set the text encoder to the tensorcore one.

Last time I tried fp8 on Blackwell, ComfyUI wasn't doing the maths right, and the output was far worse than GGUF Q8. This was some months ago. For fp8 to have a benefit, the calculations need to be done in fp8, not fp16, but then the code has to be careful to handle the accumulated precision correctly. I don't think the ComfyUI coders are competent enough to handle issues of numerical analysis. Personally, as a Blackwell user, I see no current reason to move from GGUF. GGUF is well understood and well supported. These other compressed formats are not!

I can't get any speed-up. What am I doing wrong? I'm on a 4060 Ti, using the default ZIT workflow on the latest ComfyUI nightly. I tried --fast, --fp8_e4m3fn-unet, and every weight_dtype setting, but the console always says manual cast: torch.bfloat16. No matter what, the speed stays the same as with other fp8 or bf16 models.

Have you tried the --fast fp16_accumulation setting?

@MeMakeStuff Tried that: ~16s for 1024, 8 steps, res_multistep, exactly the same speed as with bf16 with no flags or anything. I'm still getting "model weight dtype torch.float8_e4m3fn, manual cast: torch.float16" when selecting the fp8_fast dtype, OR "model weight dtype torch.float16, manual cast: torch.float16" on default.

CUDA: 12.8

Torch: 2.7.1+cu128

Triton: 3.3.1

SageAttention: 2.2.0+cu128torch2.7.1.post3

Enabled fp16 accumulation

What am I missing?

The fp8 model works without any flags; ComfyUI will use it automatically.

"manual cast: torch.bfloat16": iirc this log line does not matter, as long as you see something like "detecting/using mixprecision layers".

"fp8 exactly the same speed as with bf16": normally an fp8 model is ~10% slower. If you are sure the speed is the same, then fp8 works, just not fast enough; you might need torch.compile.

@reakaakasky I'm seeing the "Detected/Using" and "Found quantization metadata version 1" messages, but the speed improvement is only ~30% compared to other FP8 models, and it matches BF16 performance. Is this expected? I thought this should give a 30%-80% speedup.

“It will not be faster, but it is still better than the old FP8 model” - I’m somewhat new to all of this, so I could have made some mistakes, but after testing it on my 3070 Ti (8GB) + 16GB, it actually was faster, and not by small margins. https://i.imgur.com/NzJiTyF.png - Nunchaku r256 (worst visual result of all), Q8, Q6, FP8 (yours), and random FP8 from HF. So big thanks! I might actually switch from Q8 to this; I need to do more visual comparisons.

Anyway, about TorchCompileModelAdvanced: it should be after LoRA but before ModelSamplingAuraFlow, correct? And should I use the default settings (inductor / false / default / auto / true / 64 / false)? If so, then I think it unfortunately doesn't work in my case (meaning the speeds are all the same as before). RIP!

Thanks once again!

I mean the sampling speed will not be faster. It might load faster, but that depends on your CPU etc.

I'm not sure about RTX 30xx, so I don't know which model is faster.

If you prefer the best quality, GGUF Q8 is the best.

gguf q8 (more complicated) > scaled fp8 >> pure fp8 >> Nunchaku (which is hardware q4, should be 3x faster, it supports 30xx iirc)

@reakaakasky There is an Imgur link in my main comment with the speed comparisons; Nunchaku r256 loses against all of them while having the worst visual quality too. Either something is broken, or it isn't beneficial for my setup.

About Torch: so the 1st gen should be faster. Well, it isn't at all in my case, but that could be my PC. Thanks anyway!

In my FACE editing test it lost about 25% quality. When I went back to BF16, the faces got very very good quality again so I can verify that this does not work well with FACE DETAILER workflow.