About Flux.1-krea-dev

https://docs.comfy.org/tutorials/flux/flux1-krea-dev

About Flux.1-krea-dev (nunchaku version)

https://huggingface.co/nunchaku-tech/nunchaku-flux.1-krea-dev

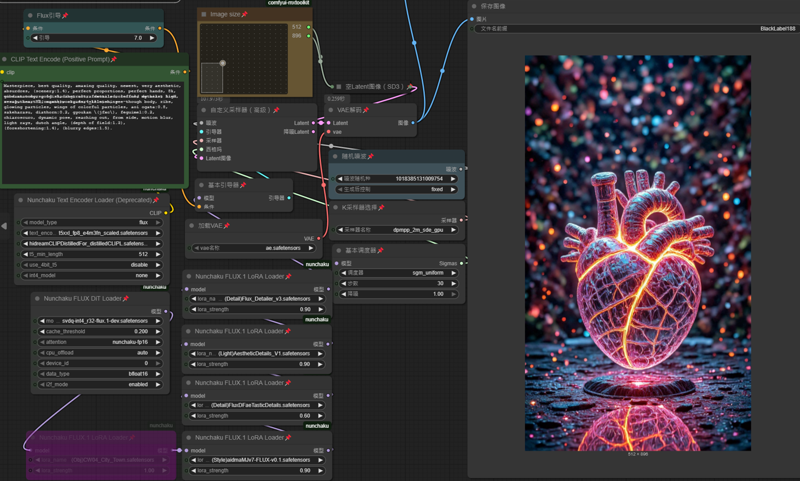

Discover the cutting-edge power of nunchaku-flux.1-krea-dev, the latest advance in text-to-image AI models! Built on the robust FLUX.1-dev base, this model delivers stunning, high-quality image generation with optimized efficiency through innovative 4-bit quantization technology.

The included svdq-fp4_r32-flux.1-krea-dev.safetensors file targets NVIDIA RTX 50 (Blackwell) GPUs, and an int4 build covers RTX 20 / 30 / 40 cards; both aim for fast inference while keeping image fidelity high. Whether you’re a digital artist, developer, or AI enthusiast, this model helps you create rich visuals with little effort.

Download now to unlock:

Flux Krea is fully compatible with LoRAs designed for Flux Dev.

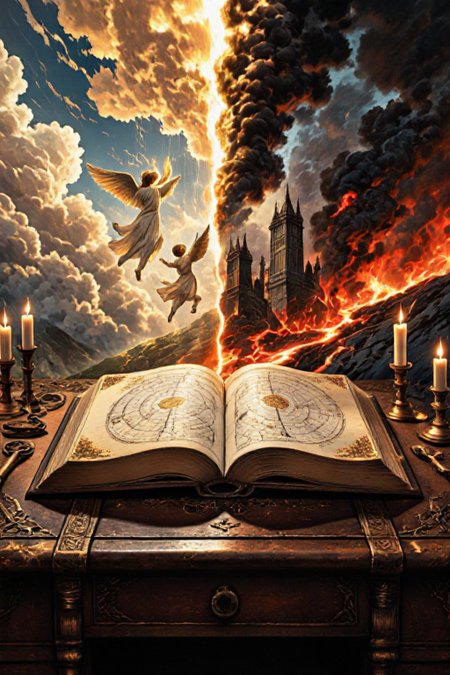

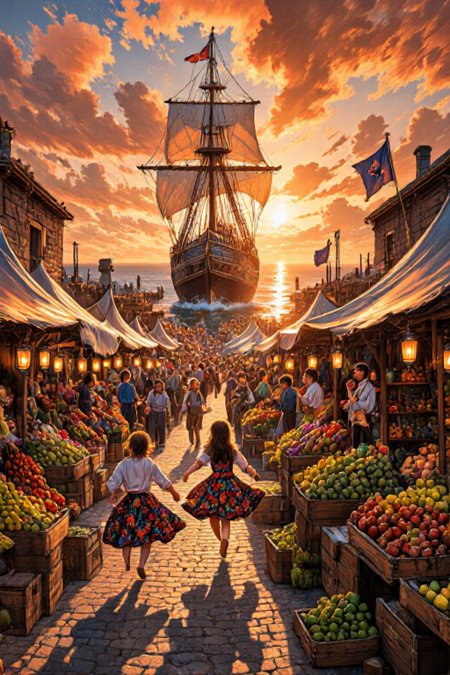

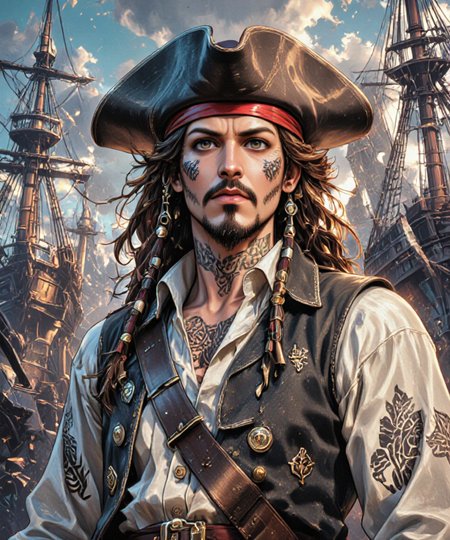

State-of-the-art image synthesis tuned for creative flexibility

Efficient performance optimized for Blackwell GPU architectures

Robust open-source foundation with cutting edge quantization research backing

Ready integration with Diffusers and ComfyUI for seamless workflow

Elevate your AI-generated art and media projects with nunchaku-flux.1-krea-dev: innovation meets artistry in every pixel.

Flux.1-krea-dev release date: 2025.7.31

Requirements:

Minimum VRAM is about 6 GB (such as an RTX 2060 or 3060)

Recommended VRAM is 10 GB or more for better performance and stability

Settings like CPU offload and smaller batch sizes help when VRAM is limited

Minimum system RAM is about 16 GB

Recommended system RAM: 32 GB
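
As a rough illustration of the requirements above, here is a minimal Python sketch that classifies a machine against the listed thresholds. The function names `tier` and `check_machine` are hypothetical helpers made up for this example; the numbers are the approximate values stated above, and you supply the VRAM and RAM figures yourself.

```python
# Thresholds in GB, taken from the requirements listed above (approximate values).
VRAM_MIN_GB, VRAM_RECOMMENDED_GB = 6, 10
RAM_MIN_GB, RAM_RECOMMENDED_GB = 16, 32


def tier(available_gb, minimum_gb, recommended_gb):
    """Classify an amount of memory against a minimum and a recommended value."""
    if available_gb < minimum_gb:
        return "below minimum"
    if available_gb < recommended_gb:
        return "minimum"
    return "recommended"


def check_machine(vram_gb, ram_gb):
    """Hypothetical helper: report the tier for both VRAM and system RAM."""
    return {
        "vram": tier(vram_gb, VRAM_MIN_GB, VRAM_RECOMMENDED_GB),
        "ram": tier(ram_gb, RAM_MIN_GB, RAM_RECOMMENDED_GB),
    }


if __name__ == "__main__":
    # Example: an 8 GB card with 32 GB of system RAM.
    print(check_machine(vram_gb=8, ram_gb=32))
```

A machine in the "minimum" tier for VRAM is where CPU offload and smaller batch sizes are most likely to help.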

1) Download the Flux.1-krea-dev (nunchaku version)

https://huggingface.co/nunchaku-tech/nunchaku-flux.1-krea-dev/tree/main

----

Nvidia RTX50

svdq-fp4_r32-flux.1-krea-dev.safetensors (please download it from the Hugging Face page linked above)

----

Nvidia RTX 20 / 30 / 40

svdq-int4_r32-flux.1-krea-dev.safetensors (Download)

This file is also uploaded to this post
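
The choice between the two files can be sketched in Python as below. This is only an illustration of the rule stated above (fp4 for RTX 50, int4 for RTX 20 / 30 / 40); `pick_checkpoint` is a hypothetical helper, and parsing the series out of a GPU name string with a regular expression is an assumption made for the example, not something the Nunchaku project prescribes.

```python
import re

FP4_FILE = "svdq-fp4_r32-flux.1-krea-dev.safetensors"    # Nvidia RTX 50
INT4_FILE = "svdq-int4_r32-flux.1-krea-dev.safetensors"  # Nvidia RTX 20 / 30 / 40


def pick_checkpoint(gpu_name):
    """Return the file name for a GPU name such as 'NVIDIA GeForce RTX 3090'.

    Returns None when the name does not look like an RTX 20/30/40/50 card.
    """
    match = re.search(r"RTX\s*(\d{2})\d{2}", gpu_name, re.IGNORECASE)
    if match is None:
        return None
    series = int(match.group(1))  # e.g. 30 for "RTX 3090"
    if series == 50:
        return FP4_FILE
    if series in (20, 30, 40):
        return INT4_FILE
    return None


if __name__ == "__main__":
    print(pick_checkpoint("NVIDIA GeForce RTX 3090"))
```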

---

path: \ComfyUI\models\diffusion_models\svdq-int4_r32-flux.1-krea-dev.safetensors

---

Two text_encoders are recommended to make it easier for you to create masterpieces; a third is optional

text_encoders 1 : t5xxl_fp8_e4m3fn_scaled (same as Flux Dev)

text_encoders 2 : clip_l (you can use this in place of the HiDream CLIP)

text_encoders 3 : Long CLIP - Distilled for Flux (Download) (471.71MB), optional

path : \ComfyUI\models\text_encoders\
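
To double-check that the files ended up in the folders named above, a small Python sketch like the following can list anything that is missing. `missing_files` and the `EXPECTED` table are hypothetical names for this example, and the `.safetensors` extension on the two text encoder file names is an assumption; adjust the names to match what you actually downloaded.

```python
from pathlib import Path

# Folder names come from the paths above. The text encoder file names assume
# a ".safetensors" extension, which is an assumption made for this sketch.
EXPECTED = {
    "diffusion_models": ["svdq-int4_r32-flux.1-krea-dev.safetensors"],
    "text_encoders": [
        "t5xxl_fp8_e4m3fn_scaled.safetensors",
        "clip_l.safetensors",
    ],
}


def missing_files(comfy_root, expected=EXPECTED):
    """Return the expected model paths that do not exist under comfy_root/models."""
    models_dir = Path(comfy_root) / "models"
    missing = []
    for folder, names in expected.items():
        for name in names:
            path = models_dir / folder / name
            if not path.is_file():
                missing.append(path)
    return missing


if __name__ == "__main__":
    # "ComfyUI" here is a placeholder for your own install folder.
    for path in missing_files("ComfyUI"):
        print("missing:", path)
```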

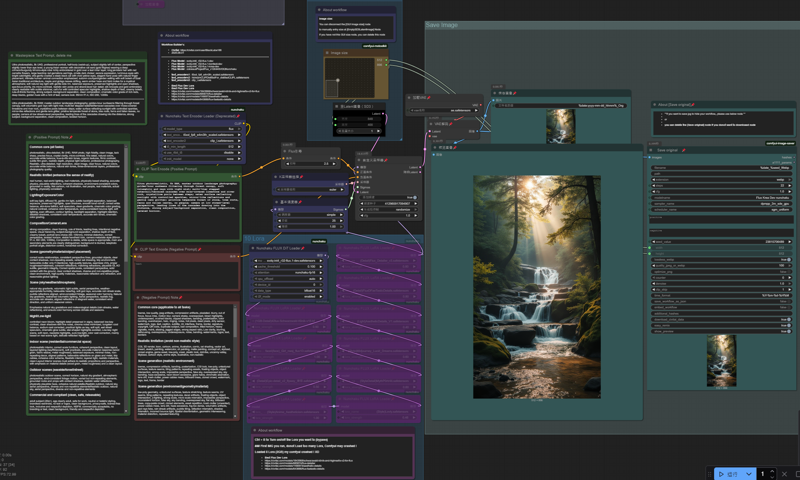

2) Download my workflow for <Flux.1 (nunchaku version)>

{ Workflow: about 20 seconds per image }

Flux Dev / Krea 20 sec Workflow v1.0 (2025.08.03)

Flux Dev / Krea 20 sec Workflow v2.0 (New 2025.08.13)

Flux Kontext 20 sec workflow v1.0 (2025.08.13)

workflow name : 1-nunchaku-flux.1-dev(T2I) 10Lora-Pro.json v2.0 (with prompt ChatGPT 5.0)

workflow name : 1-nunchaku-flux.1-Kontext(I2I)_v1.json

workflow name : 1-nunchaku-flux.1-Krea-dev(T2I) 5Lora.json (Old version but simple)

LoRAs I use in this workflow

https://civarchive.com/collections/11428250 (check my Flux Lora collection)

https://civarchive.com/models/685874/flux-detailer

https://civarchive.com/models/1166973/aesthetic-details

https://civarchive.com/models/643886/flux-faetastic-details

https://civarchive.com/models/1470162/midjourney-v7-meets-flux-illustrious

https://civarchive.com/models/1371216/realanime

path : ....\ComfyUI\models\loras\

--- --- --- --- --- --- --- --- --- --- --- ---

Advanced text_encoders are recommended to make it easier for you to create masterpieces

text_encoders 1 : t5xxl_fp8_e4m3fn_scaled (same as Flux Dev)

text_encoders 2 : HiDream CLIP - Distilled for Flux (Download) (471.71MB), optional

text_encoders 3 : clip_l

(You can use the Flux Dev text encoders)

path : ....\ComfyUI\models\text_encoders\

Description

Flux.1-krea-dev (nunchaku fp4)

Makes it easier for you to create masterpiece artworks

Minimum VRAM requirement: 6 GB or more

System RAM: 16 GB or more


Comments (26)

Mega good, thanks a lot. I wanted to create an nf4 quant myself but had issues with DeepCompressor on Runpod.

Having issues? Is it about my workflow nodes?

BlackLabe188 ChatGPT:

No, I had some trouble with DeepCompressor — I also wanted to create an NF4 quant.

By the way, how did you do your NF quant? Also with DeepCompressor or some other tool?

denrakeiw To be honest, this is FP4; there were no other options I could choose on the upload page. And this base model is not mine: the nunchaku team created it, I just uploaded it.

denrakeiw If you are trying to create a base model (FP4 / SVDQ), you can ask him; I believe he is happy to share: https://civitai.com/user/Afroman4peace

Got RTX3090 and this worked super fast, had no idea this speed exists, thanks.

It's great to hear that! Please feel free to use [add post] to upload your artwork to this page.

I look forward to seeing your art here ❤️ Thank you for your feedback 👍

LoRAs I use in this workflow

https://civitai.com/collections/11428250 (check my Flux Lora collection)

https://civitai.com/models/685874/flux-detailer

https://civitai.com/models/1166973/aesthetic-details

https://civitai.com/models/643886/flux-faetastic-details

https://civitai.com/models/1470162/midjourney-v7-meets-flux-illustrious

https://civitai.com/models/1371216/realanime

path : ....\ComfyUI\models\loras\

--- --- --- --- --- --- --- --- --- --- --- ---

Advanced text_encoders are recommended to make it easier for you to create masterpieces

text_encoders 1 : t5xxl_fp8_e4m3fn_scaled (same as Flux Dev)

text_encoders 2 : Distilled CLIP_L for Flux (Download), optional

text_encoders 3 : clip_l

(You can use the Flux Dev text encoders)

path : ....\ComfyUI\models\text_encoders\

steps and cfg? any clue?

I only get blurry or highly exposed pictures with this workflow, I tried the different text encoders but no luck... anyone know why this happens ? Tried with and without loras, different resolution, nothing seems to work :(

Disable all LoRAs and lower the Flux cfg from 7 to 2.5.

Upload a screenshot and I can help check.

BlackLabe188 I changed it to 2.5 and disabled all loras but it's still the same https://imgur.com/a/4v3nCFT

CRAZYAI4U I meant a screenshot of the workflow details.

BlackLabe188 https://imgur.com/a/f3ANDut

CRAZYAI4U Try changing text_encoder 2 from (longCLIPDis...) to (clip_l),

or under (svdq-int4) change cache_threshold from 0.100 to 0.0.

cache_threshold speeds up generation time; values range from 0.0 to 0.25.

BlackLabe188 https://imgur.com/a/yvl469E still the same for me :/

CRAZYAI4U Change these two, (dpmpp_2m_sde_gpu) and (sgm_uniform), to something else,

e.g. euler & simple

BlackLabe188 That did the trick now it works, many thanks for answering and fixing it for me <3
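
For anyone hitting the same blurry or overexposed output, the suggestions from this thread can be summarized as a small Python checklist. This is only a sketch: `suggest_fixes` and the dictionary keys (`loras_enabled`, `cfg`, `cache_threshold`, `sampler`, `scheduler`) are hypothetical names invented for the example and are not ComfyUI or Nunchaku API; the values are the ones mentioned in the replies above.

```python
def suggest_fixes(settings):
    """Return the thread's troubleshooting tips that apply to the given settings.

    `settings` is a plain dict with hypothetical keys; missing keys are skipped.
    """
    tips = []
    if settings.get("loras_enabled"):
        tips.append("disable all LoRAs")
    if settings.get("cfg", 2.5) > 2.5:
        tips.append("lower the Flux cfg to 2.5")
    if settings.get("cache_threshold", 0.0) > 0.0:
        tips.append("set cache_threshold to 0.0")
    combo = (settings.get("sampler"), settings.get("scheduler"))
    if combo == ("dpmpp_2m_sde_gpu", "sgm_uniform"):
        tips.append("switch sampler / scheduler to euler / simple")
    return tips


if __name__ == "__main__":
    # Settings resembling the ones reported in the thread before the fix.
    reported = {
        "loras_enabled": True,
        "cfg": 7,
        "cache_threshold": 0.1,
        "sampler": "dpmpp_2m_sde_gpu",
        "scheduler": "sgm_uniform",
    }
    for tip in suggest_fixes(reported):
        print("-", tip)
```

In this thread it was the last change, switching to euler and simple, that resolved the problem.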

where to download nodes for:

NunchakuFluxDiTLoader

NunchakuTextEncoderLoader

NunchakuFluxLoraLoader

comfyui-manager - does not find them

This ^

Your Nunchaku installation did not succeed. Please look for a tutorial on YouTube (search: install flux nunchaku).

They are updating it every day : )

https://nunchaku.tech/docs/nunchaku/installation/installation.html

Every time I update some important plugins, it almost always messes up my ComfyUI.

I took an hour to reinstall a lot of things (with ChatGPT).
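
A quick way to see whether the Nunchaku backend is visible to Python is the sketch below. It assumes the package's import name is `nunchaku` (an assumption; check the installation guide linked above), and `is_installed` is a hypothetical helper. Run it with the same Python interpreter that ComfyUI uses, otherwise the result says nothing about your ComfyUI environment.

```python
import importlib.util


def is_installed(module_name):
    """Return True if a top-level module with this name can be found."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    # "nunchaku" is assumed to be the import name of the backend package.
    if is_installed("nunchaku"):
        print("nunchaku found in this Python environment")
    else:
        print("nunchaku not found; see the installation guide")
```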
