.

★Training a Wan2.2 LoRA with 16GB of VRAM

.

!!! No download required! This is a technical showcase! !!!

.

→→→Original model (source of the training dataset and configurations)→→→ https://civitai.com/models/1944129?modelVersionId=2200388

.

●Differences from the original:

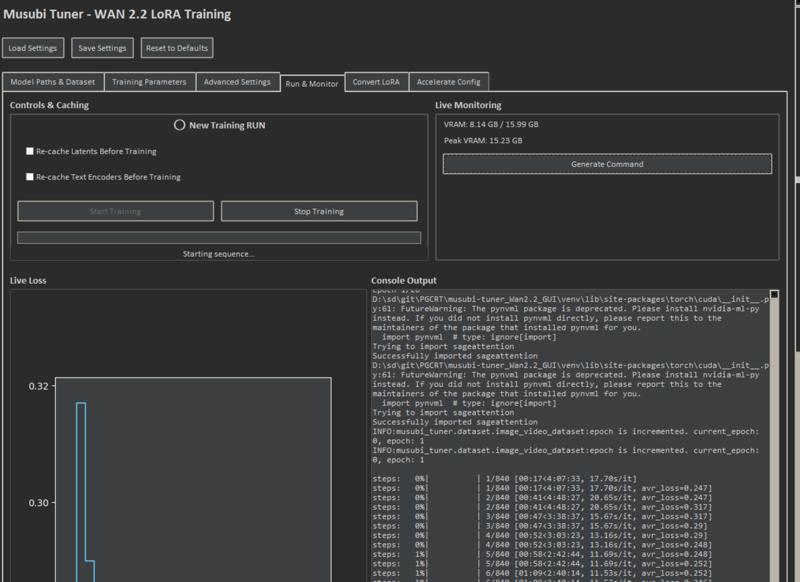

・GPU: RTX 4070 Ti Super 16GB / system RAM: ??GB

・This is my first time training a LoRA (really, the very first run)

・Skipped the "Replace musubi_tuner_gui.py" step (I forgot to do it, so i2v didn't work)

・Trained t2v instead (unchecked "I2V Training")

・Changed the model from i2v to t2v as well

・Changed the paths of the other models to match my environment

.

・Installed Triton and SageAttention2 (compared with SDPA, I'm not sure how much difference it makes; I think xFormers would work well too.)

pip install -U "triton-windows<3.3"

python -s -m pip install .\triton-3.2.0-cp312-cp312-win_amd??.whl

→→→Guide is here→→→https://civitai.com/articles/12848
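A quick way to confirm the install worked is to ask Python whether it can find the packages. This is only a minimal sketch: the module names (`triton`, `sageattention`, `xformers`) are my assumption based on the usual import names, and it only checks that the packages are present, not that they actually run on your GPU. Run it with the same Python environment you use for training.

```python
import importlib.util

# Module names are assumptions based on the packages' usual import names:
# triton-windows -> "triton", SageAttention -> "sageattention", xFormers -> "xformers".
OPTIONAL_MODULES = ("triton", "sageattention", "xformers")


def find_installed(modules=OPTIONAL_MODULES):
    """Return {module_name: True/False} without importing the modules."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


# Print one line per module so a missing package is easy to spot.
for name, found in find_installed().items():
    print(f"{name}: {'installed' if found else 'not found'}")
```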

.

・Blocks to Swap (as far as I know, this model has 40 blocks)

35 (the original uses 10)

.

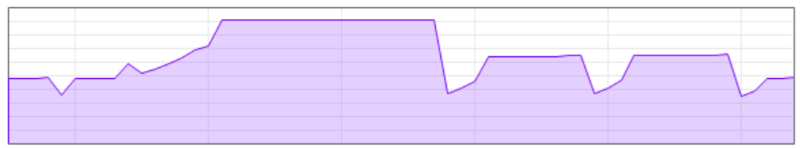

●VRAM required for training

For about 30% of the training time, VRAM usage was at 15GB; for the rest, it was around 10GB.
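I got those numbers just by watching the usage, but the same kind of figure could be measured. The sketch below assumes `nvidia-smi` is on your PATH; the helper names `read_vram_used_mib` and `share_above` are hypothetical ones I made up for illustration.

```python
import subprocess


def read_vram_used_mib(gpu_index=0):
    """Read the current VRAM usage in MiB from nvidia-smi (must be on PATH)."""
    out = subprocess.run(
        ["nvidia-smi", f"--id={gpu_index}",
         "--query-gpu=memory.used", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return int(out.strip().splitlines()[0])


def share_above(samples_mib, threshold_gib):
    """Fraction of samples at or above threshold_gib (1 GiB = 1024 MiB)."""
    if not samples_mib:
        return 0.0
    limit = threshold_gib * 1024
    return sum(1 for s in samples_mib if s >= limit) / len(samples_mib)
```

To use it, call `read_vram_used_mib()` every few seconds while training runs in another window, append each value to a list, and then pass the list to `share_above(samples, 14)` to see how much of the time usage sat near the peak.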

.

While writing this, I set Blocks to Swap to 40 to see whether I could train within 12GB, but I got an error:

"AssertionError: Cannot swap more than 39 blocks. Requested 40 blocks to swap."

.
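The error message suggests the limit is one less than the number of blocks. Here is a small sketch of that rule as I understand it. It is a hypothetical re-creation, not the actual musubi-tuner code; the block count of 40 and the "at least one block stays on the GPU" reading are inferred from this post and from the message above.

```python
NUM_BLOCKS = 40  # block count as stated in this post ("as far as I know")


def check_blocks_to_swap(requested, num_blocks=NUM_BLOCKS):
    """Hypothetical re-creation of the limit implied by the error message."""
    # Inferred from the message: at least one block has to stay on the GPU.
    max_swappable = num_blocks - 1
    assert requested <= max_swappable, (
        f"Cannot swap more than {max_swappable} blocks. "
        f"Requested {requested} blocks to swap."
    )
    # Number of blocks that stay resident in VRAM.
    return num_blocks - requested


print(check_blocks_to_swap(10))  # the original setting
print(check_blocks_to_swap(35))  # my setting
```

Under these assumptions, 39 would be the largest value that passes the check. I have not tested whether 39 actually fits in 12GB.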

●Time required for training

The high-noise model took 2 hours and 19 minutes, and the low-noise model took 2 hours and 17 minutes.

I trained for 20 epochs, but results were already visible after 5 epochs.

So if I do it right, I can train both in just over an hour. (Wow, I can't believe that!)
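Here is the rough arithmetic behind that estimate. It assumes training time scales linearly with the number of epochs and that the high and low models are trained one after the other; `minutes_for` is just a hypothetical helper name.

```python
def minutes_for(epochs_needed, epochs_run, minutes_run):
    """Scale a measured run time linearly by epoch count (an assumption)."""
    return minutes_run * epochs_needed / epochs_run


high = minutes_for(5, 20, 2 * 60 + 19)  # measured: 2 h 19 min for 20 epochs
low = minutes_for(5, 20, 2 * 60 + 17)   # measured: 2 h 17 min for 20 epochs
total = high + low

print(f"high: {high} min, low: {low} min, total: {total} min")
```

That works out to about 35 minutes per model, or 69 minutes for both.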
