Klein 9B

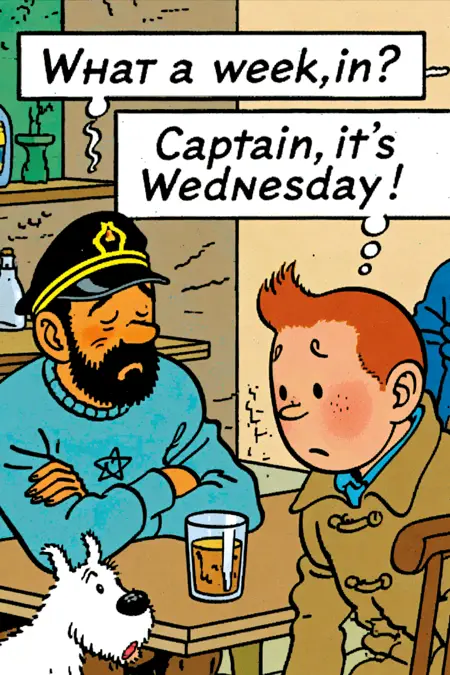

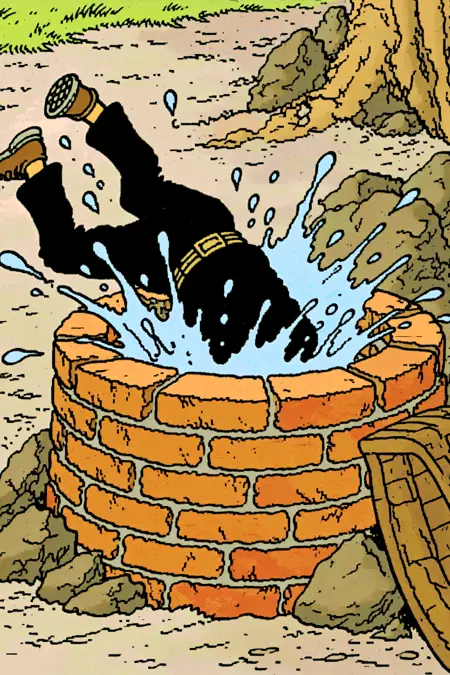

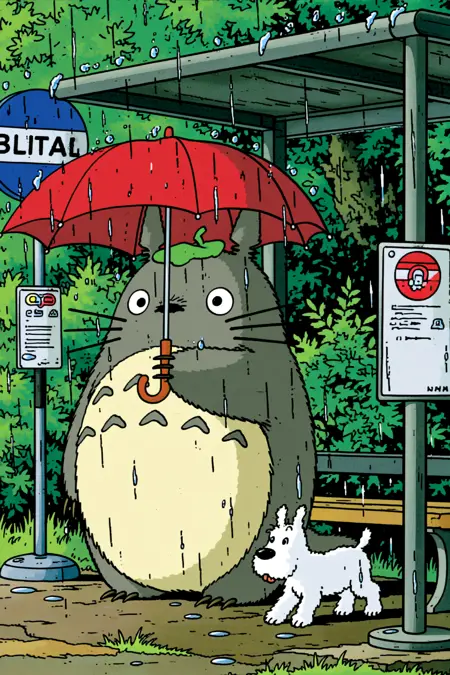

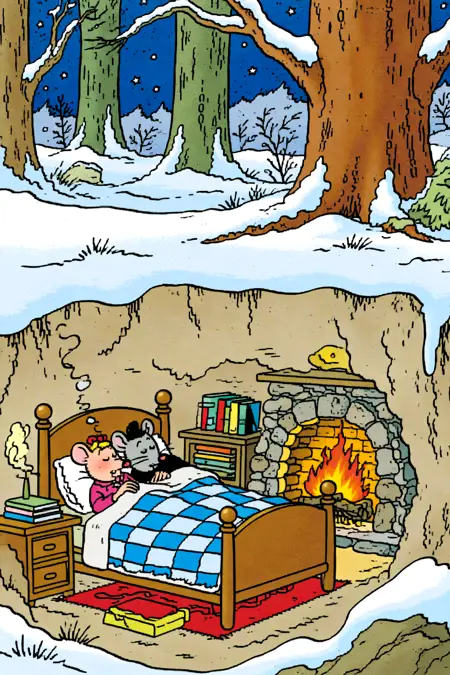

Showcase images:

Distilled model

768x1152

Euler / normal / 4 steps / CFG 1

LoRA strength of 0.8-1

Qwen:

v2: Retrained on a completely new, higher-quality dataset (the same one used to train the ZIT version)

ZIT:

Showcase image settings:

LoRA strength 0.7-0.8

DDIM & Beta

10 steps & 1 CFG

1056x1584

'comic style image'

Long Markdown type prompt for some of them

Flux:

Trained on 30 images handpicked from the comic The Adventures of Tintin

It's a style LoRA, so it doesn't really know the characters (except maybe Tintin and his dog, which Flux already knew a bit).

Trigger phrase: "a bande dessinée style image of", with a weight of around 0.8-1 (for Qwen, use 1).
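The showcase settings listed above can be collected into a small lookup table. This is only a minimal sketch: the `ShowcaseSettings` class, the `SHOWCASE` dictionary and the `clamp_strength` helper are hypothetical names made up for illustration, and reading "DDIM & Beta" as sampler DDIM with scheduler Beta is an assumption. Only Klein 9B and ZIT are included because they are the only versions with full sampler settings listed.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ShowcaseSettings:
    """Showcase generation settings as listed on this page (hypothetical container)."""
    sampler: str
    scheduler: str
    steps: int
    cfg: float
    resolution: Tuple[int, int]          # (width, height)
    lora_strength: Tuple[float, float]   # (min, max) suggested strength


# Values copied from the page; the sampler/scheduler split for ZIT is an assumption.
SHOWCASE = {
    "Klein 9B": ShowcaseSettings("euler", "normal", 4, 1.0, (768, 1152), (0.8, 1.0)),
    "ZIT": ShowcaseSettings("ddim", "beta", 10, 1.0, (1056, 1584), (0.7, 0.8)),
}


def clamp_strength(model: str, requested: float) -> float:
    """Clamp a requested LoRA strength into the range suggested for that model."""
    low, high = SHOWCASE[model].lora_strength
    return max(low, min(high, requested))


if __name__ == "__main__":
    for name, settings in SHOWCASE.items():
        print(name, settings)
    print(clamp_strength("ZIT", 1.0))
```

For example, `clamp_strength("ZIT", 1.0)` pulls a requested strength of 1.0 down to the upper end of the range suggested for the ZIT version.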

Comments (8)

Thank you!!! I love your work!

I just spent a couple of nights trying to train one for another comic, but the results didn't come together at all. I used AI-Toolkit. They said not to use more than 30 images, but I used 33. My images had detailed descriptions, because I wanted it to learn how to swap out certain parts, like replacing some of the old clothing fashion with newer fashion.

May I ask what you used to train this and what your settings were? How many dataset images did you have? How complex were your captions? I understand if you don't want to spend time answering, but thank you for considering it!

So for Flux it was better to stay around 20-30 images, but I don't believe that's true for Qwen, and you can use way more (even >100). My captions are usually one paragraph. Here is an example of a caption from my Tintin dataset: https://i.imgur.com/ELsZ6Nw.png

This one was trained online on tensor.art, so I think they use kohya_ss on the backend (my settings: https://imgur.com/a/5AX41J9)

Ostris made a video on ai-toolkit. I've already trained Qwen LoRAs following this video and it worked well: https://www.youtube.com/watch?v=MUint0drzPk

If I had to guess, maybe your LR was too low or too high?

Also, I'm still experimenting with Qwen, and for me it's way harder than with Flux to really get the style you want.

@Yofaraway Thank you. I was following that video from Ostris. That's where he said to use 20-30 images, and not much more than that. My learning rate started at the default of 0.0001, but nothing even started to change until around 1,750 steps. Is that normal? In the Ostris video, he changed his settings when he wasn't getting visible differences by 500 or 750 steps, so I used his 0.0002 LR setting, and got changes at the same stage he did.

I'm guessing the only change I need to make is more dataset images, which I have. I curated pretty hard after watching that video. Thanks for confirming that piece.

@Yofaraway Oh, another difference between our approaches. I also followed Ostris's suggestion, and didn't use a trigger phrase and didn't train one into the captions. That's something you did, so maybe I should try it too.

@Jellai Yeah, start with a 0.0002 LR, that will make all the difference. The dataset size and the trigger words kind of depend on what you're training, but they won't affect your results much (compared to the LR).

@Yofaraway I did 0.0002 on my second attempt earlier in the week, but yeah, I think I just need a bigger dataset. Thanks for the info.

@Jellai And maybe you just need to train for way more steps.