♦ The all-in-one fp8 checkpoint is available with either an fp8 or an fp16 T5XXL encoder. In the downloads, choose "Full model fp16" for the fp16 T5XXL, or "Full model fp8" for the fp8 T5XXL.

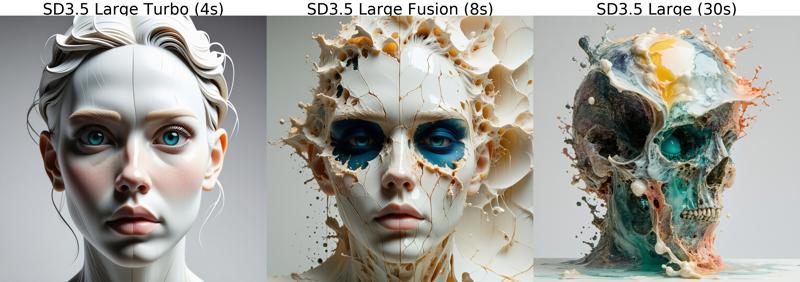

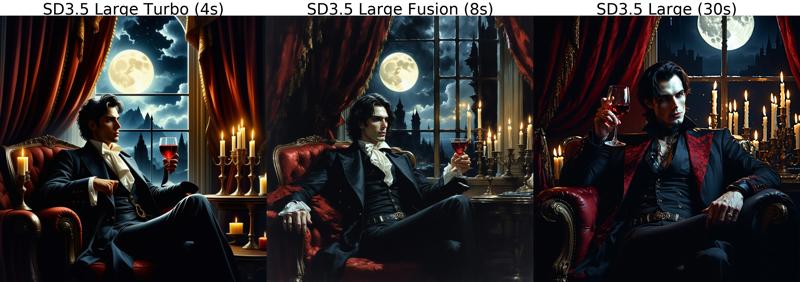

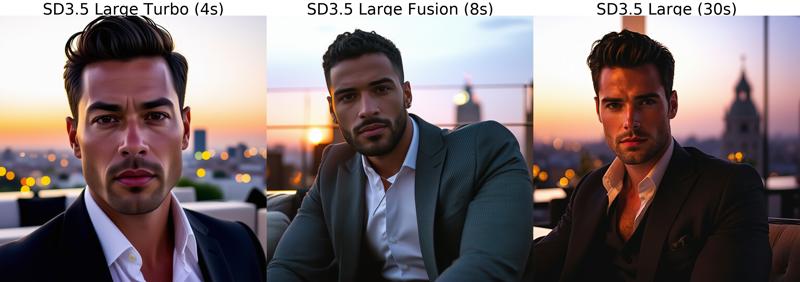

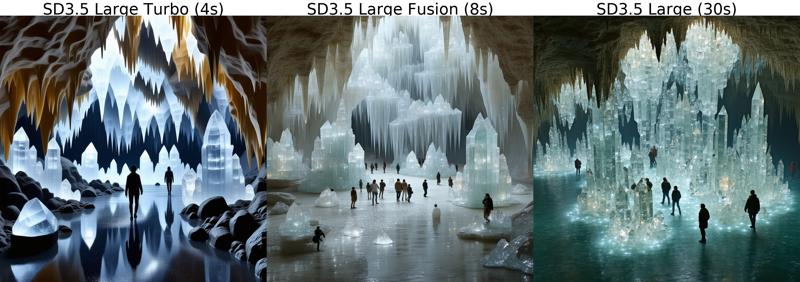

An experimental merge of SD3.5 Large and SD3.5 Large Turbo. I saved it when I found a good balance at 8 steps, but it needs further testing! So far, quality seems better than the Turbo model with little speed sacrifice, although it can also produce some pretty incoherent images.

Good results with CFG 1.0-3.0

Work in progress

P.S. I borrowed some test prompts from the SD3.5 image feed :)

Node for merging SD3.5 Large with the right number of joint blocks: https://pastebin.com/b88v5D8k
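The linked node's code is not reproduced here. To illustrate what a block-wise merge does in general, below is a minimal Python sketch under stated assumptions, not the pastebin node's actual method. It linearly interpolates two checkpoints' state dicts with a separate ratio per joint block. It assumes MMDiT weights are keyed like `joint_blocks.<index>.<rest>`. The names `merge_by_block`, `block_ratios`, and `default_ratio` are hypothetical, and toy float weights stand in for real tensors.

```python
import re
from typing import Dict, Mapping, TypeVar

T = TypeVar("T")

# Assumption: SD3-style MMDiT checkpoints name transformer block weights
# like "...joint_blocks.<index>.<rest>". This pattern is illustrative only.
BLOCK_RE = re.compile(r"joint_blocks\.(\d+)\.")


def merge_by_block(
    base: Mapping[str, T],
    other: Mapping[str, T],
    block_ratios: Mapping[int, float],
    default_ratio: float = 0.0,
) -> Dict[str, T]:
    """Hypothetical helper: interpolate two state dicts block by block.

    A ratio of 0.0 keeps the base weight and 1.0 takes the other model's
    weight. Keys outside any joint block (or blocks missing from
    block_ratios) use default_ratio.
    """
    merged: Dict[str, T] = {}
    for key, w_base in base.items():
        if key not in other:
            merged[key] = w_base  # no counterpart: keep the base weight as-is
            continue
        match = BLOCK_RE.search(key)
        if match:
            ratio = block_ratios.get(int(match.group(1)), default_ratio)
        else:
            ratio = default_ratio
        # Plain linear interpolation; works for floats or tensors.
        merged[key] = w_base * (1.0 - ratio) + other[key] * ratio
    return merged


# Toy example: "large" and "turbo" stand in for the two checkpoints.
large = {"joint_blocks.0.attn.w": 1.0, "joint_blocks.1.attn.w": 1.0, "final_layer.w": 1.0}
turbo = {"joint_blocks.0.attn.w": 3.0, "joint_blocks.1.attn.w": 3.0, "final_layer.w": 3.0}
merged = merge_by_block(large, turbo, {0: 1.0, 1: 0.5})
print(merged)
```

Because the function only relies on `*` and `+` with a float, the same sketch should also apply to PyTorch tensors loaded from a checkpoint. Choosing which blocks come from Turbo, and at what ratio, is the part that needs tuning.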


Description

A LoRA extracted from the difference between this model and the regular SD3.5 Large.


Comments (6)

This model does not contain a VAE; it needs to be loaded separately.

Seems like a good compromise. I am finding SD3.5 Large a bit slow without any Hyper LoRAs available yet, even on an RTX 3090.

Only 2 GB for the pruned model!

Was this uploaded correctly?

The same fucking butt chin from Flux is here as well. Fuck this shit, I'm out, back to SDXL.

Comfy says "cannot detect model type". Where do I have to place it? (the full version)

I just tested this out, and yeah, it's pretty fast, and the images have the same great quality I would get with the original checkpoint model, all in 8 steps. Since it generated my image in 3 minutes as opposed to 20 minutes on the original model, I will try testing 16-24 steps. SD3.5 is killing it so far; I'm not returning to FLUX, so thanks for setting this up for us, buddy.

P.S. Make sure you guys use the Load Diffusion Model node for the checkpoint model. Download the VAE file from this page, load it with a separate Load VAE node, and connect that to your VAE inputs.