This is a simple Flux AI workflow for ComfyUI.

Many beginners don't know how to add a LORA node and wire it up, so I've included one here to make it easier for you to get started and focus on your testing.

There is also a new way to run Flux easily with one click: https://civarchive.com/models/628682/flux-1-checkpoint-easy-to-use

Check out more detailed instructions here: https://maitruclam.com/flux-ai-la-gi/

To summarize my experience with it:

You will need at least 30 GB of disk space to use them :)
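For reference, the four required files listed below add up to about 34 GB (23.8 + 9.79 + 0.246 + 0.335), before the optional 4.89 GB fp8 text encoder. A minimal sketch for checking free space before downloading, assuming Linux with GNU coreutils `df` (as on Ubuntu); the function names are made up for this example:

```shell
#!/usr/bin/env bash
# Rough disk-space check before downloading Flux (assumes GNU coreutils df).

# Sum of the required file sizes listed in this guide, in GB:
# flux1-dev 23.8 + t5xxl_fp16 9.79 + clip_l 0.246 + ae 0.335
required_gb() {
  awk 'BEGIN { printf "%.2f\n", 23.8 + 9.79 + 0.246 + 0.335 }'
}

# Free space (in GB, powers of 1000) on the filesystem that holds $1.
free_gb() {
  df -B GB --output=avail "$1" | tail -n 1 | tr -dc '0-9'
}

# Succeeds if the filesystem holding $1 has room for all required files.
has_room_for_flux() {
  local free need
  free=$(free_gb "$1")
  need=$(required_gb)
  awk -v f="$free" -v n="$need" 'BEGIN { exit !(f >= n) }'
}

# Example (the path is an assumption, adjust it to your install):
# has_room_for_flux /home/ubuntu && echo "enough space" || echo "not enough space"
```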

***

If you are a newbie like me, this should make it less confusing to figure out how to use Flux on ComfyUI.

In addition to this workflow, you will also need:

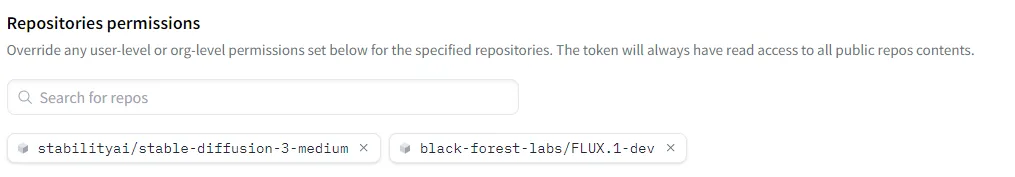

Download Model:

1. Model: flux1-dev.sft: 23.8 GB

Link: https://huggingface.co/black-forest-labs/FLUX.1-dev/tree/main

Location: ComfyUI/models/unet/

Download CLIP:

1. t5xxl_fp16.safetensors: 9.79 GB

2. clip_l.safetensors: 246 MB

3. (optional, if your machine has less than 32 GB of RAM TvT) t5xxl_fp8_e4m3fn.safetensors: 4.89 GB

Link: https://huggingface.co/comfyanonymous/flux_text_encoders/tree/main

Location: ComfyUI/models/clip/
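If you are not sure which T5 file to use, here is a small sketch of the 32 GB RAM rule of thumb above. It assumes Linux (it reads `/proc/meminfo`), and `pick_t5` and `total_ram_gb` are illustrative names, not part of ComfyUI:

```shell
#!/usr/bin/env bash
# Suggest a T5 text encoder: below 32 GB of RAM, use the fp8 file instead of fp16.

# $1 = installed RAM in GB (e.g. 16, 32, 64)
pick_t5() {
  local ram_gb=$1
  if [ "$ram_gb" -lt 32 ]; then
    echo "t5xxl_fp8_e4m3fn.safetensors"
  else
    echo "t5xxl_fp16.safetensors"
  fi
}

# Total RAM in GB. MemTotal is reported in kB and is a little below the
# installed amount, so round up (a 32 GB machine usually shows ~31.x GiB).
total_ram_gb() {
  awk '/^MemTotal:/ { gb = $2 / 1048576; r = int(gb); if (r < gb) r++; print r }' /proc/meminfo
}

# Example (Linux): download the suggested file
# wget -P /home/ubuntu/ComfyUI/models/clip/ \
#   "https://huggingface.co/comfyanonymous/flux_text_encoders/resolve/main/$(pick_t5 "$(total_ram_gb)")"
```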

Download VAE:

1. ae.sft: 335 MB

Link: https://huggingface.co/black-forest-labs/FLUX.1-schnell/blob/main/ae.safetensors

Location: ComfyUI/models/vae/

If you are using an Ubuntu VPS like me, the commands are as simple as this:

```shell
# Download t5xxl_fp16.safetensors to ComfyUI/models/clip/
wget -P /home/ubuntu/ComfyUI/models/clip/ https://huggingface.co/comfyanonymous/flux_text_encoders/resolve/main/t5xxl_fp16.safetensors

# Download clip_l.safetensors to ComfyUI/models/clip/
wget -P /home/ubuntu/ComfyUI/models/clip/ https://huggingface.co/comfyanonymous/flux_text_encoders/resolve/main/clip_l.safetensors

# (Optional) Download t5xxl_fp8_e4m3fn.safetensors to ComfyUI/models/clip/
wget -P /home/ubuntu/ComfyUI/models/clip/ https://huggingface.co/comfyanonymous/flux_text_encoders/resolve/main/t5xxl_fp8_e4m3fn.safetensors

# Download ae.safetensors to ComfyUI/models/vae/
wget -P /home/ubuntu/ComfyUI/models/vae/ https://huggingface.co/black-forest-labs/FLUX.1-schnell/resolve/main/ae.safetensors
```
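After downloading, you can sanity-check each file. A `.safetensors` (or `.sft`) file starts with an 8-byte little-endian number N giving the length of a JSON header, and that header begins with `{`. A truncated download or an HTML error page saved under the model's name fails this check. A minimal sketch, assuming GNU `od` on a little-endian machine (x86-64 or ARM64); `check_safetensors` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Quick structural check of a .safetensors / .sft file (not a full validation).
check_safetensors() {
  local f=$1 size n first
  [ -f "$f" ] || { echo "missing: $f"; return 1; }
  size=$(wc -c < "$f" | tr -d ' ')
  [ "$size" -ge 9 ] || { echo "too small: $f"; return 1; }
  # First 8 bytes as an unsigned 64-bit integer (host byte order, assumed little-endian).
  n=$(od -An -t u8 -N 8 "$f" | tr -d ' ')
  # The 9th byte should be the "{" that opens the JSON header.
  first=$(head -c 9 "$f" | tail -c 1)
  # A real header is small: more than 9 digits means the first bytes are garbage
  # (e.g. "<!DOCTYPE"), and the header must also fit inside the file.
  if [ "${#n}" -gt 9 ] || [ "$first" != "{" ] || [ $((n + 8)) -gt "$size" ]; then
    echo "looks broken: $f"
    return 1
  fi
  echo "ok: $f"
}

# Example: check the files downloaded above
# for f in /home/ubuntu/ComfyUI/models/clip/*.safetensors /home/ubuntu/ComfyUI/models/vae/ae.safetensors; do
#   check_safetensors "$f"
# done
```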

For the model, you will need to learn how to generate a Hugging Face access token and use it to authenticate the download.

I don't know much about tokens, so you may need to read up on them yourself.
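A minimal sketch of the token download, assuming that you have accepted the FLUX.1-dev license on its Hugging Face page while logged in, that you created a token in your account's Access Tokens settings and exported it as `HF_TOKEN`, and that the file in the repo is named `flux1-dev.safetensors` (this guide lists it as `flux1-dev.sft`; check the repo's file list and adjust `MODEL_URL`). `wget --header` sends the token as a standard `Authorization: Bearer` header:

```shell
#!/usr/bin/env bash
# Download the gated FLUX.1-dev weights with a Hugging Face access token.
UNET_DIR=/home/ubuntu/ComfyUI/models/unet
# Assumed file name; older copies of this guide call it flux1-dev.sft.
MODEL_URL=https://huggingface.co/black-forest-labs/FLUX.1-dev/resolve/main/flux1-dev.safetensors

# Build the HTTP header that carries the token.
auth_header() {
  printf 'Authorization: Bearer %s' "$1"
}

download_flux_dev() {
  # Stop with a message if HF_TOKEN is not set.
  : "${HF_TOKEN:?export HF_TOKEN=hf_... first}"
  mkdir -p "$UNET_DIR"
  # -c resumes a partial download if the connection drops (the file is ~24 GB).
  wget -c --header="$(auth_header "$HF_TOKEN")" -P "$UNET_DIR" "$MODEL_URL"
}

# Run it (left commented so the snippet can be sourced safely):
# export HF_TOKEN=hf_your_token_here
# download_flux_dev
```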

Why didn't I make a tutorial for Windows 10, 11, or XP? What do you expect from a Mario 64 laptop :)

Original tutorial: https://comfyanonymous.github.io/ComfyUI_examples/flux/

How to use:

• Sampling method: Euler

• Schedule type: Simple

• Sampling steps: 30

Weight: 0.8 - 1.2. Best: 0.8

Instructions for use:

• Sampling method: Euler a

• Schedule type: Simple

• Sampling steps: 30

Weight: 0.8 - 1.2. Best: 0.8
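These settings map onto ComfyUI's built-in nodes: sampler `euler` (or `euler_ancestral` for Euler a), scheduler `simple`, 30 steps, and LoRA strength 0.8. A rough sketch that builds an API-format prompt for ComfyUI's HTTP `/prompt` endpoint, assuming ComfyUI runs locally on its default port 8188 with the files from this guide in place. `my_flux_lora.safetensors` is a hypothetical LoRA file name, and the prompt text is pasted into the JSON unescaped, so avoid double quotes and backslashes in it:

```shell
#!/usr/bin/env bash
# Build a ComfyUI API-format prompt: Flux dev + one LoRA, Euler / Simple / 30 steps.
# File names are assumptions: use the names in your models/unet, models/loras folders.
# cfg 1.0 is typical for Flux dev; at cfg 1.0 the empty negative prompt has no effect.
UNET_NAME=${UNET_NAME:-flux1-dev.sft}
LORA_NAME=${LORA_NAME:-my_flux_lora.safetensors}   # hypothetical LoRA file

# $1 = prompt text, $2 = LoRA weight (0.8 - 1.2, default 0.8)
build_prompt() {
  local text=$1 weight=${2:-0.8}
  cat <<EOF
{"prompt": {
  "1": {"class_type": "UNETLoader",
        "inputs": {"unet_name": "$UNET_NAME", "weight_dtype": "default"}},
  "2": {"class_type": "DualCLIPLoader",
        "inputs": {"clip_name1": "t5xxl_fp16.safetensors",
                   "clip_name2": "clip_l.safetensors", "type": "flux"}},
  "3": {"class_type": "LoraLoader",
        "inputs": {"model": ["1", 0], "clip": ["2", 0], "lora_name": "$LORA_NAME",
                   "strength_model": $weight, "strength_clip": $weight}},
  "4": {"class_type": "CLIPTextEncode", "inputs": {"clip": ["3", 1], "text": "$text"}},
  "5": {"class_type": "CLIPTextEncode", "inputs": {"clip": ["3", 1], "text": ""}},
  "6": {"class_type": "EmptySD3LatentImage",
        "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
  "7": {"class_type": "KSampler",
        "inputs": {"model": ["3", 0], "positive": ["4", 0], "negative": ["5", 0],
                   "latent_image": ["6", 0], "seed": 0, "steps": 30, "cfg": 1.0,
                   "sampler_name": "euler", "scheduler": "simple", "denoise": 1.0}},
  "8": {"class_type": "VAELoader", "inputs": {"vae_name": "ae.safetensors"}},
  "9": {"class_type": "VAEDecode", "inputs": {"samples": ["7", 0], "vae": ["8", 0]}},
  "10": {"class_type": "SaveImage",
         "inputs": {"images": ["9", 0], "filename_prefix": "flux"}}
}}
EOF
}

# Example: queue one image (needs a running ComfyUI)
# build_prompt "a woman reading in a cafe" 0.8 |
#   curl -s -X POST -H "Content-Type: application/json" --data-binary @- http://127.0.0.1:8188/prompt
```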

In the prompt, use the word "Woman"; using the word "Girl" creates many body anatomy errors.

0-0-0-0-0-0-0-0-0-0-0-0

You don't actually need the activation keyword for it to work, but adding it can help Flux pick up the concept faster and give better results. ⚡

Note: It works well with FLUX.1-Turbo-Alpha and with human face LORAs. 👤✨

Useful and FREE resources:

❤️ Free server to make art with Flux: Shakker

✨ More FLUX LORAs? A list and detailed description of each LORA I've made is here: https://maitruclam.com/lora

🆕 First time using FLUX? An explanation and tutorial for offline A1111 Forge and ComfyUI is here: https://maitruclam.com/flux-ai-la-gi/

🛠️ How to train your LORA with Flux? My detailed instructions are here: https://maitruclam.com/training-flux/

❤️ Donate me (I would be really surprised if you did that! 😄): https://maitruclam.com/donate

Find me / Contact for work on:

📱 Facebook: @maitruclam4real

💬 Discord: @maitruclam

🌐 Web: maitruclam.com

Description

Flux dev AI

Comments (38)

What do we need 30 GB of?

model + VAE + CLIP = 30+ GB of storage

Also a decent amount of RAM and VRAM.

@Darknoice Oh yeah, I have 24 GB VRAM and 32 GB RAM in total; the average time for the dev version is 30 s to 47 s.

@maitruclam Is that the time for 1024x1024 images?

@maitruclam With the same setup it takes me a little longer, but the latest ComfyUI seems to have a bug or something: I'm getting an error about FP8 (even with the FP16 CLIP :( ). Well, I'll try again later.

@JohnnyB1 same for me

@JohnnyB1 Try reducing the steps to 12 and the image size to 512x768; some of my friends tried that and it worked OK with the dev version.

I've done some experiments and still can't figure out how to add in a negative prompt. Perhaps it can't be done right now?

Basically it's not necessary, unless you combine it with SD for face fixing, inpainting, or img2img (someone will make a workflow for these eventually).

@maitruclam It's not necessary for your workflow, no. You're right. But it is necessary if you want to remove recurring objects that keep popping up.

@filmington Yeah, I'd love to know too! No matter how much I emphasize that an image should look like a digital oil painting with heavy brush strokes, it always turns out like a real photograph.

I don't know if the dev team likes BlackPink, but if you prompt Asian or Korean, the face will look very similar to Jennie in BlackPink :)

It uses about 36 GB. The results with realism are good, and with anime not bad. On the downside, its database has no characters: no Nier 2B, no Konosuba. And yes, it doesn't want to do catgirls either; it ignores the prompt "neko".

My ComfyUI is the latest version, and both the main model and the VAE in .sft format are in the specified folders, but when I select the model and VAE in the ComfyUI workflow, I can't see the .sft files, and I don't know why.

Try reloading and switching the file extension between .safetensors and .sft. Also, do you have ComfyUI connected to A1111 to save space? If so, try disconnecting them or putting the model file in the A1111 folder.

Also, you can check this post to see if it has any suggestions for you: https://civitai.com/articles/6479/flux-error-and-how-to-fix-it-or-not

Same here, I couldn't find the solution. I changed the extension to .safetensors, then pressed Queue, and after that it gave an error.

I'm getting this error when I change the UNet flux1-dev file from .sft to .safetensors:

```
Error occurred when executing UNETLoader:

'conv_in.weight'

File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\execution.py", line 151, in recursive_execute
  output_data, output_ui = get_output_data(obj, input_data_all)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\execution.py", line 81, in get_output_data
  return_values = map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\execution.py", line 74, in map_node_over_list
  results.append(getattr(obj, func)(**slice_dict(input_data_all, i)))
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\nodes.py", line 814, in load_unet
  model = comfy.sd.load_unet(unet_path)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\comfy\sd.py", line 565, in load_unet
  model = load_unet_state_dict(sd)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\comfy\sd.py", line 538, in load_unet_state_dict
  model_config = model_detection.model_config_from_diffusers_unet(sd)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\comfy\model_detection.py", line 380, in model_config_from_diffusers_unet
  unet_config = unet_config_from_diffusers_unet(state_dict)
File "D:\_software\TyDiffusion\Engines\ComfyUI\ComfyUI\comfy\model_detection.py", line 262, in unet_config_from_diffusers_unet
  match["model_channels"] = state_dict["conv_in.weight"].shape[0]
```

The VAE link points to a 404 page, is there an alternative link?

You have to get it here: https://huggingface.co/black-forest-labs/FLUX.1-dev/tree/main. It is called ae.safetensors as of today.

@InfiniteFantasyArt Thank you!!

I'm trying to make a simple spiral using Flux, but it can't draw one. It only does round circles, not a spiral :/

Anyone else think this is weird? Shouldn't these models easily generate simple primitives like this?

trying to generate something like this:

https://openclipart.org/image/2400px/svg_to_png/131347/1302066690.png

I am surprised it doesn't recognize a spiral in the prompt - but you could also train a LoRA to teach it to draw spirals, worst case!

Sooooooooooooooo, if I download it and use it inside A1111, will I be able to generate images with text effects now??

If you use A1111 Forge, it will work.

Can anyone please help me update dual clip loader? Mine only has options for SD3 and SDXL. :(

check this: https://civitai.com/models/617609/flux1-dev

I needed to update-all, which took all night, but now I've gotten the option for FLUX to appear. The problem now is that 6gb VRAM is apparently not enough to run it, I need at least 8. It keeps telling me the "header is too large" when I try to load ae.safetensors.

The links to the 1-click installer are still helpful so thank you, but I imagine that 8gb limit will still be in effect.

@RuinDweller I think you need to try the g4 version; I can run it with 6 GB VRAM on a 3060 laptop.

Please change the VAE link; ae.safetensors moved to another folder.

ok done bro

@maitruclam thanks

Can you recommend a Flux workflow that lets me create handwriting that looks like it was drawn on a whiteboard? I tried it myself in Flux and it doesn't look photorealistic.

Hmm, I think you will need to train a LORA yourself for that. As of now, it is still the best model for text generation.

So far, this is the only Flux workflow on Civitai that I've gotten to run. Unfortunately, it's a little too simplistic for me, sorry.

Like its name says, dude: simple and for beginners. I made it more complex by adding one more node to load a LORA V:

@maitruclam I didn't mean to come off as rude. But it was a great starting point. I have since added elements to this workflow and made it my own. Thanks for that.

@shinypubes2626 It's normal, I don't find it offensive or rude hahaha

Lucky you, I just get a black square. Back to Forge, I guess.