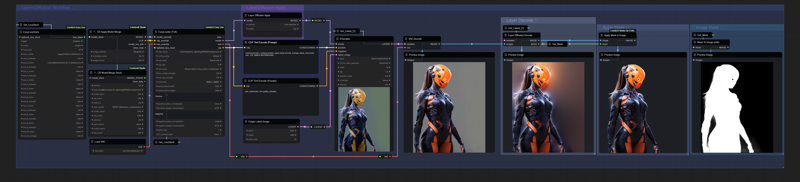

🌟ComfyUI LayerDiffusion Workflow [V.1.02] 🌟

This workflow is based on the Layer Diffusion model and the implementation by Chenlei Hu.

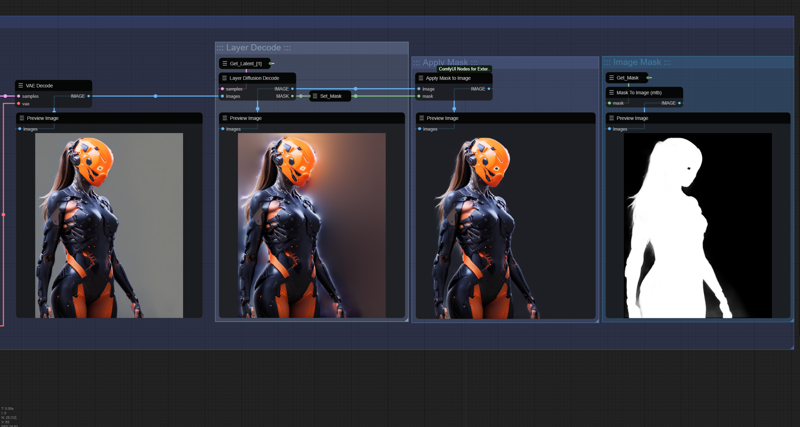

It is useful for compositing with masks and transparency.
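As a rough illustration of what "compositing with transparency" means once an image has been decoded, here is a minimal sketch of the standard "over" operation in plain Python. It assumes the decoded result is an RGBA image with straight (non-premultiplied) alpha and 0-255 channel values. The function names `over_pixel` and `composite` are hypothetical helpers for this example and are not part of ComfyUI or ComfyUI-layerdiffusion.

```python
def over_pixel(fg_rgba, bg_rgb):
    """Composite one straight-alpha RGBA pixel over an opaque RGB pixel.

    Assumption: channels are ints in 0-255 and alpha is NOT premultiplied.
    """
    r, g, b, a = fg_rgba
    alpha = a / 255.0
    # out = foreground * alpha + background * (1 - alpha), per channel
    return tuple(
        round(f * alpha + k * (1.0 - alpha))
        for f, k in zip((r, g, b), bg_rgb)
    )


def composite(fg, bg):
    """Composite an RGBA image over an RGB background of the same size.

    Both images are lists of rows; each row is a list of pixel tuples.
    """
    same_shape = len(fg) == len(bg) and all(
        len(fg_row) == len(bg_row) for fg_row, bg_row in zip(fg, bg)
    )
    if not same_shape:
        raise ValueError("foreground and background must have the same size")
    return [
        [over_pixel(f, k) for f, k in zip(fg_row, bg_row)]
        for fg_row, bg_row in zip(fg, bg)
    ]


# Hypothetical 1x2 image: an opaque red pixel and a fully transparent pixel
foreground = [[(255, 0, 0, 255), (255, 0, 0, 0)]]
background = [[(0, 0, 255), (0, 0, 255)]]
result = composite(foreground, background)
```

In practice an image library would do this over whole arrays; the per-pixel version is only meant to make the arithmetic visible.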

NOTE: This workflow requires ComfyUI-layerdiffusion:

https://github.com/huchenlei/ComfyUI-layerdiffusion.git

SDXL | LCM SUPPORT

Multi Model Merge

Multi Lora

Easy Loader Full

Recommended model and LoRAs for this workflow:

Any SDXL model

ComfyUI Manager is needed to install any missing nodes.

Comments (3)

I tried creating a LayerDiffusion workflow earlier today and I got terrible results using a single Lightning checkpoint. It worked pretty well with a normal SDXL checkpoint, but the Lightning checkpoints were just awful.

Did you have the same problem? Is that why you merged a Lightning model with a standard model?

@wyxzddsjj919 SDXL Lightning models are not terrible in my experience. They have been able to create everything I have wanted with very high quality. It's much better, in my experience, than LCM or Turbo.

Aw shucks, I'm running out of VRAM during decoding, of all things (not generation, not regular VAE decoding, but the mask/ARGB decoding).

On the upside, though, it seems to work with Pony Diffusion. I don't want to decode anyway; I want to latent-compose with proper masking. I still haven't ruled out that it can work fine without decoding (it should, shouldn't it? The transparency is already present in latent space, that's the point).