A unified, modular SDXL/Pony/Illustrious powerhouse designed to consolidate professional-grade tools into a single, cohesive environment. This workflow eliminates "tab fatigue" by integrating CFG/Noise engineering, multi-LoRA management, ControlNet, IPAdapter, InstantID, advanced Upscaling, and Detailers into ONE interface.

Designed for speed and high-precision control, this workflow is essentially a "power workbench" overflowing with switches, sliders, and logic gates. It is undeniably complex, complex enough to make a beginner’s brain do a backflip, but for the pros and technical geeks, it’s a total playground. Think of it as the cockpit of a fighter jet: intimidating at first, but incredibly powerful once you know what the buttons do.

Don’t panic! I’ve left a breadcrumb trail of notes and mini-tutorials in every single section. Please, I’m begging you: read them. If you skip the manual and things get weird, don't say I didn't warn you! Devour the notes before you hit that "Queue Prompt" button; your VRAM (and your sanity) will thank you.

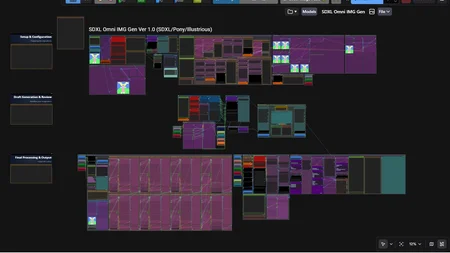

Core Philosophy: Process in clear layers. Fully configure the workflow in Row 1, generate and review a draft in Row 2, then produce the final high-quality render in Row 3. The completed image returns to Row 2 for side-by-side comparison before saving.

The workflow is intentionally organized into three horizontal zones for logical progression:

Row 1 (Top) – Setup & Configuration

Row 2 (Middle) – Draft Generation & Review

Row 3 (Bottom) – Final Processing & Output

🛠️ System Requirements & Hardware

GPU: NVIDIA (30xx/40xx Series).

VRAM: 12GB Minimum / 24GB Recommended.

System RAM: 32GB Minimum / 64GB+ Recommended.

🎯 Software Environment

ComfyUI Version: 0.15.0+

ComfyUI Frontend: 1.39.16+

💻 Core Software Environment

Python 3.12.x (highly recommended): Versions 3.10 and 3.11 may work, but 3.12 provides the best compatibility with recent dependency updates. It also adds native support for nested f-strings and delivers improved execution speed.

PyTorch: 2.10.0+cu130 (minimum 2.6.0+cu124). The cu130 build is compiled for CUDA 13.0.

TorchVision: 0.25.0+cu130

TorchAudio: 2.10.0+cu130
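If you want to confirm your environment before loading the workflow, a quick check along these lines may help. This is only a sketch: the helper names (`parse_torch_version`, `torch_is_new_enough`, `report`) are my own, and the only numbers it relies on are the recommended Python version and the minimum PyTorch version listed above.

```python
import sys

# Values taken from the list above; the helper names are illustrative.
RECOMMENDED_PYTHON = (3, 12)
MINIMUM_TORCH = (2, 6, 0)


def parse_torch_version(version: str) -> tuple[tuple[int, ...], str]:
    """Split '2.10.0+cu130' into ((2, 10, 0), 'cu130')."""
    release, _, local = version.partition("+")
    numbers = tuple(int(p) for p in release.split(".")[:3] if p.isdigit())
    return numbers, local


def torch_is_new_enough(version: str) -> bool:
    """Compare numerically, so that 2.10.0 counts as newer than 2.6.0."""
    numbers, _ = parse_torch_version(version)
    return numbers >= MINIMUM_TORCH


def report() -> list[str]:
    """Describe the running Python and, if installed, the PyTorch build."""
    py = sys.version_info
    note = "" if (py.major, py.minor) == RECOMMENDED_PYTHON else " (3.12 is recommended)"
    lines = [f"Python {py.major}.{py.minor}.{py.micro}{note}"]
    try:
        import torch
    except ImportError:
        lines.append("PyTorch: not installed")
        return lines
    version = str(torch.__version__)
    status = "ok" if torch_is_new_enough(version) else "older than the 2.6.0 minimum"
    lines.append(f"PyTorch {version}: {status}")
    lines.append(f"CUDA build: {torch.version.cuda}, GPU visible: {torch.cuda.is_available()}")
    return lines


if __name__ == "__main__":
    print("\n".join(report()))
```

Run it with the same Python interpreter that launches ComfyUI, otherwise it reports on the wrong environment. Versions are compared as number tuples rather than strings because a plain string comparison would rank "2.10.0" below "2.6.0".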

📦 Essential Python Dependencies

These libraries are crucial for the stability of your custom nodes. Where a version is given, it is pinned to prevent conflicts.

Core:

numpy==1.26.4, setuptools>=70.0.0, pydantic>=2.10.0

Imaging:

pillow==10.x, opencv-python, blendmodes==2025, glitch_this, rembg

Identity & Face:

insightface==0.7.3, onnxruntime-gpu

Miscellaneous:

tensorflow
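To see whether your installed packages match the pins above, something like the following could work. Treat it as a rough sketch: it uses only the standard library's `importlib.metadata`, the `PINS` table is copied from a subset of the list above, and the helpers `as_tuple`, `satisfies`, and `check` are hypothetical names of mine. It understands only the `==`, `==N.x`, and `>=` forms that appear in this list, not full pip specifier syntax.

```python
from importlib import metadata

# A subset of the pins listed above. An empty spec means "any version, just installed".
PINS = {
    "numpy": "==1.26.4",
    "setuptools": ">=70.0.0",
    "pydantic": ">=2.10.0",
    "pillow": "==10.x",
    "insightface": "==0.7.3",
    "opencv-python": "",
    "onnxruntime-gpu": "",
}


def as_tuple(version: str) -> tuple[int, ...]:
    """Keep the leading numeric components: '2.10.3' -> (2, 10, 3)."""
    parts = []
    for piece in version.split("."):
        if not piece.isdigit():
            break
        parts.append(int(piece))
    return tuple(parts)


def satisfies(installed: str, spec: str) -> bool:
    """Check one installed version against one simple spec."""
    if not spec:
        return True
    if spec.startswith(">="):
        return as_tuple(installed) >= as_tuple(spec[2:])
    if spec.startswith("=="):
        wanted = spec[2:]
        if wanted.endswith(".x"):  # e.g. '10.x' accepts any 10.* release
            prefix = as_tuple(wanted[:-2])
            return as_tuple(installed)[: len(prefix)] == prefix
        return installed == wanted
    raise ValueError(f"unsupported spec: {spec!r}")


def check(pins: dict[str, str] = PINS) -> dict[str, str]:
    """Return 'ok', 'missing', or a mismatch message for each package."""
    results = {}
    for name, spec in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            results[name] = "missing"
            continue
        if satisfies(installed, spec):
            results[name] = "ok"
        else:
            results[name] = f"{installed} does not match {spec}"
    return results


if __name__ == "__main__":
    for package, status in check().items():
        print(f"{package}: {status}")
```

The script only reports; it never installs or changes anything, so it is safe to run inside the ComfyUI environment.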

⚡ High-Performance Attention

Please read this article for more information: https://civarchive.com/articles/21516/install-sageattention-2-notes.

SageAttention: Version 2.2.0 (Mandatory optimization).

Flash Attention 2: (Fallback/Legacy support via Torch SDPA).

Flash Attention 3: Version 3.0.0+cu130 (Optimized for RTX 40 series).

Triton: 3.x, Windows-compatible build, for FP8/NF4 dequantization.

🧩 Required Custom Node Packs

Core Infrastructure:

rgthree-comfy, ComfyUI-Manager, ComfyUI_essentials, Use Everywhere (UE Nodes), ComfyUI-LogicUtils, ComfyMath, ComfyUI-JNodes, ComfyLiterals

CFG & Sampling:

efficiency-nodes-comfyui, sd-perturbed-attention, Skimmed_CFG, RES4LYF, pre_cfg_comfy_nodes_for_ComfyUI, ComfyUI-Detail-Daemon

Identity & Perception:

ComfyUI_IPAdapter_plus, comfyui_controlnet_aux, ComfyUI_ZenID, ComfyUI-WD14-Tagger, ComfyUI-AdvancedLivePortrait, Extra Models for ComfyUI

Upscaling & Motion:

ComfyUI Impact Pack, ComfyUI Impact Subpack, UltimateSDUpscale, ComfyUI-SeedVR2_VideoUpscaler

Creative Suite:

JPS Custom Nodes, Comfyroll Studio, ComfyUI-KJNodes, ComfyUI_Primere_Nodes, ComfyUI-JakeUpgrade, ComfyUI-pause, ComfyUI-mxToolkit

VFX & Realism:

ComfyUI-Optical-Realism, CRT-Nodes, Virtuoso Nodes, ComfyUI_SKBundle, ComfyUI-FBCNN, comfyui_fill-nodes

📥 Model Requirements

SDXL Checkpoint: Any SDXL/Pony/Illustrious

SDXL VAE: Baked VAE (or any SDXL VAE)

Upscale/ESRGAN: Any 4x upscale models.

ControlNet:

xinsir/controlnet-tile-sdxl-1.0; ttplanet_sdxl_controlnet_tile; diffusion_pytorch_model-instantID; diffusion_pytorch_model_union_promax (or use a separate model for each preprocessor)

IPAdapter:

ip-adapter_sdxl_vit-h or ip-adapter-plus_sdxl_vit-h; ip-adapter-faceid-plusv2_sdxl; ip-adapter-faceid-portrait_sdxl; ip-adapter-faceid-portrait_sdxl_unnorm

CLIP-Vision:

CLIP-ViT-H-14 or CLIP-ViT-bigG-14

Instant-ID:

ip-adapter_instant_id_sdxl

Detailers: Any detailer you prefer. I use person (#1), face (#2), eyes (#3), hair (#4), hands (#5), skin (#5), fashion (non-cond #1), furry (non-cond #2)

Others:

depth_anything_v2_vitl (depth map); ema_vae_fp16 (SeedVR2); seedvr2_ema_7b_fp8_e4m3fn_mixed_block / seedvr2_ema_3b_fp8_e4m3fn (SeedVR2); clipseg-rd64-refined-fp16 (noise map)

🚩 Optimized Execution Flags (Optional)

Use these flags in your .bat file to leverage the full power of your GPU:

--fast fp16_accumulation

--dont-upcast-attention

--use-sage-attention

--disable-xformers (because xformers only supports Python <= 3.10.11)

--preview-method auto

--highvram/--normalvram/--lowvram
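If you would rather assemble the launch line programmatically than hand-edit a .bat file, here is a minimal sketch. The flags are exactly the ones listed above. Everything else is an assumption on my part: the function name `build_launch_command`, the `main.py` entry-point path, and the `--normalvram` default. Adjust them to match your install.

```python
import sys

# The three VRAM modes are alternatives: pick exactly one.
VRAM_MODES = ("--highvram", "--normalvram", "--lowvram")


def build_launch_command(
    vram_mode: str = "--normalvram",   # assumed default, choose what fits your GPU
    main_script: str = "main.py",      # assumed path to the ComfyUI entry point
    python_exe: str = sys.executable,
) -> list[str]:
    """Return the ComfyUI launch command as an argument list."""
    if vram_mode not in VRAM_MODES:
        raise ValueError(f"vram_mode must be one of {VRAM_MODES}, got {vram_mode!r}")
    return [
        python_exe,
        main_script,
        "--fast", "fp16_accumulation",
        "--dont-upcast-attention",
        "--use-sage-attention",
        "--disable-xformers",
        "--preview-method", "auto",
        vram_mode,
    ]


if __name__ == "__main__":
    # Prints the command only; nothing is launched.
    print(" ".join(build_launch_command()))
```

You can paste the printed line into your .bat file, or pass the list to `subprocess.run` from the ComfyUI folder. Building the command as a list keeps each flag and its value as separate arguments, which avoids quoting problems when paths contain spaces.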