v3.2.0

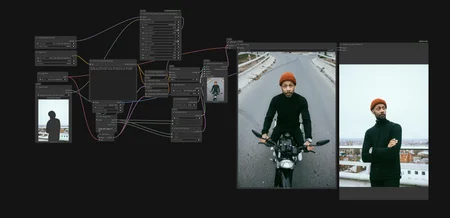

FLUX.2 Klein Identity Feature Transfer Advanced

Identity Feature Transfer now has an Advanced sibling, shipped as part of ComfyUI-Flux2Klein-Enhancer. It uses the same core mechanism as the original, with much more control and an optional subject mask.

FLUX.2 Klein Identity Feature Transfer Advanced: here

Workflow: here. Please use your own parameters; the settings are a matter of taste, not fixed values :D

If you find my work helpful, you can support me and buy me a coffee. I truly spend long hours thinking of solutions :)

----------------------------------------------------------------------------------------------------------------

Controls identity feature steering with per-band strength, a tunable similarity floor, a block schedule, and an optional spatial mask.

double_strength: per-block intensity for the double blocks (early: pose, color, identity). 0.15 to 0.20 is a safe start; raise it to 0.4 to 0.6 for stronger guidance, especially when the reference has multiple subjects.

single_strength: per-block intensity for the single blocks (late: style, texture). Same scale as double_strength.

double_start / double_end / single_start / single_end: which blocks are active for each block type. This lets you isolate identity (early blocks) or texture (late blocks) without touching the other.

block_schedule: flat keeps strength constant, ramp_down hits early blocks harder, ramp_up favors later blocks, peak_mid concentrates in the middle of the active range.

sim_floor: cosine similarity threshold that gates which matches actually contribute. Low (around 0.05) gives a wide pull and a tight identity lock, ideal for subtle edits like outfit swaps where you want the character preserved as exactly as possible. High (around 0.4 to 0.6) makes the pull sparse and gives the model freedom to drift, ideal for broader edits.

mask_threshold: only matters when subject_mask is connected. 0.5 keeps boundary tokens; raise it toward 1.0 to shrink the effective mask inward.

subject_mask (optional): paint the area of the reference you want the identity pulled from. When connected, the cosine pull samples ONLY from masked-in reference tokens.

mode and top_k_percent: same as the standard node.
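To make the block_schedule options concrete, here's a minimal sketch of per-block weight curves over the active range. It assumes linear curves, an inclusive start/end range, and curves that fall all the way to zero at the far end; `block_weights` is a hypothetical helper, not the node's code, and the real shapes may differ.

```python
def block_weights(num_blocks, start, end, strength, schedule="flat"):
    """Hypothetical per-block strength for one block type (double or single).

    Blocks outside the inclusive range [start, end] get 0.0.
    """
    weights = [0.0] * num_blocks
    span = end - start
    if span == 0:
        # A single active block: no curve to shape, just apply strength.
        weights[start] = strength
        return weights
    for i in range(start, end + 1):
        # t runs from 0.0 at the first active block to 1.0 at the last one.
        t = (i - start) / span
        if schedule == "flat":
            shape = 1.0
        elif schedule == "ramp_down":
            shape = 1.0 - t  # strongest at the first active block
        elif schedule == "ramp_up":
            shape = t  # strongest at the last active block
        elif schedule == "peak_mid":
            shape = 1.0 - abs(2.0 * t - 1.0)  # triangle peaking mid-range
        else:
            raise ValueError(f"unknown schedule: {schedule!r}")
        weights[i] = strength * shape
    return weights


# Example: 8 hypothetical blocks, active range 2..6, strength 0.2
for name in ("flat", "ramp_down", "ramp_up", "peak_mid"):
    print(name, [round(w, 3) for w in block_weights(8, 2, 6, 0.2, name)])
```

In this sketch the schedule only reshapes strength inside [start, end]; blocks outside the range stay at zero whichever curve you pick.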

---------------------------------------------------------------------------------------------------------------------------------------------------

The headline upgrade is the mask. The original node pulled features from anywhere in the reference, which meant backgrounds and unwanted subjects could bleed into the generation. With the mask connected, the pull is restricted to whatever you painted, so only the character or area you actually care about contributes to the identity transfer.

To be clear, the mask does NOT modify the reference latent. The model still sees the full reference, attention works exactly the same, scene context is intact. The mask only narrows which reference tokens our identity pull samples from. So the model keeps full freedom over the rest of the generation while the identity transfer stays clean and surgical.
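One way to picture how a painted mask becomes a set of eligible reference tokens, and why mask_threshold shrinks it inward: a minimal sketch assuming the pixel mask is average-pooled onto the latent patch grid and compared against the threshold with `>=`. `mask_to_tokens` and `eligible_tokens` are hypothetical names, and the node's actual resizing may work differently.

```python
def mask_to_tokens(mask, patch):
    """Average-pool a 2D pixel mask (rows of floats in 0..1) onto a grid of
    patch x patch token cells. Partial coverage gives fractional values."""
    rows, cols = len(mask) // patch, len(mask[0]) // patch
    grid = []
    for ty in range(rows):
        row = []
        for tx in range(cols):
            cell = [mask[y][x]
                    for y in range(ty * patch, (ty + 1) * patch)
                    for x in range(tx * patch, (tx + 1) * patch)]
            row.append(sum(cell) / len(cell))
        grid.append(row)
    return grid


def eligible_tokens(token_mask, mask_threshold):
    """Reference tokens the identity pull may sample from. The reference latent
    itself is not modified; this only selects candidates."""
    return [[value >= mask_threshold for value in row] for row in token_mask]


# A 4x4 pixel mask with the left three columns painted, pooled to 2x2 tokens.
pixel_mask = [[1.0, 1.0, 1.0, 0.0] for _ in range(4)]
tokens = mask_to_tokens(pixel_mask, patch=2)
print(tokens)                         # [[1.0, 0.5], [1.0, 0.5]]
print(eligible_tokens(tokens, 0.5))   # [[True, True], [True, True]]
print(eligible_tokens(tokens, 0.75))  # [[True, False], [True, False]]
```

Tokens straddling the mask edge end up with fractional coverage, so a threshold of 0.5 keeps them and anything higher starts dropping them.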

Combined with sim_floor, you can dial the node from a full identity lock all the way to loose guidance with maximum prompt freedom. With separate double and single block strengths, you can target identity early or texture late without touching the other.
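The way subject_mask, sim_floor, and strength interact can be illustrated with a toy cosine pull on plain Python vectors. This sketch assumes each generated token is lerped toward its single most similar eligible reference token and is left alone when that similarity is below sim_floor; the real node's matching and update rules may differ, and every name here is hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a > 0 and norm_b > 0 else 0.0


def cosine_pull(gen_tokens, ref_tokens, ref_eligible, strength, sim_floor):
    """Pull each generated token toward its most similar eligible reference token,
    but only when that similarity reaches sim_floor."""
    candidates = [r for r, ok in zip(ref_tokens, ref_eligible) if ok]
    out = []
    for g in gen_tokens:
        best, best_sim = None, float("-inf")
        for r in candidates:
            s = cosine(g, r)
            if s > best_sim:
                best, best_sim = r, s
        if best is None or best_sim < sim_floor:
            out.append(list(g))  # below the floor (or no candidates): left alone
        else:
            out.append([x + strength * (y - x) for x, y in zip(g, best)])
    return out


gen = [[1.0, 0.0], [0.0, 1.0]]
ref = [[1.0, 0.1], [-1.0, 0.0]]
print(cosine_pull(gen, ref, [True, True], strength=0.5, sim_floor=0.4))   # only token 0 pulled
print(cosine_pull(gen, ref, [True, True], strength=0.5, sim_floor=0.05))  # both tokens pulled
print(cosine_pull(gen, ref, [False, True], strength=0.5, sim_floor=0.4))  # mask removes the good match
```

Lowering sim_floor lets more tokens clear the gate, which is the "wide pull, tight lock" end of the dial; raising it leaves more tokens untouched.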

The standard Identity Feature Transfer is still in the pack. Use it for quick setups; reach for Advanced when you need the mask, the floor, or fine block control.

Next on the to-do list: Identity Guidance Advanced...

v3.0.0

The identity nodes are now released as part of ComfyUI-Flux2Klein-Enhancer. Workflow included.

Two new nodes:

Identity Guidance: controls identity correction during the sampling loop.

strength: how hard to pull toward the reference. 0.3 to 0.5 is a good range.

start_percent / end_percent: when the correction is active during denoising. Leaving some room at the end (e.g. end_percent at 0.8) lets textures refine naturally.

mode: adaptive preserves prompt-driven changes, direct locks everything, channel_match transfers the color/feature palette only.
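A rough sketch of the strength and start_percent/end_percent controls, assuming progress is measured by step index (ComfyUI often maps percents to sigmas instead) and that the pull is a simple linear interpolation. channel_match is read here as per-channel mean/std matching, which is an assumption based on "transfers the color/feature palette only"; the adaptive and direct modes are not sketched. All function names are hypothetical.

```python
from statistics import fmean, pstdev


def guidance_active(step, total_steps, start_percent, end_percent):
    """True when this step's progress (0.0 first step, 1.0 last) is in the window."""
    progress = step / max(total_steps - 1, 1)
    return start_percent <= progress <= end_percent


def pull_toward(pred, ref, strength):
    """Linear pull of every value toward the reference by `strength`."""
    return [p + strength * (r - p) for p, r in zip(pred, ref)]


def channel_match(pred, ref, strength):
    """Per-channel mean/std matching: adopt the reference's statistics
    ("palette") while keeping the prediction's spatial layout."""
    out = []
    for p, r in zip(pred, ref):
        p_mean, r_mean = fmean(p), fmean(r)
        p_std, r_std = pstdev(p), pstdev(r)
        scale = r_std / p_std if p_std > 0 else 1.0
        matched = [(x - p_mean) * scale + r_mean for x in p]
        out.append(pull_toward(p, matched, strength))
    return out


# 10 steps with end_percent 0.8: the last two steps are left uncorrected.
print([guidance_active(s, 10, 0.0, 0.8) for s in range(10)])
```

Under these assumptions, the "room at the end" simply means the final steps skip the correction so textures can settle.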

Identity Feature Transfer: controls feature-level steering inside the attention blocks.

strength: per-block intensity; it is cumulative, so start low (0.15 to 0.25).

start_block / end_block: which blocks are active. 0 to 23 covers the full range.

mode: cosine_pull for per-feature matching, topk_replace to only affect the most similar tokens, mean_transfer for overall character flavor.

top_k_percent: how many tokens are affected in topk_replace mode.
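Why "cumulative, so start low" matters: if every active block nudged features the same fraction of the way toward a fixed target, the total pull would compound as 1 - (1 - strength)^n. The sketch below shows that arithmetic plus toy versions of topk_replace selection and mean_transfer. It assumes top_k_percent is on a 0..100 scale and that the target stays fixed between blocks, which real features will not; the helper names are hypothetical.

```python
def cumulative_pull(strength, n_blocks):
    """Fraction of the gap to a fixed target closed after n_blocks successive
    lerps of `strength` each: 1 - (1 - strength) ** n_blocks."""
    return 1.0 - (1.0 - strength) ** n_blocks


def topk_indices(similarities, top_k_percent):
    """Indices (ascending) of the most similar tokens; top_k_percent in 0..100."""
    k = max(1, round(len(similarities) * top_k_percent / 100))
    ranked = sorted(range(len(similarities)), key=lambda i: similarities[i], reverse=True)
    return sorted(ranked[:k])


def mean_transfer(tokens, ref_tokens, strength):
    """Shift every token by the gap between the mean reference feature and the
    mean generated feature (overall "flavor" only, no per-token matching)."""
    dim = len(tokens[0])
    gen_mean = [sum(t[d] for t in tokens) / len(tokens) for d in range(dim)]
    ref_mean = [sum(t[d] for t in ref_tokens) / len(ref_tokens) for d in range(dim)]
    return [[t[d] + strength * (ref_mean[d] - gen_mean[d]) for d in range(dim)]
            for t in tokens]


print(f"{cumulative_pull(0.2, 24):.3f}")   # 0.995
print(f"{cumulative_pull(0.05, 24):.3f}")  # 0.708
```

Even a per-block strength of 0.05 compounds to a large pull across 24 blocks in this toy model, which is why small values go a long way.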

Both can be used together. Guidance handles the macro, Feature Transfer handles the micro.

Workflow: here

If you find my work helpful, you can support me and buy me a coffee :)

-----------------------------------------------------------------------------------------------------------------------------------------

v2.7.0

I created a node in an attempt to prevent color shifting in FLUX.2 Klein, and I wanted to share it here, as the issue has been bugging me for a while.

The problem: when using a reference latent, the model gradually overrides the reference's color statistics as sampling progresses, causing drift away from your reference. This is especially noticeable in short 4–8 step schedules.

This node hooks into the sampler's post-CFG callback and, after every denoising step, measures the difference between the model's predicted color (per-channel spatial mean) and the reference latent's color, then gently nudges the prediction back. Crucially, only the DC offset (color) is corrected; structure, edges, and texture are completely untouched.

The correction ramps up over time using whichever is stronger between a sigma-based and step-count-based progress signal, so it works reliably even on very short schedules where sigma barely moves.
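The DC-offset idea and the combined progress signal can be sketched in plain Python. This assumes each latent channel is a flat list of spatial values, step progress is (step + 1) / total_steps, and sigma progress is linear between the first and last sigma; the names are hypothetical and this is not the node's implementation, which runs inside the sampler's post-CFG hook.

```python
def schedule_progress(step, total_steps, sigma, sigma_max, sigma_min):
    """Stronger of step-based and sigma-based progress, clamped to [0, 1]."""
    step_p = (step + 1) / total_steps
    span = sigma_max - sigma_min
    sigma_p = (sigma_max - sigma) / span if span > 0 else 0.0
    return min(max(step_p, sigma_p, 0.0), 1.0)


def correct_dc_offset(pred, ref, strength, progress):
    """pred/ref: one flat list of spatial values per latent channel.
    Only each channel's mean (DC offset) is moved; deviations from the mean,
    i.e. structure, edges and texture, are unchanged."""
    out = []
    for p, r in zip(pred, ref):
        drift = sum(r) / len(r) - sum(p) / len(p)  # color drift for this channel
        shift = strength * progress * drift
        out.append([x + shift for x in p])
    return out


# One channel whose mean drifted to 1.0 while the reference mean is 3.0.
print(correct_dc_offset([[0.0, 2.0]], [[3.0, 3.0]], strength=0.5, progress=1.0))
```

Because the same shift is added to every value in a channel, differences between values (the structure) are preserved exactly, and taking the max of both progress signals keeps the ramp moving even when sigma barely changes.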

Settings:

Strength (0.3–0.6 recommended): how aggressively to pull color back toward the reference

Ramp curve: shape of the correction over time; higher values front-load the correction

Channel weights: optionally trust channels with more stable color more heavily

Debug mode: prints per-step drift info to the console
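For the Ramp curve and Channel weights settings, here's one plausible reading, assuming the ramp is a power curve progress ** (1 / ramp_curve), so values above 1 front-load the correction, and that channel weights simply scale each channel's shift. Both the formula and the names are assumptions, not the node's code.

```python
def ramp_weight(progress, ramp_curve):
    """Map 0..1 progress to a correction weight. ramp_curve=1 is linear;
    values above 1 raise early weights (front-loading), below 1 delay them."""
    return progress ** (1.0 / ramp_curve)


def channel_shifts(drifts, strength, progress, ramp_curve, channel_weights=None):
    """Per-channel DC shift, optionally scaled by a trust weight per channel."""
    if channel_weights is None:
        channel_weights = [1.0] * len(drifts)
    r = ramp_weight(progress, ramp_curve)
    return [strength * r * w * d for d, w in zip(drifts, channel_weights)]


print(ramp_weight(0.25, 1.0), ramp_weight(0.25, 2.0))  # 0.25 0.5
```

A channel weight of 0.0 in this sketch switches correction off for that channel entirely, while 1.0 applies the full ramped shift.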

In the examples, I used the node to target each photo's source colors individually, then mixed them together just for fun. It can do that as well, aside from its main purpose.

The examples also use the Ref Latent Controller node I released earlier this week.

Repo: https://github.com/capitan01R/ComfyUI-Flux2Klein-Enhancer

Sample workflow: https://pastebin.com/QTQkukpw

----------------------------------------------------------------------------------------------------------------------------------------------------

v2.6.0

Updated 04/06/2026

Note that the examples for the new version are only posted here. GitHub does NOT have the new examples, though the code is updated :)

Repo: https://github.com/capitan01R/ComfyUI-Flux2Klein-Enhancer

Sample workflow: https://pastebin.com/mz62phMe

Post with examples: here

So I have been working on my Flux2Klein-Enhancer node pack, and I made a few changes to some of its nodes to make them better and more faithful to what they claim to do. The results are pretty wild: this model is actually capable of a lot, it just needs the right tweaks. In this post I will show examples of what I achieved with preservation. Please note the node has more power than what I'm posting here, but showing more examples will take me longer, as these were on-the-go examples; you can still see the level of preservation. The slides are ordered from low to high preservation for both examples, followed by some random photos of the source characters (in the random ones I did not take the time to increase the preservation).

So the current setup is two nodes: one for your reference latent and one for text enhancement (meaning the model follows your prompt more closely).

The crucial nodes are FLUX.2 Klein Ref Latent Controller and FLUX.2 Klein Text/Ref Balance:

FLUX.2 Klein Ref Latent Controller is for your reference latent, and you only need to care about its strength parameter. It goes from 1 to 1000 for a reason: when you increase the balance parameter in the FLUX.2 Klein Text/Ref Balance node, you lean more toward the text and enhance it, so you will need to increase the strength in the Ref Latent Controller node to reintroduce your reference latent. The balance pushes toward the text, and the Ref Latent Controller brings your latent back.

Do NOT set the balance to 1.000, as it will ignore your latent no matter how hard you try to preserve it. That is why the value is a float: 0.999 is your max for photo edits!

Also note there are no fixed parameters for the best result, as that depends entirely on your input photo and prompt. For best results, lock the seed and tweak the parameters using the concept above. You can start from 1.00 for the strength in the Ref Latent Controller node and 0.50 for the Text/Ref Balance node.
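The 1–1000 strength range makes more sense with a toy model. Purely as an assumption for illustration (not the nodes' actual math): if the Text/Ref Balance node kept only (1 - balance) of the reference signal and the Ref Latent Controller multiplied it by strength, then high balance values would need very large strengths to compensate, and balance 1.0 would leave nothing for any strength to bring back. The helper names are hypothetical.

```python
def effective_ref_weight(strength, balance):
    """Toy model (assumption): balance keeps (1 - balance) of the reference
    signal, and the controller's strength multiplies it back up."""
    return strength * (1.0 - balance)


def strength_needed(target_weight, balance):
    """Strength required to restore a given effective reference weight."""
    if balance >= 1.0:
        raise ValueError("balance 1.0 removes the reference; no strength can restore it")
    return target_weight / (1.0 - balance)


print(effective_ref_weight(1.0, 0.5))      # 0.5: the suggested starting point
print(round(strength_needed(0.5, 0.999)))  # 500
print(effective_ref_weight(1000, 1.0))     # 0.0
```

In this toy model, going from balance 0.5 to 0.999 shrinks the reference share by a factor of 500, which is the kind of gap a 1–1000 strength range can cover.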

-

Node updated and added as an experimental BETA.

"FLUX.2 Klein Mask Ref Controller"

Explanation of the node's functions: here

Example workflow (drag and drop): here

Repo: https://github.com/capitan01R/ComfyUI-Flux2Klein-Enhancer

I'm working on a mask-guided regional conditioning node for FLUX.2 Klein... not inpainting, but something different.

The idea is to use a mask to spatially control the reference latent directly in the conditioning stream. The masked area gets targeted by the prompt while staying true to its original structure; the unmasked area is fully freed up for the prompt to take over. I've also tried it with zooming and with targeting one character out of three in the same photo, and so far it follows smoothly.

It's still early, but I'm already seeing promising results in preserving subject detail while allowing meaningful background/environment changes without the model hallucinating structure.

Part of the Flux2Klein Enhancer node pack

If you find this helpful :) https://buymeacoffee.com/capitan01r

*** Please note this is a beta version, as I'm still finalizing the stable release, but I wanted you guys to get a feel for it :) ***
