Update (April 14th, 2026): Lightricks has updated their LTX 2.3 distilled model (and Lora) to 1.1:

Model (1.1, fp8_scaled, by Kijai): https://huggingface.co/Kijai/LTX2.3_comfy/tree/main/diffusion_models

Distilled Lora 1.1: https://huggingface.co/Lightricks/LTX-2.3/tree/main

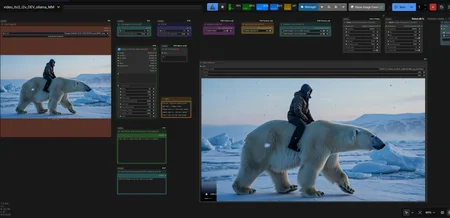

V2.5 LTX-2.3 DEV & Distilled Video with Audio

An Image to Video and a Text to Video workflow. Both can use your own prompts or Ollama-generated/enhanced prompts.

Works with the latest LTX 2.3 Distilled model (8 steps, CFG=1) or Dev model (20 steps, CFG=3).

Updated the processing for the DISTILLED and DEV models: select the DIST or DEV model in the loader node, then switch to the matching DIST or DEV processing pipeline. Each model now has its own dedicated processing.

DIST model pipeline: Standard Guider and Basic Scheduler, follows the manual sigmas issued by Lightricks

DEV model pipeline: MultiModal Guider and LTX Scheduler + Distilled Lora on latent upscaler
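The two pipelines can be summarized as a small lookup table. This is only a sketch and not part of the workflow: `PIPELINES` and `pick_pipeline` are hypothetical names, and guessing the pipeline from the checkpoint filename is an assumption made for illustration (in the workflow you switch the pipeline yourself). The step counts, CFG values, guider and scheduler names are taken from the notes above.

```python
# Hypothetical summary of the two processing pipelines described above.
PIPELINES = {
    "distilled": {
        "steps": 8,
        "cfg": 1.0,
        "guider": "Standard Guider",
        "scheduler": "Basic Scheduler",
        "notes": "follows the manual sigmas issued by Lightricks",
    },
    "dev": {
        "steps": 20,
        "cfg": 3.0,
        "guider": "MultiModal Guider",
        "scheduler": "LTX Scheduler",
        "notes": "Distilled Lora on latent upscaler",
    },
}


def pick_pipeline(model_filename: str) -> dict:
    """Guess the pipeline from a checkpoint filename.

    Assumption: the filename contains 'distilled' or 'dev'.
    """
    name = model_filename.lower()
    if "distilled" in name:
        return PIPELINES["distilled"]
    if "dev" in name:
        return PIPELINES["dev"]
    raise ValueError(f"cannot tell DIST from DEV for: {model_filename}")


# Example with a made-up filename:
settings = pick_pipeline("example-ltx-distilled.safetensors")
print(settings["steps"], settings["cfg"])
```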

Included a workflow version with the "RTX Video Super Resolution" node, which upscales videos at high speed.

Tip: With the latest Comfy and LTX updates, processing got faster for me, so I can increase scale_by in the sampler node from 0.5 to 0.6 or higher for crisper videos with only a minor impact on render time.
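To get a feel for what a higher scale_by means, here is a rough back-of-the-envelope sketch. It assumes that scale_by multiplies both the width and the height of the target resolution for the first sampling pass; that is an assumption about the node, and the 1280x720 target is just an example value. The helper names are hypothetical.

```python
def first_pass_size(width: int, height: int, scale_by: float) -> tuple:
    """Resolution of the first pass, assuming scale_by scales both sides."""
    return round(width * scale_by), round(height * scale_by)


def pixel_ratio(old_scale: float, new_scale: float) -> float:
    """How many times more pixels the first pass has after the change."""
    return (new_scale / old_scale) ** 2


print(first_pass_size(1280, 720, 0.5))   # (640, 360)
print(first_pass_size(1280, 720, 0.6))   # (768, 432)
print(round(pixel_ratio(0.5, 0.6), 2))   # 1.44
```

Under these assumptions, going from 0.5 to 0.6 gives the first pass about 44% more pixels. How much that costs in render time depends on your hardware.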

V2.3 LTX-2.3 DEV & Distilled Video with Audio

Downloads for LTX 2.3:

LTX-2.3 Distilled & Dev Models (fp8_scaled): https://huggingface.co/Kijai/LTX2.3_comfy/tree/main/diffusion_models

Textencoder1: (fp8_e4m3fn, same as LTX-2): https://huggingface.co/GitMylo/LTX-2-comfy_gemma_fp8_e4m3fn/tree/main

Textencoder2: (projection_bf16): https://huggingface.co/Kijai/LTX2.3_comfy/tree/main/text_encoders

Video & Audio Vae: https://huggingface.co/Kijai/LTX2.3_comfy/tree/main/vae

Loras:

Spatial upscaler (x2-1.1): https://huggingface.co/Lightricks/LTX-2.3/tree/main

Distilled Lora for upscaler (lora.384): https://huggingface.co/Lightricks/LTX-2.3/tree/main

Smaller, alternative Distilled Lora by Kijai: https://huggingface.co/Kijai/LTX2.3_comfy/tree/main/loras

Detailer Lora (same as LTX-2): https://huggingface.co/Lightricks/LTX-2-19b-IC-LoRA-Detailer/tree/main

Ollama Model (prompt only, fast): https://ollama.com/mirage335/Llama-3-NeuralDaredevil-8B-abliterated-virtuoso

alternative model with Vision (reads input image+prompt, slower): https://ollama.com/huihui_ai/qwen3-vl-abliterated

other model with Vision (great for I2V): https://ollama.com/huihui_ai/qwen3.5-abliterated

Smaller LTX 2.3 GGUF Dev or Distilled models work as well (replace the Checkpoint loader node with the Unet loader node from this custom node pack: https://github.com/city96/ComfyUI-GGUF):

models: https://huggingface.co/unsloth/LTX-2.3-GGUF/tree/main

save to models/unet/

V1.5 LTX-2 DEV Video with Audio including latest 🅛🅣🅧 Multimodal Guider

An Image to Video and a Text to Video workflow. Both can use your own prompts or Ollama-generated/enhanced prompts.

Replaced the Guider node with the latest Multimodal Guider node; see more details in the WF notes or here: https://ltx.io/model/model-blog/ltx-2-better-control-for-real-workflows Before, we had one CFG parameter for both audio and video. With the Multimodal Guider, we can now tweak audio and video separately, with even more parameters.
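As a rough illustration of what separate guidance per modality means, the sketch below applies the standard classifier-free guidance formula with one scale for video and another for audio. This is not the Multimodal Guider's actual implementation, which exposes more parameters; `cfg_mix`, `guide_av` and the tiny lists standing in for model predictions are hypothetical.

```python
def cfg_mix(cond, uncond, scale):
    """Standard classifier-free guidance: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


def guide_av(video, audio, video_cfg, audio_cfg):
    """Apply a separate CFG scale to each modality.

    `video` and `audio` are (cond, uncond) pairs of toy predictions.
    """
    return cfg_mix(*video, video_cfg), cfg_mix(*audio, audio_cfg)


# Toy predictions: (conditional, unconditional)
video = ([1.0, 2.0], [0.0, 1.0])
audio = ([0.5, 0.5], [0.0, 0.0])

v, a = guide_av(video, audio, video_cfg=3.0, audio_cfg=1.0)
print(v)  # [3.0, 4.0]
print(a)  # [0.5, 0.5]
```

With a scale of 1 the formula returns the conditional prediction unchanged, so in this toy example only the video branch is pushed away from the unconditional result.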

added a Power Lora Loader node to inject further Loras

use Image to Video Adapter Lora to improve motion for I2V: https://huggingface.co/MachineDelusions/LTX-2_Image2Video_Adapter_LoRa/tree/main

replaced a node to no longer require comfymath custom nodes

V1.0 LTX-2 DEV Video with Audio:

An Image to Video and a Text to Video workflow with your own prompts or Ollama-generated/enhanced prompts.

Set up for the LTX2 Dev model.

Uses the Detailer Lora for better quality and the LTX tiled VAE to avoid OOM errors and visible grid artifacts.

2-pass rendering (motion + upscale). The upscale pass uses the distilled Lora and the spatial upscale Lora.

Set up with the latest LTXVNormalizingSampler to increase video and audio quality.

Text to Video can use dynamic prompts with wildcards.
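To illustrate what a dynamic prompt does, here is a minimal sketch that expands `{a|b|c}` groups by picking one option at random. The brace syntax is an assumption borrowed from common dynamic-prompt tools; the wildcard node used in this workflow may support a different or richer syntax, and `expand_prompt` is a hypothetical helper.

```python
import random
import re

# Matches one innermost {option1|option2|...} group.
VARIANT = re.compile(r"\{([^{}]+)\}")


def expand_prompt(template: str, seed=None) -> str:
    """Replace every {a|b|c} group with one randomly chosen option."""
    rng = random.Random(seed)

    def pick(match):
        options = [opt.strip() for opt in match.group(1).split("|")]
        return rng.choice(options)

    return VARIANT.sub(pick, template)


template = "A {red|blue|green} car driving through {rain|snow}, cinematic"
print(expand_prompt(template, seed=42))
```

Passing the same seed gives the same prompt again, which helps when you want to reproduce a render.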

Download LTX-2 Files: (Workflow V1.0 and V1.5 only)

Find the Model/Lora Loader nodes inside the Sampler Subgraph node.

- LTX2 Dev Model (dev_Fp8): https://huggingface.co/Lightricks/LTX-2/tree/main

- Detailer Lora: https://huggingface.co/Lightricks/LTX-2-19b-IC-LoRA-Detailer/tree/main

- Distilled (lora-384) & Spatial upscaler Lora: https://huggingface.co/Lightricks/LTX-2/tree/main

- VAE (already included in above dev_FP8 model, but needed if you go for GGUF models): https://huggingface.co/Lightricks/LTX-2/tree/main/vae

- Textencoder (fp8_e4m3fn): https://huggingface.co/GitMylo/LTX-2-comfy_gemma_fp8_e4m3fn/tree/main

- Image to Video Adapter Lora (more motion with I2V): https://huggingface.co/MachineDelusions/LTX-2_Image2Video_Adapter_LoRa/tree/main

Save Location:

📂 ComfyUI/

├── 📂 models/

│ ├── 📂 checkpoints/

│ │ ├── ltx-2-19b-dev-fp8.safetensors

│ ├── 📂 text_encoders/

│ │ └── gemma_3_12B_it_fp8_e4m3fn.safetensors

│ ├── 📂 loras/

│ │ ├── ltx-2-19b-distilled-lora-384.safetensors

│ ├── 📂 latent_upscale_models/

│ │ └── ltx-2-spatial-upscaler-x2-1.0.safetensors

│ └── 📂 Clip/

│   └── ltx-2.3_text_projection_bf16.safetensors
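If a loader node reports a missing model, a quick check like the following can tell you which files from the tree above are not in place yet. This is a sketch under assumptions: it treats every folder in the tree as a subfolder of `models/`, uses the folder and file names exactly as listed, and `missing_files` is a hypothetical helper, not part of ComfyUI.

```python
from pathlib import Path

# Relative paths copied from the save-location tree above.
# Assumption: every folder sits directly under ComfyUI/models/.
EXPECTED_FILES = [
    "models/checkpoints/ltx-2-19b-dev-fp8.safetensors",
    "models/text_encoders/gemma_3_12B_it_fp8_e4m3fn.safetensors",
    "models/loras/ltx-2-19b-distilled-lora-384.safetensors",
    "models/latent_upscale_models/ltx-2-spatial-upscaler-x2-1.0.safetensors",
    "models/Clip/ltx-2.3_text_projection_bf16.safetensors",
]


def missing_files(comfy_root):
    """Return the expected files that do not exist under comfy_root."""
    root = Path(comfy_root)
    return [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]


if __name__ == "__main__":
    # Adjust the path to your own ComfyUI installation folder.
    for rel in missing_files("ComfyUI"):
        print("missing:", rel)
```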

Custom Nodes used:

https://github.com/Comfy-Org/Nvidia_RTX_Nodes_ComfyUI (RTX VSR Version)

Text 2 Video only:

Ollama help:

Install Ollama from https://ollama.com/

Download a model: go to a model page, choose a model variant, then hit the copy button, e.g. https://ollama.com/huihui_ai/qwen3-vl-abliterated

Open a terminal and paste the copied command, e.g.: ollama run huihui_ai/qwen3-vl-abliterated

The model will be downloaded and can then be selected in the green Comfy node "Ollama Connectivity". Hit "Reconnect" to refresh the list.
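If the node cannot find your model, you can check outside ComfyUI whether Ollama answers at all. The sketch below sends a prompt to Ollama's local REST API (`/api/generate` on the default port 11434) using only the Python standard library. It is not how the workflow's Ollama node works internally; `build_generate_request` and `enhance_prompt` are hypothetical helper names, and the model name is the example from the steps above.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def enhance_prompt(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to a running Ollama server and return its answer."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

With Ollama running and the model downloaded, a call such as `enhance_prompt("huihui_ai/qwen3-vl-abliterated", "Expand into a detailed video prompt: a cat on a beach")` should return the generated text. A connection error usually means the Ollama server is not running.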

Example longer Video

Description

LTX2 DEV Image to Video and Text to Video

(including Multimodal guider and LTXV Normalizing Sampler)