RH Invite Code:

https://www.runninghub.ai/?inviteCode=rh-v1279

New user with 1000 free RH credit

All my workflow and models are avaible on RunningHub

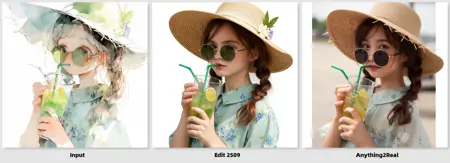

F2K 9B Anything2Real A

New version of Anything2Real on F2K model.

Based on previous version, adjusted the style to more realistic and less 3D

Flux2 Klein 9B

Based on strong transformation ablitiy on Flux2 Klein 9B

The new anything2real lora adjusted aesthetic and image fidelity

Prompt:

transform the image into high quality realistic photograph. {male/female}

The model mostly trained on female images. Please add male if the input image is male.

Lora strength:

0.8-1

The lora tends to be a little bit more "3D" use less strength to gain more realistic from base model.

RH App One Click Transform Image:

https://www.runninghub.ai/ai-detail/2016070515554783233/?inviteCode=rh-v1279

RH Workflow:

https://www.runninghub.ai/post/2016060164108980226/?inviteCode=rh-v1279

RH Comparer with base:

https://www.runninghub.ai/ai-detail/2016075121722658818/?inviteCode=rh-v1279

RH Workflow:

https://www.runninghub.ai/post/2016072419668140033/?inviteCode=rh-v1279

Hugging Face:

https://huggingface.co/lrzjason/Anything2Real/tree/main

f2k_anything2real.safetensors

Qwen Edit 2511

RH APP:

https://www.runninghub.ai/ai-detail/2007507982543757313/?inviteCode=rh-v1279

RH online workflow:

https://www.runninghub.ai/post/2007432627858444289/?inviteCode=rh-v1279

Anything2Real 2601 A is an adjusted version which mixed alpha more and with extra weight adjustment based on 2601. It produces stronger transformation between image and realistic.

This LoRA, built on Qwen-Edit 2511, incrementally maps images of any visual style into the photographic domain. The training objective is to minimize perceptual distance rather than to perform pixel-level reconstruction.

Training Protocol

Dataset

7 style-paired subsets, 200 source images ≥ 1024 px, covering portraits, landscapes, and objects.

Training Pipeline

Two-stage tuning: (a) trigger-word + long-caption pairs, (b) trigger-word only.

Layer-wise merge with Anything2Real α; weights manually tuned to preserve low-frequency structure while replacing high-frequency texture.

Recommended Positive Prompt

transform the image to realistic photograph. {detailed description}

Note: {detailed description} is optional. Style-related tokens such as “anime”, “cyber”, or “oil-painting” are strictly forbidden; their inclusion will inject out-of-domain noise.

Strength Tuning

Default range: 0.8–1.0.

If over-smoothing or texture loss occurs, decrease in 0.1 steps down to 0.5.

Should realism remain insufficient, blend with an additional photorealistic LoRA and adjust to taste.

Because “realism” is inherently subjective, first modulate strength or switch base models rather than further increasing the LoRA weight.

Contact

Feel free to reach out via any of the following channels:

Twitter: @Lrzjason

Email: [email protected]

QQ Group: 866612947

WeChat ID:

fkdeaiCivitAI: xiaozhijason

Description

FAQ

Comments (43)

Looks like it's Flux Kontext of Flux lora. It works with Flux kontext with no errors and no visible effect

Are you sure that it's qwen image lora? I've tried with 2509 and got an error

- Exception Message: The size of tensor a (96) must match the size of tensor b (32) at non-singleton dimension 1

I am 100% sure It is qwen edit lora and tested with official model

something is definitely wrong, "lora key not loaded: transformer.transformer_blocks.59.attn.add_k_proj.lora_A.weight

lora key not loaded: transformer.transformer_blocks.59.attn.add_q_proj.lora_A.weight

lora key not loaded: transformer.transformer_blocks.59.attn.add_v_proj.lora_A.weight

lora key not loaded: transformer.transformer_blocks.59.attn.to_add_out.lora_A.weight

lora key not loaded: transformer.transformer_blocks.59.attn.to_k.lora.down.weight

lora key not loaded: transformer.transformer_blocks.59.attn.to_out.0.lora.down.weight

lora key not loaded: transformer.transformer_blocks.59.attn.to_q.lora.down.weight

lora key not loaded: transformer.transformer_blocks.59.attn.to_v.lora.down.weight

lora key not loaded: transformer.transformer_blocks.59.img_mlp.net.2.lora_A.weight

lora key not loaded: transformer.transformer_blocks.59.txt_mlp.net.2.lora_A.weight" getting these errors with qwen-image-edit THOUGH it is working and actually makes a recognizable difference

same thing happen on me

please share the comfyui workflow you are using

@maxfluxai254 It could be used on runninghub or you could download the workflow on huggingface. https://huggingface.co/lrzjason/QwenEdit-Anything2Real_Alpha/tree/main

I can confirm the same thing is happening to me

@miggaroz676 Might be update the comfyui version? I run at local and runninghub both are ok. I updated comfyui recently.

Same here.

I use nunchaku with this lora loader: https://github.com/ussoewwin/ComfyUI-QwenImageLoraLoader

Got the same but lora works. Just some weights are messed up.

Grok is free and pretty good at fixing Compfyui issues, paste a workflow screenshot, the text, or .json workflows. maybe chatGPT can do it also..

I just notice I'm getting "lora key not loaded too"...and i guess its making difference, since using 4 or 8 steps lightning, on first iteration, at preview, shows image transformed into real, but in 2nd iteration it turns back to anime style again.

@xiaozhijason I used your workflow but this still happening

@sondroal I just noticed it happen. But it doesn't affect the result. I freezed 59 layer while training the lora. 59th layer doesn't affect the results

@xiaozhijason I keep getting images created but I'm a beginner so I'm nervous when I see some messages.. so I asked.

Thank you for your response!

If you are using Nunchaku and encounter the error "The size of tensor a (xxx) must match the size of tensor b (xxx)", this indicates that this LoRA is incompatible with the Nunchaku architecture. Please note that this is not an issue the LoRA author can resolve, as it stems from inherent compatibility limitations within the Nunchaku framework regarding certain LoRAs. In this situation, the only solution is to switch to using a standard model or a GGUF quantized model.

Is this for Qwen Edit, or Qwen Edit 2509 (Qwen Edit Plus)? Your example compares against 2509, but all the other text just mentions Qwen Edit.

Qwen Edit 2509.

Please mix the training dataset with non-asian people too. For some reason most of the anime2real models make every character look asian, even changing the eye color and hair color. Thank you!

All you have to do is add desired race/important (don't add much, less is more) details in the llm prompt and it fixes it...

ex: change the picture 1 to realistic photograph with a caucasian woman with blue hair and blue eyes.

and that changed output from asian to caucasian and kept the blue hair/eyes from the picture instead of changing both brown.

不愧是志佬

I was unable to get anything close to the examples imagens, only high level CGI like...and yep, its only works at range of 0.75 - 0.90...

It has bad cases. You might use another realistic lora on top of this lora to enhance the transfer.

Hello, I’ve been using the LoRA and workflow you provided, and they’ve been working extremely well. Thank you very much for creating them.

I have one request regarding a feature that I hope might be considered in a future update. Would it be possible to allow the use of multiple reference images—such as three images—similar to the original Qwen Image Edit 2509, instead of being limited to a single image as it is now?

I often work on converting multiple 3D-rendered character images into realistic photos. Since these images feature the same character in different poses and camera angles, I process several of them together. Overall, the results are excellent, but one thing I wish could be improved is that the character’s hairstyle and facial features tend to come out slightly different across each generated image.

To address this, I’d like to try referencing an additional image—such as a character sheet or a close-up portrait of the character—so I can transform image #1 while using image #2 as a guide for the character’s face and hairstyle. I thought it might be possible to handle this as part of the prompt, which is why I’m reaching out to ask if adding multi-image support could be considered.

Thank you again for your work.

默认没有beta57这个调度器啊 哪里找的?

nunchaku支援嗎?

thanks, i love this, but the images sometimes are too dark.

Adjust lora strength and add prompt to describe the environment. It is a known issue of this alpha version

@xiaozhijason okey, thanks brother. 👍

It's really good, but my one complaint is that it can't really handle unusual skin colors (purple, blue etc.) It just tends to make them all white.

Yes, it is a limited due to dataset. You might try to low strength and use prompt to force the model do it.

THANK YOU!!! this might be my favorite lora of all-time...its amazing.

Thank you for creating this excellent LoRA! I've tested it with the standard qwen-image-edit-2509 model, and the results are fantastic.

However, I wanted to note that it is currently incompatible with the Nunchaku quantized model. I want to clarify that this is not an issue with your LoRA, but rather an architectural limitation within Nunchaku itself.

Specifically, Nunchaku's CPUOffloadManager pre-allocates SVD quantization buffers with fixed shapes. While this works for standard LoRAs, any LoRA that modifies the structure of the MLP or Attention layers (resulting in changed ranks or dimensions) will trigger a 'Shape Mismatch' error during loading. This LoRA appears to modify those specific layers, which conflicts with Nunchaku's fixed buffers.

This isn't a major issue for me, as I can simply switch to using the standard or GGUF model formats. I am just sharing these findings as a reference for other users who might be attempting to run this with Nunchaku.

这个LORA 用nunchaku K采样器会报错显示 The size of tensor a (192) must match the size of tensor b (128) at non-singleton dimension 1别的同类没问题

非常感谢你的分享,这个模型非常轻量,你的lora生成的脸又比另一个cos的lora好看不少,现在2511 lighting出了,有打算支持吗?

会基于2511训练一个新的,在准备训练集

This looks great. Is there also a model/workflow that goes the opposite? Realistic -> illustration?

tried using this on wan2gp and got this error

"The generation of the video has encountered an error, please check your terminal for more information. 'addmm_(): argument 'mat1' (position 1) must be Tensor, not NoneType'"

the quant type the wangp has downloaded for you does not match what this lora expects, try adding a better quant model or do the sensible thing and learn comfyui

Depending on your hardware, comfy may not be worth the hassle. 4070ti super 16gb vram i9 12th gen 64gb ram and comfy is slower with ooming. I'm also spoiled by wan2gp's custom perks. I came here for the same reason you did so I explored w2gp and ran some images through Ditto sim2real. I had fun using Ditto for video effects in the past but it will gen images as well. Unlike the video using globaltype, sim2real images were awful. I eventually got good results only using 1080p. Then I tried Qwen2511 with same real-life photo-realism prompt and it knocked it out the park every time in around 30s. Whatever this lora may add, I can't imagine its worth the trouble to use comfy. There were 2 images Ditto gave a unique appeal that I preferred but the rest was no contest. 2511 is more than capable. Just to add, Ive found playing with various loras for other qwen models auto-downloaded in w2gp will work and give different results. You can also download the lora that lets you change cam position using prompts like rotate the cam 45 degrees to the right. Sometimes using it without changing cam position has helped follow my prompt when otherwise wouldn't. Hope this helps.

I found some old Midjourney/Leonardo/SDXL images made over two years ago. Anything2Real does a great job bring these old images into the modern space. Great job