READ THE DESCRIPTION

Do not download LoRa (NOT NECESSARY)

This is a simple and powerful tutorial, I uploaded a LORA file because it was mandatory to upload something, it has nothing to do with the tutorial. Tribute and credit to hnmr293.

Step 1: Install this extension

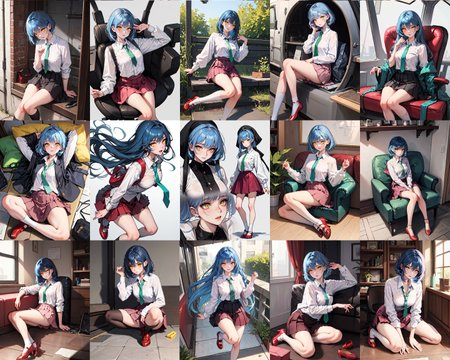

Step 2: Restart Stable Diffusion (close and open)

Step 3: Enable Cutoff (in txt2img under controlnet or additional network)

Step 4: Put in positive prompt: a cute girl, white shirt with green tie, red shoes, blue hair, yellow eyes, pink skirt

Step 5: Put in negative prompt: (low quality, worst quality:1.4), nsfw

Step 6: Put in Target tokens (Cutoff): white, green, red, blue, yellow, pink

Step 7: Put 2 of weight. Leave everything else as the image.

Tips:

0# Give priority to colors, first them and then everything else, 1girl, masterpiece... but without going overboard, remember tip #3

1# The last Token of Target Token must have "," like this: white, green, red, blue, yellow, pink, 👈 ATTENTION: For some people it works to put a comma at the end of the token, for others this gives an error. If you see that it has an error, delete it.

2# The color should always come before the clothes. Not knowing much English happened to me that I put the colors after the clothes or the eyes and the changes were not applied to me.

3# Do not go over 75 token. It is a problem if they go to 150 or 200 tokens.

4# If you don't put any negative prompt, it can give an error.

5# Do not use token weights below 1 eg: (red hoddie:0.5)

20 images were always worked on and in most of the tests it was 100%. If they put, for example, green pants, some jean pants (blue) can appear, also with the skirts a black skirt can appear. These "mistakes" can happen.

That's why I put 95% in the title because 1 or 2 images out of 20 images may appear with this error.

Description

FAQ

Comments (83)

civitai needs a whole new category for this type of stuff, like tutorials.

do i need to update to latest sd build for this?

(i was getting a runtime error and wouldnt generate when using)

That's weird, if you want, open a ticket here: hnmr293/sd-webui-cutoff: Cutoff - Cutting Off Prompt Effect (github.com)

I'm not seeing any errors, but I can't generate either. I click generate and it goes gray as if it's going to work but just freezes and I can't interrupt or stop it.

@skullzy77 but in the CMD you do not see any error? something that one can identify where the problem is. I read that if you are doing it with more than 75 tokens it can cause problems.

@DitamAi yeah, mine usually has quite a lot of tokens sadly, ':D

@Yabba xD Mine too, as long as they don't put it with more tokens, it's time to use it "lightly"

@DitamAi Nope, didn't show any errors in CMD. I may have used more than 75 tokens though. I'll try again today with less.

@skullzy77 What I saw in some people is that if you put a comma in the final token it can give you an error, if you have the comma there try to remove it to see if it solves it.

[Edit] Seems like there is exponential(?) scaling with preprocessing time the more textual inversion embeddings you use in prompt. More than one or two causes prohibitively long delays. With 3 TI in my negative prompt it took ~30 seconds to begin generation.

[Edit2] Seems like the problem is not with TI specifically, but the number of tokens in the prompt. As I approach ~60 or so in the negative prompt (with 12 in positive prompt) it becomes prohibitively long.

So, as is this plugin is not useful for my workflow. Hopefully the plugin author finds methods to improve the performance

I think I read on Github that it can only be used from 75 tokens and below. I only tried it with simple prompts. I'm going to try it with complicated ones to see how it works out for me.

You're right, the preprocessing time scales with tokens in general, not just TI (just TI tend to take more tokens per word).

@Machi Maybe later they will do it with 150 or 200 tokens. You know how it is, it starts slowly and PUM! already this week you can run ChatGPT3 on your own computer locally.

谢谢你

The gif you're using is annoying as fuck.

I did it on purpose. If you go to my other models you will see other more beautiful designs. I saw these results so good that I wanted to share it as quickly as possible.

Yeah, i adblockered the hell out of it. You could barely make it more annoying if you tried. with 11 images in 1 second at the end of that gif. I conveyed my point weeks ago in the discord. There's a discussion on it. Chime in there maybe. Acceptable solutions all seem to be there in the discussion though so hopefully just a matter of time.

@dillion1920 sorry and by the way you are my favorite actress. :3

@DitamAi some people get seizures for that kind of speed. Just fyi. The dude had a point

https://i.imgur.com/EMWv8Mh.png

can't generate, how can I fix it?

How much token are you using? If you use more than 75 it can give this type of error.

I had the same eror but with only 5 tokens =/

https://media.discordapp.net/attachments/1058668279419371520/1084808431808028715/Screenshot_1.png

@jacky00025 and @frozentear

Try writing a ticket here: https://github.com/hnmr293/sd-webui-cutoff

I also have the same issue i don't know if python version matters but I'm currently using 3.10.6

@DitamAi 23only.

1# The last Token of Target Token must have "," like this: white, green, red, blue, yellow, pink,

↑ get error with this, works with "white, green, red, blue, yellow, pink" so you don't need last comma?

@DitamAi Okay I will try more things before sending a ticket. Just got that error on my own prompt. But sadly removing the other colors from negative prompt didn't work so far. Looks like using the simpler prompt just like the example in the tutorial is working for me.

@jacky00025 ohhhhhhhh!!! If I don't put the comma, it gives me an error. xD

@frozentear but you must put the colors in Target Token, not in the negative prompt.

@invalid Try to do what Jacky did.

@DitamAi It works for me, what I did was I generated an image without the cutoff, then I did with and that seemed to work.

@jacky00025 Can you upload some generated images?

@DitamAi https://i.imgur.com/jITV9XB.png

@DitamAi Yes I did that, I followed the instructions before posting here. But back at that time I noticed there was "white background" in negatives and removed it in case there was some sort of conflict.

@frozentear Still not working bro?

@DitamAi I have had mixed results. But my computer is not really suited to use SD at the moment. So don't worry about it, thanks in any case! This looks very promising.

@frozentear "Send a virtual hug"

i had the same issue i had to close both the ui tab and the cmd window when i restarted to get it to run at all

Really useful tutorial, thanks a lot for sharing!

Do you mind sharing how you got the VAE dropdown and the Clip Skip slider in the first image of your description, below Step 1 ?

You can quickly access settings by editing "Quicksettings list" under Settings, for example

sd_model_checkpoint,CLIP_stop_at_last_layers,sd_vae

Setting > User Interface > Quicksettings list >

Paste this: sd_model_checkpoint, sd_vae, CLIP_stop_at_last_layers

Printscreen: https://prnt.sc/fV2WJOaGIBsA

Now let me see the result images, wit this "trick" plz :3

I couldn't get this to work. It either didn't fix the color issues, or completely froze the generation, requiring a total restart of the webui.

@chaosleges Could it be that you used more than 75 token?

@DitamAi No, I made sure both positive and negative were 75 or less. It simply hangs forever once the generate button is pressed. No errors or anything.

@chaosleges I just updated the indications in the description. Some people get an error if they put the comma at the end of the token in target token, if you put it, remove it, to see if that's the problem. If it continues like this, I suggest you submit a ticket.

@DitamAi That was the problem, yes. The comma caused the freezing. But even after fixing this, the colors are no different with this enabled than they are without it. Do positive and negative prompts have to total 75 or each being 75 should be fine?

@chaosleges I wouldn't know how to tell you there, but the best thing is that you try it with basic indications and then go on increasing in detail.

You could technically link to the zip file which is the extension lol

I say it in the description.

Exactly DarkAgent, he could technically take all the credits for itself.

@hexc0de I don't want any credit, from minute one in the description I put who the creator is.

this isn't about credit just saying you could also upload it as the content lol

Anyway, how to install it? I am super newbie. TIA!

I think you should add read description in the title. I'm sure some people will just look at the title and not realize what this is.

Ready!

Does this work with any adjectives beside colours?

I have only tested it with colors, although supposedly if you put "Tennis Jordan 23" in the prompt and in the target token and the AI in its database has training on those Shoes, it may appear in all your images instead of getting confused with sandals or heels.

I tried it with even more basic descriptors, but for facial features. Unfortunately, the effect was at best a minor change, at worst corrupted the image.

good work! model for the first 3 grids? i love the clean art style

I'm sorry, I don't know much English... but the prompt with which I made the girls you see here, are in each image. I'm sorry, I didn't misunderstand what you asked for.

@DitamAi No worries. I want to know the 'stable diffusion model' used to make the first image. If it is private, then I understand

@hfpan Look at the prompt of each image.

for the girl in the green skirt, use this: 1girl, beige blouse, green skirts, red eyes, red hair, white shoes

@DitamAi I see the prompt., sorry I should have been clearer. I'm asking for the model, like Anything v3, AOM3, or DreamShaper?

@hfpan ohh sorry bro, AOM3

very very useful. thanks

Thank you!

Hmm... not working for me at all. Not sure if it is because I'm using directml webui version (AMD graphic card)

Probably.

This only works on some models (anime/illustration mostly).

I'm not really getting much difference and definitely not more closely matching my prompts.

Edit: Actually, I've noticed that you have to add all the tags that might conflict with each other. So if you have 5 colors you need to use all 5 colors in you cutoff or you won't get much difference.

Can confirm it doesn't work on an AMD card with the directml webui :(

有用,但同时也没用,有点鸡肋... 最大的缺陷,在于对tag的字数(token)限制到了75以内(我几乎不可能仅输入这么少的字数....)。而当tag字数足够少的时候,即使不用这个插件,也不会出现很大面积的 “串色” 问题。但它又确实能起到锦上添花的作用,可以帮助修复那些细节的颜色错误。

It says "install this extension." Can anybody see what the name/URL of the extension is? Nevermind, I just noticed the obvious link.

I need more sleep.

I'm guessing this doesn't work with token merge?

Nvm, looks like it even works with token merge! Quality extension.

Is this different from putting BREAK in your prompt to split it up? I read that BREAK was meant to address the same problem with color contamination.

Well BREAK doesn't seem to work -- for me atleast

Tried this extension and tried with the BREAK method. None seems to provide consistent results.

not working.

I tried your positive + negative prompt, but get several of the following errors:

*** Error creating infotext for key "Cutoff targets" Traceback (most recent call last): File "D:\Stable Diffusion\stable-diffusion-webui\modules\processing.py", line 807, in create_infotext generation_params[key] = value[index] IndexError: list index out of range---I tried with and without the comma at the end of target tokens. Either gives me the errors. The images are generated, but colors are not where they are supposed to be. Do you have any advice on how to proceed?

Yep, same thing happens here. Sometimes it shows this error and then the colors are messed up. Anyone has any ideas?

I only use the on site generator what app, interface or software is this to generate images locally?

I am using webui forge and when I install this extension, it doesnt show up in txt2img. any help would be appreciated

Details

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.