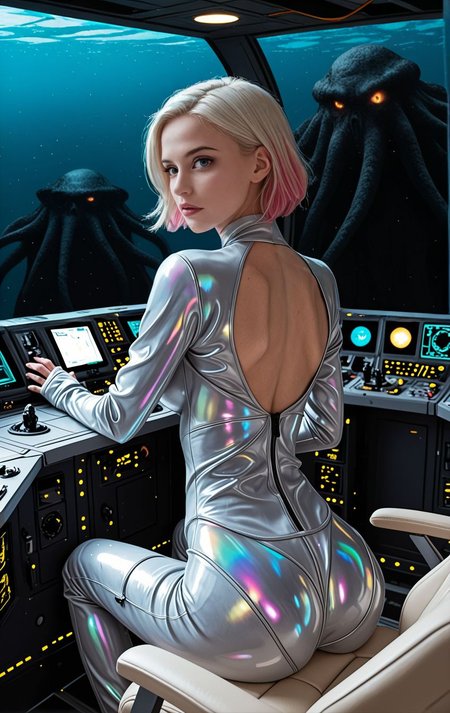

Latent Chimera is an Illustrious-based model with an emphasis on semi-realism.

Switching to Forge improved memory management significantly, making SDXL models more practical. After exploring various models, I decided to merge my favorites to experiment with new possibilities. Latent Chimera has non-Illustrious SDXL models mixed back in to boost creativity and reduce overfitting.

This model is the result of combining multiple sources using the SuperMerger extension for A1111. Using methods such as MBW (Merge Block Weighted), cosine interpolation, and static weight blending, I aimed to produce a merge distinct enough to warrant sharing. The result was shaped by ongoing experimentation with merge strategies.
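To make two of the merge methods named above more concrete, here is a minimal sketch of static weight blending and a block-weighted (MBW-style) variant. It is not SuperMerger's code and not the recipe used for this model. The weights are plain lists of floats rather than tensors, and the `blend`, `block_of`, and `merge` helpers, the key names, and the alpha values are all hypothetical. Cosine interpolation is left out because its exact formula depends on the tool.

```python
# Illustrative sketch only: not SuperMerger's implementation.
# Weights are plain lists of floats standing in for tensors.

def blend(a, b, alpha):
    """Static weighted sum: (1 - alpha) * a + alpha * b, element by element."""
    return [(1.0 - alpha) * x + alpha * y for x, y in zip(a, b)]


def block_of(key):
    """Hypothetical block lookup: the first two dot-separated parts of the key."""
    return ".".join(key.split(".")[:2])


def merge(model_a, model_b, base_alpha, block_alphas=None):
    """Merge two weight dicts; block_alphas overrides base_alpha per block."""
    block_alphas = block_alphas or {}
    merged = {}
    for key, weights_a in model_a.items():
        if key not in model_b:
            merged[key] = list(weights_a)  # keep A's weights when B lacks the key
            continue
        alpha = block_alphas.get(block_of(key), base_alpha)
        merged[key] = blend(weights_a, model_b[key], alpha)
    return merged


# Toy "checkpoints" with made-up key names and values.
model_a = {"input_blocks.0.weight": [0.0, 1.0], "output_blocks.0.weight": [2.0, 4.0]}
model_b = {"input_blocks.0.weight": [1.0, 0.0], "output_blocks.0.weight": [4.0, 2.0]}

# Same alpha everywhere (static weight blending).
static = merge(model_a, model_b, base_alpha=0.5)

# Per-block alpha: the output block is taken entirely from model B.
mbw = merge(model_a, model_b, base_alpha=0.5, block_alphas={"output_blocks.0": 1.0})
```

With a single alpha, every layer moves the same distance toward model B. The block-weighted variant lets some parts of the network lean on one donor while others stay balanced.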

All source model licenses were reviewed prior to merging, and the most permissive terms available under the inherited usage conditions were applied. Merge recipes are included in the metadata. The VAE is baked in.

Reality Check

This isn’t intended as a flagship model. My aim here isn’t to drop a “top-tier” release, but to explore merge behavior, stylistic bias, and scene composition in a controlled way. I avoid rushing merges right after donor model releases, both out of respect for the creators and to keep the focus on understanding mechanics—not chasing hype. Most of my excessively long prompts are reused across tests so I can compare bias and behavior between models; while I still make occasional adjustments, I generally post results as they come—flaws, quirks, and all.

Description

⚠️ Inpainting-only model. Do not use for txt2img.

Use this checkpoint only in inpainting workflows on an existing image. It was made to repair masked regions, not to generate a whole image from scratch.

A key advantage of an inpainting model is this: if the base image is first generated with the base model and then refined with the matching inpainting model, the result is often more coherent, in both style and structure, than if unrelated models were mixed. For Civitai users, this commonly means: generate your SFW base image first, then inpaint only the masked portion with the matching inpainting model.

For best results, use it alongside tools and workflows such as these:

ADetailer

https://github.com/Bing-su/adetailer

A1111

https://github.com/AUTOMATIC1111/stable-diffusion-webui

Forge

https://github.com/lllyasviel/stable-diffusion-webui-forge

ComfyUI

https://github.com/comfy-org/ComfyUI

InvokeAI

https://invoke-ai.github.io/InvokeAI/

ADetailer detection / segmentation models

https://huggingface.co/Bingsu/adetailer

The basic idea is this: start with an image, mask the region you want to change, and then run the model only on that masked portion. If you load this as though it were an ordinary txt2img model, the output will most often be wrong, broken, or confusing.
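The mask step can be pictured with a small toy sketch. It assumes a hard 0/1 mask and uses a hypothetical `fake_inpaint` function in place of the model. Tools such as A1111, Forge, and ComfyUI handle this internally and usually soften the mask edges, so this shows the idea only, not how any of them implement it.

```python
# Toy illustration of masked compositing; images are nested lists of numbers.

def composite(original, generated, mask):
    """Keep original pixels where mask is 0, take generated pixels where mask is 1."""
    return [
        [g if m else o for o, g, m in zip(row_o, row_g, row_m)]
        for row_o, row_g, row_m in zip(original, generated, mask)
    ]


def fake_inpaint(image):
    """Hypothetical stand-in for the model: returns a constant image of the same size."""
    return [[9 for _ in row] for row in image]


original = [[1, 1, 1], [1, 1, 1]]
mask = [[0, 1, 0], [0, 1, 1]]  # 1 marks the region to change

result = composite(original, fake_inpaint(original), mask)
```

Pixels outside the mask come straight from the original image, which is why the surrounding picture stays intact while only the masked region changes.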