This is the most OPTIMAL quant (in terms of quality vs. speed/VRAM) of the HiDream Full model, packed with a completely "lobotomized" (= uncensored) text encoder (Meta Llama 3.1).

This file collection contains two main ingredients that make HiDream way better and !UNcensored:

Nice trick: converted-flan-t5-xxl-Q5_K_M.gguf is used instead of t5-v1_1-xxl-encoder-Q5_K_M.gguf for better text-to-vector translation/encoding;

The main secret ingredient: meta-llama-3.1-8b-instruct-abliterated.Q5_K_M.gguf - instead of Meta-Llama-3.1-8B-Instruct-Q5_K_M.gguf --> Read about the process of LLM abliteration/uncensoring here: https://huggingface.co/blog/mlabonne/abliteration (get other uncensored LLMs from his repos on Huggingface...)

So ... simply do:

Unpack the archive file!

place: hidream-i1-full-Q5_K_M.gguf file in ComfyUI\models\unet folder;

place: converted-flan-t5-xxl-Q5_K_M.gguf, meta-llama-3.1-8b-instruct-abliterated.Q5_K_M.gguf, clip_g_hidream.safetensors, clip_l_hidream.safetensors in ComfyUI\models\text_encoders folder;

place: HiDream.vae.safetensors under ComfyUI\models\vae folder;

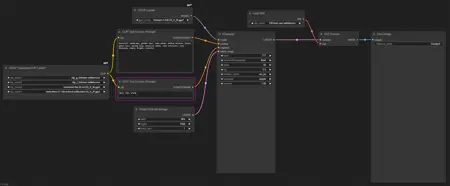

use my UNcensored HiDream-Full Workflow.json as starting workflow to test how it works;

in case of VRAM problems - use my VRAM optimized bat file: run_nvidia_gpu_fp8vae.bat to start ComfyUI (put it directly into the ComfyUI folder);

... this way you can get nice, high-quality HiDream-Full image generation with 12 GB of VRAM (tested!)
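
The placement steps above can be sketched as a small script. This is only a sketch under assumptions: the file names and target folders are copied from the steps above, while the `place_files` helper, the `PLACEMENT` table, and the example paths are hypothetical and not part of the archive.

```python
from pathlib import Path
import shutil

# Target subfolder (relative to the ComfyUI root) for each file named in the steps above.
PLACEMENT = {
    "hidream-i1-full-Q5_K_M.gguf": "models/unet",
    "converted-flan-t5-xxl-Q5_K_M.gguf": "models/text_encoders",
    "meta-llama-3.1-8b-instruct-abliterated.Q5_K_M.gguf": "models/text_encoders",
    "clip_g_hidream.safetensors": "models/text_encoders",
    "clip_l_hidream.safetensors": "models/text_encoders",
    "HiDream.vae.safetensors": "models/vae",
    "run_nvidia_gpu_fp8vae.bat": ".",  # goes directly into the ComfyUI folder
}


def place_files(unpacked_dir, comfy_root):
    """Copy every known file found under unpacked_dir into its ComfyUI folder.

    Returns the names of the files that were not found in the unpacked archive.
    """
    unpacked = Path(unpacked_dir)
    root = Path(comfy_root)
    missing = []
    for name, subdir in PLACEMENT.items():
        matches = list(unpacked.rglob(name))  # search nested folders too
        if not matches:
            missing.append(name)
            continue
        dest = root / subdir
        dest.mkdir(parents=True, exist_ok=True)
        shutil.copy2(matches[0], dest / name)
    return missing


# Example call (both paths are placeholders, adjust them to your setup):
# print(place_files("unpacked_archive", r"C:\ComfyUI"))
```

An empty list returned by `place_files` means every file from the steps was found and copied; anything listed still has to be placed by hand.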

Update1: You can also use other uncensored Meta Llama 3.1 versions in text encoder part, for example this image: https://civarchive.com/images/71818416 is using DarkIdol-Llama-3.1-8B-Instruct-1.2-Uncensored-GGUF

Update2: Try this CLIP-G --> https://civarchive.com/models/1564749?modelVersionId=1773479

Use it well ;)

HFGL

Comments (71)

Awesome to start seeing a better uncensored version of HiDream! Are all the needed files you mention part of the .zip file?

yes - all of them are inside of that zip archive (for inconvenient convenience...) - CivitAI prohibits them all as one upload unless they are in zip :(

Can this be done with the Q8 versions for those of us who have more resources available?

Yes, but you need at least the uncensored Llama; FLAN is an optional but nice choice.

can i presume that the actual model is still original (just Q5) ? only the LLAMA and FLAN are doing the work ?

@bk227865750 the model is OG, the trick is in Clip LLMs (Meta Llama + Google FLAN)

Yes. I'm working on it now and will have uploads shortly.

@_Envy_ <3

@OliviaRossi What uncensored llama are you using? Is there a safetensors file of it? I can only find a diffusers one, which doesn't do much good with comfy.

@_Envy_ @OliviaRossi I think I may have got it working with these https://huggingface.co/dumb-dev/flan-t5-xxl-gguf/tree/main/Q8, https://huggingface.co/mlabonne/Meta-Llama-3.1-8B-Instruct-abliterated-GGUF/tree/main the Q8 model from there. It doesn't throw errors at least and it runs.

No errors, but at the end no image, just noise.

That happens with HiDream. I was getting the same problem when my ComfyUI bat file had the command-line switch --force-fp16, so try playing with that bat file: maybe use my version or adapt it.

@OliviaRossi Using your bat file leads to the same result - blueviolet noise

@fsandersphoto and my workflow? sampler and other settings? HiDream is very sensitive to them ...

@OliviaRossi I am using your workflow and all files from the zip

@fsandersphoto oh, you need to switch ComfyUI to the nightly build and update to the latest. The reason this happens is that long prompts go over the token allowance; it was reported and fixed in a more recent update. Be warned, though: the more recent update breaks some workflow nodes.

@ByteCrafter You are right, update to nightly breaks some workflow nodes, but still no images after update.

@fsandersphoto here is a very fresh image: https://civitai.com/images/72107327 - maybe copy nodes from it and try? (updated Comfy and workflow)

@fsandersphoto then it's likely that something that should be installed is missing.

@ByteCrafter yup - something is off in ComfyUI settings (commandline switches - the most probable cause), maybe some conflicts with nodes
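
Since command-line switches keep coming up as the likely cause, here is a minimal sketch for checking which switches a launch bat file passes. The helper names and the sample line are illustrative assumptions; the sketch only scans text for `--switch` patterns and does not know which switches ComfyUI actually accepts.

```python
import re


def find_switches(bat_text):
    """Return every '--something' style switch found in the text, lowercased."""
    return [m.lower() for m in re.findall(r"--[A-Za-z0-9][A-Za-z0-9_-]*", bat_text)]


def has_switch(bat_text, switch):
    """Case-insensitive check for a single switch."""
    return switch.lower() in find_switches(bat_text)


# Illustrative launch line, not copied from any specific bat file.
sample = r".\python_embeded\python.exe -s ComfyUI\main.py --windows-standalone-build --force-fp16"
print(find_switches(sample))
print(has_switch(sample, "--force-FP16"))
```

To check a real file, read it with `Path("your_file.bat").read_text()` and pass the text in. Note that switches inside commented-out lines are matched as well.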

I'm unconvinced. I generated 18 images, 9 with this setup and 9 with the normal one. And I see no indication that this "uncensored" setup is any better at nudes than the normal one.

I agree with you based on my tests. On top of that the workflow provided generated blurry messy images.

@Pantilicious blurry and messy means something is wrong with your Comfy updates / settings. I had the same problem with HiDream until I changed them drastically: even if ComfyUI works fine for Flux, HiDream is way more sensitive to tiny changes in the environment and generation parameters ;)

here is more straightforward example https://civitai.com/images/71911200 <-- use natural language, not just "pussy" (you have to explain to both included LLMs what you want)

@Pantilicious blurry and messy - on some of the scheduler / sampler combos - same as the HiDream itself is

These encoders are an actual improvement. But I am using the text-encode-only (TE-only) version of the flan_T5 encoder to save even more memory

I think my attached version is also TE-only; if I'm wrong, then you have a good point!

What about Llama 3.1 Lexi Uncensored? That's supposedly the uncensored variant of Instruct.

well, thanks for the suggestion - gonna test it too - very interesting point!!!

so ... after testing Lexi Llama (uncensored) - I've found this (another one), for example this image: https://civitai.com/images/71818416 is using DarkIdol-Llama-3.1-8B-Instruct-1.2-Uncensored-GGUF, which is better than Lexi for generating hands

Nice! I'll take a look. Thx!

@MysticMindAi but the one that is in this file collection works the best in my numerous tests

@OliviaRossi been getting great results with both the abliterated and DarkIdol variants thus far!

@MysticMindAi nice to know!

Take a look at your GGUF section; I think you have the wrong clip loader in the build.

The workflow in this image's metadata has both quadruple GGUF loaders (used one at a time ...) - both give the same result (tested!)

https://civitai.com/images/71811027

No matter what I try, I absolutely cannot get an image of a woman not wearing pants. This shows off GREAT tits—thank you for that. But I just can't get a single pussy to show up. Sad times.

by the way - pants are on with Euler/Beta but Off with dpmpp_2m/Beta ;)

try to change Scheduler/Sampler combo!

Yes, it's annoying me everyone is saying this model is uncensored when it clearly isn't, I've tried tons of text encoders etc but no joy.

@azeli https://civitai.com/images/71558294 - this image compares same settings with censored and uncensored one - and yes, female pelvis area is not an easy task for it but ... boobs are gorgeous ;)

@azeli here is an example for this matter: https://civitai.com/images/71911200 <-- use it (copy nodes)

Fantastic work! Any chance you could back up the model to huggingface? Apologies if this is already done. Thank you greatly for your work!

all parts of this model are from various repos on Huggingface - the point is to prove the concept, and you can get whichever quant size suits you best from there ;)

and TY!

Does it have to use meta-llama-3.1 or can we use something like gemma-3-12b-it-abliterated-GGUF?

Unfortunately, it has to be Meta Llama 3.1. I haven't tested Gemma yet - doing it right now (for science!)

UPDATE: no, an error pops up - "unexpected architecture - Gemma"

Working great so far, thank you for sharing this!!!

TY!

u r welcome!!!

Can you add Q2 GGUF versions of these models?

You can easily get all the needed parts just by searching for them on https://huggingface.co website. All parts of this model are from there.

But you should know that Q2-Q3 are unusable, since they produce very low-quality output. What you can do is try to fit Q4-K-S into your VRAM. Also, instead of the quad GGUF clip loader you can use a single GGUF clip loader (now that ComfyUI supports it) and load only the abliterated Meta Llama as the clip (no need for the others, but you have to switch the clip type to hidream).

@OliviaRossi Since my main laptop's HDD went kaput, I'm stuck for now using an old potato laptop & it can only handle Q2 versions :(

I just use Hidream to set main scene composition & SD1.5 or SDXL as refiner models.

Am I correct in understanding that it's "Uncensored LLM" used as text encoder that converts/makes this model into uncensored version?

That means I can use any Q2 model I already have ie Q2+Un-LLM=Uncensored Hidream Q2 ???

BTW what is the difference between outputs generated by "uncensored" & "abliterated" LLM models?

@AKDesigns censored LLMs simply are trying to limit your prompts within "decency" and the most impressive part - uncensored LLMs tend to create way more artful SFW images, simply because they are not artificially limited in their output (if that makes sense ...)

@AKDesigns yes, you can "construct" your own model file-set = Q2 if you want to

@OliviaRossi is there any way to run Q4 in 6 GB of VRAM? I've tried Q3 and it's literally unusable.

for HiDream I1, especially with quantized or dev/full models, it's highly recommended to use the ModelSamplingSD3 node to set the shift parameter (e.g., shift=3.0 for full, 6.0 for dev, 3.0 for fast). The shift value directly influences the denoising and sampling behavior, and skipping it can noticeably affect image quality and prompt adherence. Adding this node to your workflow can really help you get the best out of HiDream!

Yes, I tested it, and in many cases it makes the output much worse. This may be because I'm using a different sampler/scheduler combination or because I'm using different models for clip than what was intended. In any case, sampling has to be tested to meet your needs!

UPDATE: again re-tested ModelSamplingSD3 (3-6 strength) and it is really detrimental on various samplers / schedulers - not going to use it at all
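
To make the effect of the shift parameter concrete, here is a small sketch of the time-shift remapping commonly used for SD3-style flow models. It is an assumption that ModelSamplingSD3 applies exactly this formula to HiDream; the thread does not confirm it, and the function name is made up for illustration.

```python
def shift_sigma(sigma, shift):
    """Remap a noise level sigma in [0, 1]; shift=1.0 leaves it unchanged.

    Assumed formula: shift * sigma / (1 + (shift - 1) * sigma).
    """
    return shift * sigma / (1.0 + (shift - 1.0) * sigma)


# Compare how three shift values move the same mid-range noise levels.
for shift in (1.0, 3.0, 6.0):
    row = [round(shift_sigma(s, shift), 3) for s in (0.25, 0.5, 0.75)]
    print(shift, row)
```

Under this assumption, a larger shift pushes the intermediate noise levels toward 1, so more of the sampling steps are spent at high noise. That may be part of why results differ so much between sampler/scheduler combinations.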

Do we need to download text encoder/vae or is it baked

HiDream uses the same VAE as FLUX

What's the best way to train a LoRA for this? I found that training the TE works well, but OneTrainer only loads a Huggingface folder, not safetensors or GGUF.

I hope someone knows the answer ...

can I download somewhere just the llama and t5? I already got the unet and it's too many GB for me

all parts of this model were taken from huggingface.co - so: YES!

PSA: If you have at least 12GB VRAM and 16GB system RAM, you can make this workflow a bit more reliable by using the fp8 15GB Dev/Full model instead of GGUF Q5. It improves quality and seems a bit more reliable. It also works fine with GGUF Clip loaders, so you can use Q5 FLAN and Llama with it. Remember to switch ComfyUI to LowVRAM mode.

Yes, Q8 versions of both the text encoder and the model itself fit in 12 GB of VRAM. For that you should not have too many nodes installed in ComfyUI and, preferably, turn OFF the preview (which eats up some additional memory).

Workflow is throwing an error about missing nodes:

QuadrupleClipLoaderGGUF

LoaderGGUF

Not really an error :) anytime you import a workflow that uses nodes you don't have installed, ComfyUI will inform you about it

Close the notification, click Manager in the top right, then Install Missing Custom Nodes. It'll walk you through the rest
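
If you want to see which node types a workflow needs before importing it, a sketch like the one below can help. It assumes the workflow was saved from the ComfyUI interface, where the JSON has a top-level "nodes" list and each node carries a "type" field. The helper names and the `installed` set are hypothetical; the sketch does not query ComfyUI for what is actually installed.

```python
import json


def node_types(workflow):
    """Collect the node type names used in a UI-format workflow dict."""
    return {node["type"] for node in workflow.get("nodes", []) if "type" in node}


def missing_types(workflow, installed):
    """Return the node types the workflow uses that are not in `installed`."""
    return sorted(node_types(workflow) - set(installed))


# Tiny illustrative workflow; a real one would be loaded with json.load(open(path)).
workflow = json.loads(
    '{"nodes": [{"id": 1, "type": "QuadrupleClipLoaderGGUF"},'
    ' {"id": 2, "type": "LoaderGGUF"},'
    ' {"id": 3, "type": "KSampler"}]}'
)
print(missing_types(workflow, {"KSampler"}))
```

This only lists the names. Installing the missing nodes is still done through Manager, as described above.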

is it possible to use it in SDNEXT?

if it supports this architecture (HiDream) - then - yes

Is it possible to edit existing pictures with this? I'm new to ComfyUI and not sure how to add that to the workflow. Thanks.

it is simple - instead of an empty latent, you need to pass in your image with a mask ;)