Gives dudes a big raging hard-on in their pants.

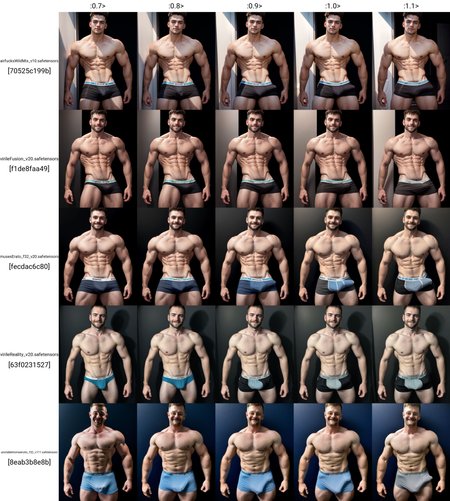

In the prompt, use the trigger word "vpl" and, optionally, the kind of pants or underwear the subject is wearing (underwear works best so far). Adding the word "bulge" may improve results in some cases. The LoRA seems to do better with semi-realistic models and is most effective at a weight of around 0.7 to 1.0.

P.S. This is my very first LoRA, and I intend to release an improved version in the future, so stay tuned! If you've generated any good VPLs, please post or share them, as I would love to add them to the training data for v2.

Description

Trained on 139 images with 2 repeats for 50 epochs. The model was saved and sampled after every completed epoch. This release is epoch #22 out of 50.
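As a rough sanity check on those numbers, the step counts can be worked out from the dataset size, the repeats, and the batch size of 2 listed in the config below. This is only a sketch: the helper `steps_per_epoch` is a hypothetical name, and it assumes every batch is full apart from the last one. With bucketing enabled, each resolution bucket is batched separately, so the real count may come out slightly higher.

```python
import math

def steps_per_epoch(num_images: int, num_repeats: int, batch_size: int) -> int:
    # Hypothetical helper: optimizer steps in one epoch, ignoring bucketing.
    return math.ceil(num_images * num_repeats / batch_size)

per_epoch = steps_per_epoch(num_images=139, num_repeats=2, batch_size=2)
total_steps = per_epoch * 50      # full run of 50 epochs
released_steps = per_epoch * 22   # the released epoch 22 checkpoint

print(per_epoch, total_steps, released_steps)
```

Under these assumptions, the released checkpoint has seen a bit less than half of the planned training steps.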

[[subsets]]

num_repeats = 2

keep_tokens = 1

caption_extension = ".txt"

shuffle_caption = true

flip_aug = false

is_reg = false

image_dir = "E:/Projects/vpl_lora/v7/dataset"

[noise_args]

[logging_args]

[general_args.args]

pretrained_model_name_or_path = "E:/Projects/v1-5-pruned.safetensors"

mixed_precision = "fp16"

seed = 23

clip_skip = 1

xformers = true

max_data_loader_n_workers = 1

persistent_data_loader_workers = true

max_token_length = 225

prior_loss_weight = 1.0

cache_latents = true

max_train_epochs = 50

[general_args.dataset_args]

resolution = 512

batch_size = 2

[network_args.args]

network_dim = 16

network_alpha = 9.0

[optimizer_args.args]

optimizer_type = "AdamW8bit"

lr_scheduler = "cosine"

learning_rate = 0.0001

[saving_args.args]

output_dir = "E:/Projects/vpl_lora/v7/build"

save_precision = "fp16"

save_model_as = "safetensors"

output_name = "hardvpl"

save_every_n_epochs = 1

[bucket_args.dataset_args]

enable_bucket = true

min_bucket_reso = 256

max_bucket_reso = 1024

bucket_reso_steps = 64

[sample_args.args]

sample_prompts = "E:/Projects/vpl_lora/v7/prompt.txt"

sample_sampler = "ddim"

sample_every_n_epochs = 1

[optimizer_args.args.optimizer_args]

weight_decay = "0.1"

betas = "0.9,0.99"
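One detail of the config above that affects the recommended weight range: in kohya-style LoRA training, the learned update is scaled by `network_alpha / network_dim`, and the weight set in the prompt multiplies on top of that. The sketch below just does the arithmetic for this config. The function name `lora_scale` is hypothetical, and the exact way a given UI applies the weight is an assumption.

```python
def lora_scale(network_alpha: float, network_dim: int) -> float:
    # Hypothetical helper: built-in scaling factor applied to the LoRA update.
    return network_alpha / network_dim

scale = lora_scale(network_alpha=9.0, network_dim=16)

# Effective multiplier at the low and high ends of the suggested weight range.
effective = {weight: weight * scale for weight in (0.7, 1.0)}

print(scale, effective)
```

Since alpha is lower than dim here, the update is already damped before any user weight is applied, which may be part of why weights close to 1.0 still behave well.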
